Not every API promising GPT-5 access actually delivers it. A new paper from the CISPA Helmholtz Center for Information Security audited 17 shadow APIs used across thousands of projects and found troubling evidence that many resellers replace official frontier models with weaker alternatives. The study, titled "Real Money, Fake Models: Deceptive Model Claims in Shadow APIs," raises serious questions about transparency and reliability in the booming third-party AI reseller market.

Shadow APIs Underperform Official Endpoints by Up to 47%

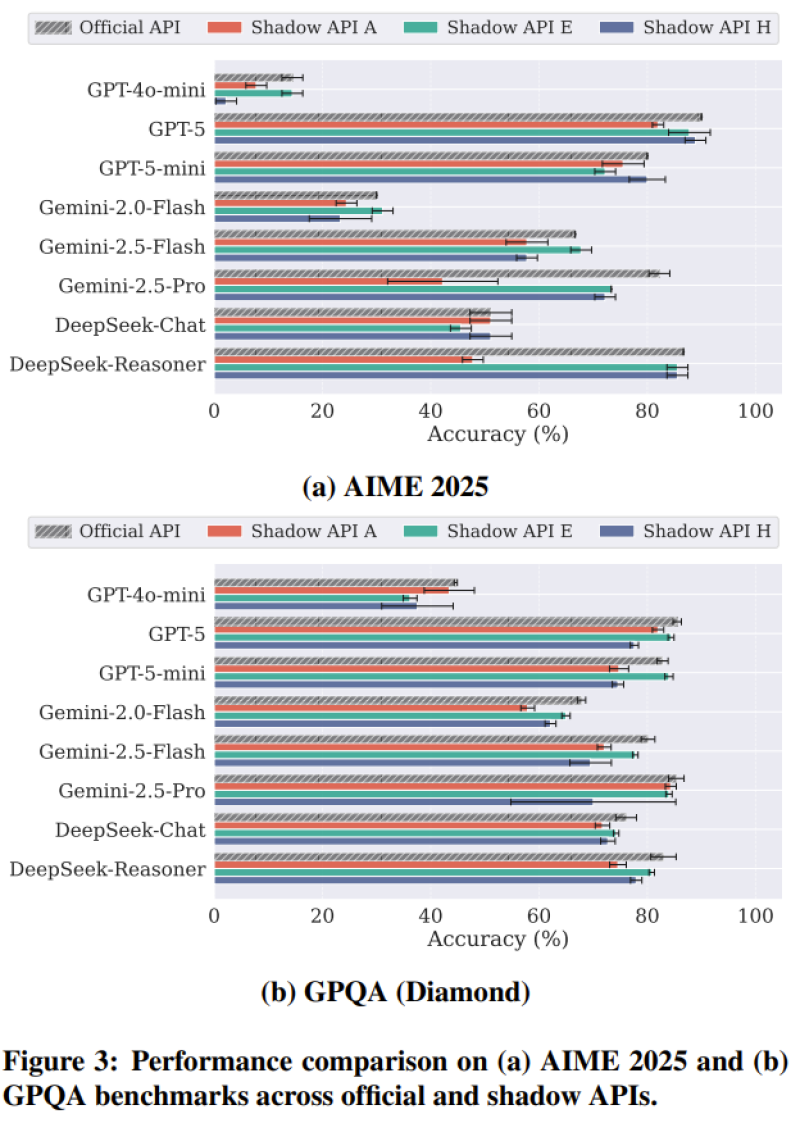

Researchers tested shadow services against official APIs on major benchmarks, including AIME 2025 and GPQA Diamond. The results were stark: official model endpoints consistently outperformed their shadow counterparts, with some shadow services trailing by as much as 47.21 percent.

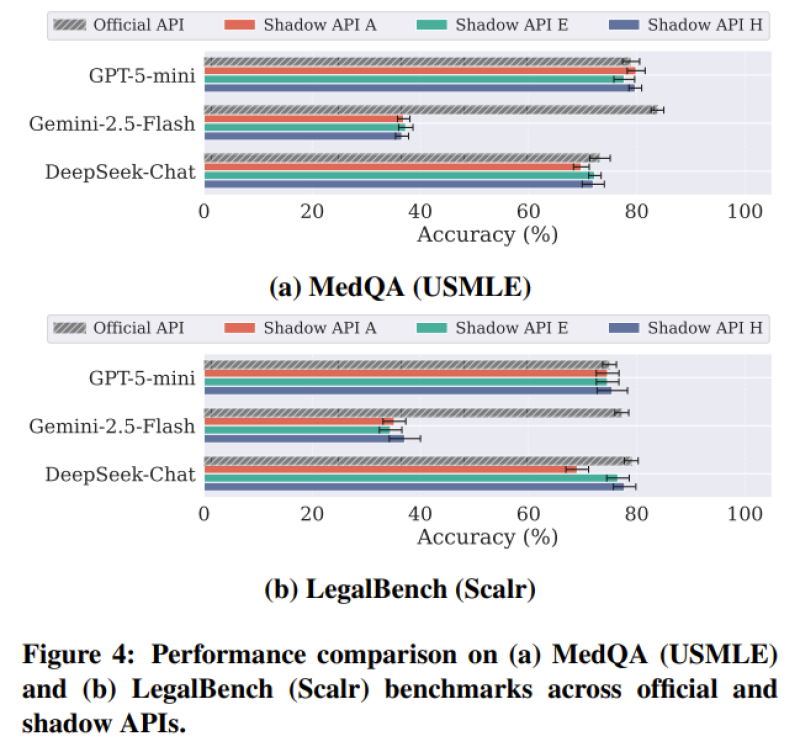

The gap was not limited to general-purpose evaluations. Domain-specific benchmarks like MedQA (USMLE) and LegalBench (Scalr), designed to measure medical and legal reasoning, showed similarly weak results from shadow providers.
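The comparison described above can be illustrated with a minimal sketch. It assumes exact-match grading and reports the gap in percentage points; the article does not say whether the 47.21 percent figure is absolute or relative, and the function names and toy data below are hypothetical placeholders, not the study's code or results.

```python
from typing import Sequence


def accuracy(predictions: Sequence[str], answers: Sequence[str]) -> float:
    """Fraction of predictions that exactly match the reference answers."""
    if len(predictions) != len(answers):
        raise ValueError("predictions and answers must have the same length")
    if not answers:
        raise ValueError("need at least one benchmark item")
    correct = sum(p.strip() == a.strip() for p, a in zip(predictions, answers))
    return correct / len(answers)


def accuracy_gap(official: Sequence[str], shadow: Sequence[str],
                 answers: Sequence[str]) -> float:
    """Official accuracy minus shadow accuracy, in percentage points (assumed metric)."""
    return 100.0 * (accuracy(official, answers) - accuracy(shadow, answers))


# Toy placeholder data for illustration only; NOT taken from the study.
answers = ["A", "C", "B", "D"]
official_preds = ["A", "C", "B", "A"]  # 3 of 4 correct
shadow_preds = ["A", "B", "D", "A"]    # 1 of 4 correct

gap = accuracy_gap(official_preds, shadow_preds, answers)
print(f"gap: {gap:.2f} percentage points")  # prints "gap: 50.00 percentage points"
```

In practice, both prediction lists would come from sending the same benchmark prompts to the official endpoint and to the reseller under identical sampling settings, so that any gap reflects the model served and not the test setup.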

The scale of the problem is underlined by identity verification failures: nearly 46 percent of tests suggested that services claiming to deliver frontier models were instead routing requests through smaller or modified systems.

For context on how far reasoning performance has advanced in legitimate settings, consider research in which an 8B model achieved a benchmark score of 94.5 using the PaCoRe framework. It illustrates what optimized, transparent architectures can achieve even at modest model sizes.

Real Costs, Hidden Risks for Developers and Enterprises

The commercial stakes are growing fast. Shadow APIs are not fringe products: they are integrated into thousands of active developer projects. When these pipelines silently deliver inferior outputs, the consequences ripple outward: compromised research findings, faulty product decisions, and eroded trust in AI-driven applications. Legitimate providers, meanwhile, are building tools that address scale and cost together. xAI, for example, introduced Grok Batch API processing, which handles large request volumes asynchronously at reduced cost.

The broader economic context adds urgency to the authentication problem. AI systems are now embedded in real revenue-generating workflows, as illustrated by recent reports about an AI system generating $10,000 in just seven hours through real task execution. As commercial reliance on AI deepens, ensuring that organizations actually receive the models they pay for will likely become a defining challenge for the industry in the years ahead.

Eseandre Mordi
