A research team from the Chinese Academy of Sciences has developed BitVLA, a 1-bit vision-language-action model built specifically for robotic manipulation tasks on constrained hardware. As Simplifying AI reported, the model shrinks a robot's AI system by 11x, allowing deployment on low-cost devices without any reliance on cloud infrastructure.

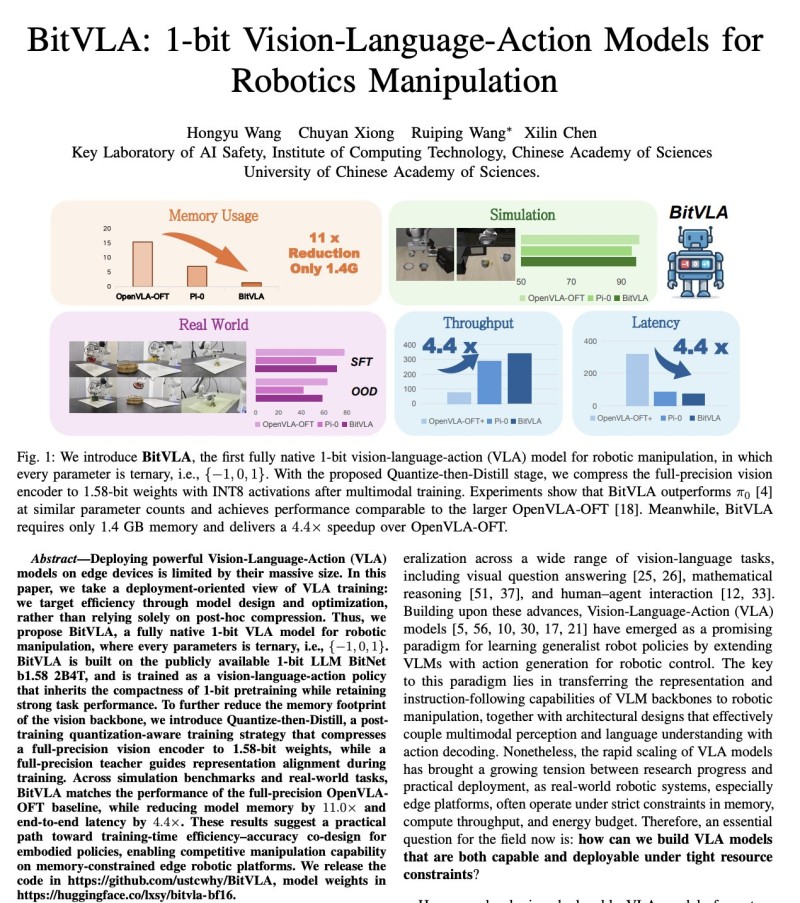

What makes this possible is a fundamental change in how the model stores its weights. BitVLA quantizes them to ternary values (-1, 0, and 1), which carry about 1.58 bits of information each, instead of the 16- or 32-bit floating-point numbers that typically drive up memory demands. The result is a system that requires just 1.4GB of memory while holding its own against full-precision models in both simulated environments and real-world robotic tasks.
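To make the ternary idea concrete, here is a minimal Python sketch of absmean quantization, the scheme described for BitNet b1.58: divide the weights by their mean absolute value, round, and clip to {-1, 0, 1}, keeping one floating-point scale per tensor. Whether BitVLA uses exactly this recipe is an assumption, and the helper names `absmean_ternary` and `dequantize` are hypothetical.

```python
import numpy as np

def absmean_ternary(weights: np.ndarray, eps: float = 1e-8):
    """Quantize float weights to {-1, 0, 1} plus one per-tensor scale.

    Assumption: follows the absmean recipe described for BitNet b1.58;
    not confirmed to match BitVLA's exact quantizer.
    """
    # Per-tensor scale: the mean absolute weight value (eps avoids divide-by-zero).
    scale = np.abs(weights).mean() + eps
    # Scale, round to the nearest integer, then clip into [-1, 1].
    ternary = np.clip(np.round(weights / scale), -1, 1).astype(np.int8)
    return ternary, scale

def dequantize(ternary: np.ndarray, scale: float) -> np.ndarray:
    # Approximate reconstruction: every weight becomes -scale, 0, or +scale.
    return ternary.astype(np.float32) * scale

# Example: quantize a small random weight matrix.
rng = np.random.default_rng(0)
w = rng.normal(0.0, 0.02, size=(4, 8)).astype(np.float32)
q, s = absmean_ternary(w)
print(q)
print("scale:", s)
```

Only the int8 ternary matrix and a single scale need to be kept, which is where the memory savings start.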

How BitVLA Achieves 4.4x Faster Robotics Performance

Memory savings are only part of the story. BitVLA also delivers 4.4x higher throughput and 4.4x lower latency than baseline models such as OpenVLA-OFT. In practical terms, robotic systems can react and move faster, all while running on hardware that would have struggled with previous-generation models. The gains come from simplifying the arithmetic at every inference step: with ternary weights, matrix multiplications can be computed largely with additions and subtractions, and far less weight data has to move through memory.
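The point about simpler arithmetic can be illustrated with a matrix-vector product. In this hypothetical Python sketch (`ternary_matvec` is an illustrative name, not part of any BitVLA release), each output is the sum of inputs where the weight is +1 minus the sum where it is -1, followed by a single multiply by the shared scale. It shows the idea only; real speedups depend on packed weights and optimized kernels, and the reported 4.4x figures are not derived from it.

```python
import numpy as np

def ternary_matvec(ternary: np.ndarray, scale: float, x: np.ndarray) -> np.ndarray:
    """Compute (scale * ternary) @ x without per-weight multiplications.

    Hypothetical illustration: weights of +1 add the input, -1 subtract it,
    and 0 skips it entirely. One float multiply per output row applies the scale.
    """
    out = np.empty(ternary.shape[0], dtype=np.float64)
    for row in range(ternary.shape[0]):
        plus = x[ternary[row] == 1].sum()    # inputs whose weight is +1
        minus = x[ternary[row] == -1].sum()  # inputs whose weight is -1
        out[row] = scale * (plus - minus)    # single multiply for the row
    return out

# Check against an ordinary floating-point product.
rng = np.random.default_rng(1)
weights = rng.integers(-1, 2, size=(3, 6)).astype(np.int8)  # values in {-1, 0, 1}
x = rng.normal(size=6)
assert np.allclose(ternary_matvec(weights, 0.02, x), 0.02 * (weights @ x))
```

The Python loop is for readability; production kernels vectorize this pattern over packed weights.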

This kind of edge-ready performance has real consequences for how robots are built and deployed. Systems that once needed a data center connection can now carry their intelligence locally, making them cheaper, more reliable in low-connectivity settings, and easier to scale into consumer or industrial products. The open-source robotic hands covered in "3 Open-Source Robotic Hands Now Sense Touch in Real Time" point in the same direction: capable hardware is getting lighter and more self-contained.

BitVLA and the Shift Toward Lightweight Robotic AI

BitVLA fits into a broader pattern across the AI industry. The push to run powerful models locally, without expensive GPUs or cloud subscriptions, is reshaping what's possible in robotics, edge computing, and embedded AI. Reports such as "xAI Hiring Engineers While Training Grok Code on 1 Million H100 GPUs" show the opposite end of the spectrum: frontier training keeps scaling up even as deployment moves toward minimalism.

At the same time, architectural choices are evolving at the system level too. The reasoning in "Why Multi-Agent AI Systems With Specialized Roles Are Outperforming Single Models" mirrors what BitVLA demonstrates at the model level: specialization and efficiency often beat raw scale. A model that does one thing extremely well on minimal resources is increasingly competitive with a generalist running on expensive infrastructure.

Advances like BitVLA point to a future where robotics can function independently of expensive infrastructure.

Key capabilities demonstrated by BitVLA include:

- 11x reduction in overall model size through 1-bit ternary quantization

- Memory footprint of just 1.4GB - deployable on consumer-grade hardware

- 4.4x throughput increase over baseline models such as OpenVLA-OFT

- 4.4x reduction in latency, enabling faster real-time robotic response

- Comparable accuracy to full-precision models in simulation and real-world tasks

- No cloud dependency - fully local, edge-ready execution
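The memory figures come down to how many bits each weight occupies. A ternary value carries log2(3), about 1.58 bits; one simple way to get close to that in storage is to pack five ternary values into one byte, since 3 to the 5th power is 243, which fits in a byte's 256 codes. The Python sketch below uses hypothetical helpers `pack_ternary` and `unpack_ternary`; this packing format is an assumption for illustration, not BitVLA's documented storage scheme. Its roughly 10x per-weight ratio versus FP16 ignores scales and any components kept at higher precision, so it is not a derivation of the reported 11x.

```python
import math
import numpy as np

TRITS_PER_BYTE = 5  # assumed packing: 3**5 = 243 codes fit in one uint8

def pack_ternary(ternary: np.ndarray) -> np.ndarray:
    """Pack values in {-1, 0, 1} into uint8, five base-3 digits per byte."""
    flat = ternary.astype(np.int64).ravel() + 1  # map {-1, 0, 1} -> {0, 1, 2}
    pad = (-len(flat)) % TRITS_PER_BYTE
    flat = np.concatenate([flat, np.ones(pad, dtype=np.int64)])  # pad with encoded 0
    groups = flat.reshape(-1, TRITS_PER_BYTE)
    powers = 3 ** np.arange(TRITS_PER_BYTE)  # [1, 3, 9, 27, 81]
    return (groups * powers).sum(axis=1).astype(np.uint8)  # max code is 242

def unpack_ternary(packed: np.ndarray, count: int) -> np.ndarray:
    """Inverse of pack_ternary; returns the first `count` values as int8."""
    codes = packed.astype(np.int64)[:, None]
    powers = 3 ** np.arange(TRITS_PER_BYTE)
    digits = (codes // powers) % 3  # recover base-3 digits per byte
    return (digits.ravel()[:count] - 1).astype(np.int8)

# Back-of-the-envelope storage per weight (ignores scales and
# any layers that stay in higher precision).
fp16_bits = 16
packed_bits = 8 / TRITS_PER_BYTE  # 1.6 bits per weight
print(f"ideal: {math.log2(3):.2f} bits, packed: {packed_bits} bits, "
      f"ratio vs FP16: {fp16_bits / packed_bits:.1f}x")
```

Packing five values per byte wastes only 13 of 256 codes; simpler 2-bit packing is easier to decode but stores 25% more.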

For robotics engineers and AI researchers, BitVLA represents a credible path to deploying capable manipulation models in cost-sensitive environments. As 1-bit and low-bit quantization techniques mature, the gap between edge deployment and cloud-grade performance looks narrower than it did even a year ago.

Victoria Bazir
