Researchers have introduced ReBalance, a training-free framework that tackles one of the most overlooked inefficiencies in modern AI: models that think too much or not enough. The system dynamically adjusts reasoning depth in real time using confidence signals, cutting token usage while keeping answers accurate. As DailyPapers reported, the approach requires no additional training and works directly at the inference stage.
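To make the idea of confidence-driven reasoning depth concrete, here is a minimal sketch. It is not ReBalance's actual mechanism, which the report does not detail beyond its use of confidence signals. The `Step` type, the `gated_reasoning` function, and the `stop_above` and `min_steps` thresholds are all hypothetical names and values chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class Step:
    text: str
    confidence: float  # hypothetical confidence score in [0, 1]


def gated_reasoning(steps, stop_above=0.9, min_steps=2):
    """Keep reasoning steps until confidence is high enough.

    Illustrative assumption only: stop once a step's confidence reaches
    `stop_above`, but always keep at least `min_steps` steps so the
    answer is not cut short too early.
    """
    kept = []
    for step in steps:
        kept.append(step)
        if len(kept) >= min_steps and step.confidence >= stop_above:
            break
    return kept


# Made-up trace: confidence rises as the model works through the problem.
demo = [
    Step("restate the problem", 0.40),
    Step("set up the equation", 0.70),
    Step("solve and verify", 0.95),
    Step("re-verify the same result", 0.97),
]

kept = gated_reasoning(demo)
print([s.text for s in kept])
```

In this toy trace the loop stops after the third step, so the redundant re-verification is dropped while the `min_steps` floor guards against stopping before any real work is done.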

Overthinking vs. Underthinking: Why Token Balance Matters

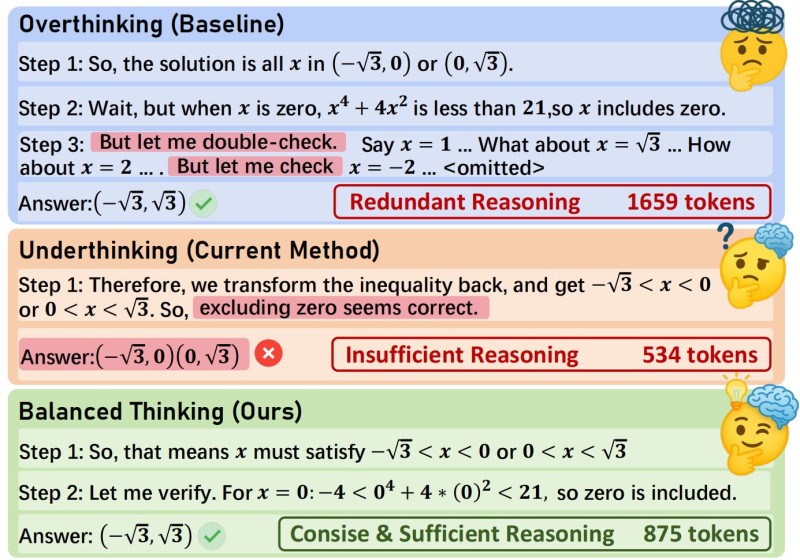

The problem ReBalance addresses is well-defined. Unconstrained models often burn around 1,659 tokens on intermediate steps that add nothing to the final answer. Strip too much away, and you get a lean 534-token response that gets the answer wrong.

ReBalance finds the middle: roughly 875 tokens per query, enough to keep essential verification steps without redundant expansion. The framework isolates which steps actually matter and skips everything else, a targeted approach that mirrors efforts like Nanobot, which reportedly cut agent code by 99%.
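The three token counts quoted above are easier to compare as percentages. The short calculation below uses only those figures; the constant names and the `percent_change` helper are illustrative, and the report does not say whether the counts are averages or come from a single example.

```python
# Token counts as quoted in the article.
OVERTHINKING_TOKENS = 1659   # unconstrained reasoning
UNDERTHINKING_TOKENS = 534   # over-trimmed reasoning with a wrong answer
BALANCED_TOKENS = 875        # ReBalance's reported middle ground


def percent_change(before, after):
    """Relative change from `before` to `after`, in percent."""
    return (after - before) / before * 100


# Savings relative to the unconstrained run (reported as a positive number).
saving_vs_overthinking = -percent_change(OVERTHINKING_TOKENS, BALANCED_TOKENS)
# Extra tokens spent relative to the over-trimmed run.
extra_vs_underthinking = percent_change(UNDERTHINKING_TOKENS, BALANCED_TOKENS)

print(f"Saved vs. overthinking: {saving_vs_overthinking:.1f}%")
print(f"Extra vs. underthinking: {extra_vs_underthinking:.1f}%")
```

On these numbers, the balanced response uses about 47% fewer tokens than the unconstrained one and about 64% more than the over-trimmed one that gets the answer wrong.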

A Broader Shift Toward Smarter Inference in AI Systems

ReBalance is part of a wider turn in AI development away from simply making models bigger and toward making them smarter at inference time. Other recent work shares the same priority: AutoGeo's two-model framework rewrites content to improve AI search visibility without scaling model size, and research on multi-agent systems, in which specialized roles are outperforming single models, reinforces the idea that smarter design beats raw scale. ReBalance fits squarely into this shift: by treating reasoning depth as a variable to be managed rather than a fixed output, it points toward a future where inference efficiency is a first-class metric alongside accuracy.

Eseandre Mordi
