Moonshot AI has unveiled Attention Residuals, a new architectural method that replaces fixed layer accumulation in large language models with dynamic, attention-based selection, significantly improving long-range reasoning and output stability at scale.

Why Standard Residual Connections Fall Short in Deep LLMs

Traditional LLM architectures depend on residual connections that accumulate outputs from all previous layers using fixed weights. As researcher Rohan Paul highlighted, this design leads to uncontrolled growth of hidden states and the progressive dilution of early-layer information as models scale deeper. The result is degraded long-context reasoning and unstable gradient behavior at depth. This matters beyond benchmarks: AI systems are already generating $10,000 in 7 hours through real work tasks, and the reliability of deep reasoning directly determines what those systems can actually deliver.

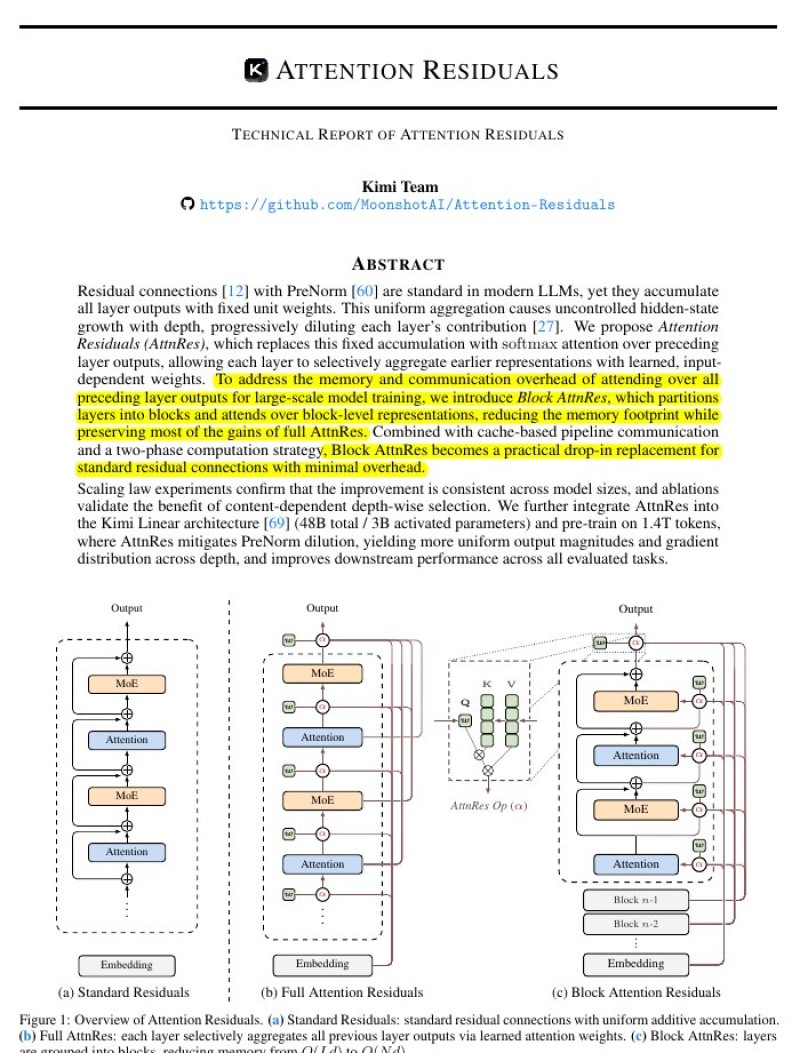

Moonshot AI's technical report addresses this directly. Attention Residuals replace fixed accumulation with a learned, attention-based mechanism that lets each layer dynamically retrieve the most relevant past representations.

By replacing fixed accumulation with dynamic selection, the approach addresses a core limitation in deep neural networks and supports more complex reasoning tasks.

Rather than inheriting everything from every prior layer equally, the model selectively recovers the signals that matter, preventing critical early information from being buried under later noise.

Block Attention Residuals Cut Memory Costs Without Sacrificing Performance

Attending over all previous layers simultaneously comes with a steep memory cost. To solve this, Moonshot AI introduced Block Attention Residuals, which group layers into blocks and apply attention at the block level rather than across every individual layer. This reduces memory overhead while preserving most of the performance gains from full attention-based aggregation. The method has been integrated into the Kimi Linear architecture, where models with tens of billions of parameters showed measurable improvements in output stability and gradient distribution. This builds on Moonshot AI's broader push in adaptive AI, including the multi-agent capabilities demonstrated in Kimi K2.5, which runs multiple AI agents at once.

The broader significance of this work ties into rapid shifts across the industry. AI-adjacent roles most exposed to language models grew 93% since the launch of ChatGPT, reflecting the expanding real-world demand for more capable and reliable LLM infrastructure. Attention Residuals represent exactly the kind of architectural improvement that makes such scaling sustainable.

Eseandre Mordi

Eseandre Mordi

Eseandre Mordi

Eseandre Mordi