⬤ Researchers from Renmin University, Alibaba's Tongyi Lab, and OpenRLHF have introduced IterResearch, a framework built to fix a persistent problem: AI agents losing focus during extended, multi-step tasks. Instead of letting the context window balloon indefinitely, the system applies a dynamic reporting mechanism that continuously summarizes interim findings and keeps only the most critical information in play. Memory design is becoming a key focus in agent research, as explored in "AI agent memory 4-layer infrastructure now drives next-gen systems."

⬤ Traditional long-horizon agents tend to accumulate sprawling context histories that gradually degrade their accuracy. IterResearch replaces that with a living "report" structure, selectively storing condensed insights from prior steps. The result is a system that stays coherent through long workflows without drowning in its own history. Similar gains from structured reasoning are documented in "AI tool verification boosts Qwen and Llama accuracy by 31.6% on hard math benchmarks."
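
The report idea can be pictured as a loop that rebuilds a small workspace each round instead of appending to an ever-growing history. The Python sketch below is a toy illustration only, not the IterResearch implementation: `Workspace`, `run_rounds`, `keep_latest`, and the stub tool are hypothetical names, and simple truncation stands in for the model-written summary a real agent would produce.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class Workspace:
    """Everything the agent sees in one round (hypothetical structure)."""
    question: str
    report: str = ""            # condensed findings carried across rounds
    last_observation: str = ""  # only the most recent tool result


def run_rounds(
    question: str,
    act: Callable[[Workspace], str],
    synthesize: Callable[[str, str], str],
    max_rounds: int,
) -> Tuple[Workspace, List[int]]:
    """Run the loop, rebuilding the workspace each round instead of appending."""
    ws = Workspace(question)
    sizes: List[int] = []
    for _ in range(max_rounds):
        observation = act(ws)
        # Fold the new observation into the report, then drop older raw history.
        ws = Workspace(question, synthesize(ws.report, observation), observation)
        sizes.append(len(ws.report) + len(ws.last_observation))
    return ws, sizes


def make_stub_act() -> Callable[[Workspace], str]:
    """Stand-in for a tool call; returns a numbered fake finding."""
    counter = {"n": 0}

    def act(ws: Workspace) -> str:
        counter["n"] += 1
        return f"finding {counter['n']}"

    return act


def keep_latest(report: str, observation: str, limit: int = 5) -> str:
    """Toy synthesis: keep only the newest `limit` findings.

    A real agent would ask a model to summarize; truncation is a placeholder.
    """
    lines = [line for line in report.split("\n") if line]
    lines.append(observation)
    return "\n".join(lines[-limit:])


if __name__ == "__main__":
    final, sizes = run_rounds("example question", make_stub_act(), keep_latest, 50)
    print(final.report)
    print("largest workspace size:", max(sizes))
```

In this toy version the workspace stops growing after a handful of rounds, however long the loop runs. That bounded size is the property the article attributes to the report structure.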

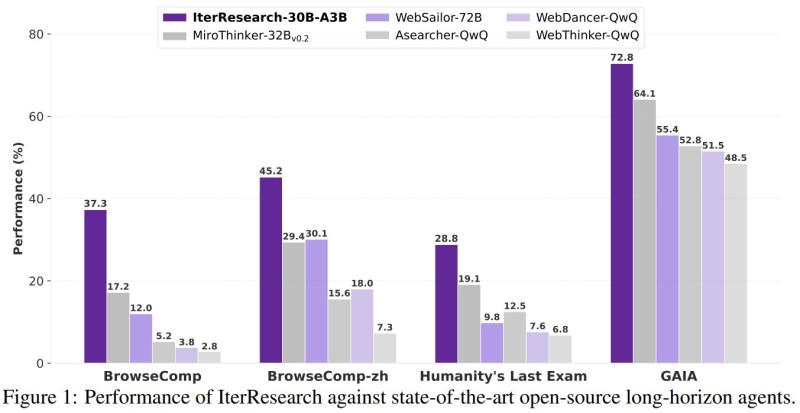

⬤ Benchmark numbers back up the claims. IterResearch-30B-A3B hit 37.3% on BrowseComp, 45.2% on BrowseComp-zh, and 28.8% on Humanity's Last Exam, beating competitors like WebSailor-72B and MiroThinker-32B across the board. On GAIA, it posted 72.8% against MiroThinker-32B's 64.1%. Across six benchmarks, the system averaged a 14.5 percentage-point gain over existing open-source agents.

⬤ The framework scales up to 2,048 interactions, one of the longest reported horizons for any AI research agent. These results reflect a broader industry shift toward structured agent architectures built for long-term reasoning, a trend also tracked in "Google launches 24/7 AI memory agent, Bloomberg reports shift in agent architecture."

Eseandre Mordi
