AI agent memory is no longer just about storing data. Developers are rethinking how large language models manage information across sessions - and the architecture behind it is far deeper than most realize.

The Memory Iceberg Most Developers Miss

What many AI systems label as "memory" today is, in practice, little more than chat logs, embeddings, or extended context windows. Real memory, as increasingly discussed across the developer community, should function as a lifecycle system - one that decides what to keep, compress, forget, or govern over time.
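To make the lifecycle idea concrete, here is a minimal sketch of a policy that assigns each stored item one of three actions. Everything in it is an assumption made for illustration: the `MemoryItem` fields, the `importance` score, the thresholds, and the 90-day retention limit do not come from any particular memory product.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    KEEP = "keep"
    COMPRESS = "compress"
    FORGET = "forget"


@dataclass
class MemoryItem:
    text: str
    age_days: float          # days since the item was stored
    importance: float        # hypothetical 0..1 score assigned upstream
    sensitive: bool = False  # governance flag, e.g. personal data


def decide(item: MemoryItem, retention_days: float = 90.0) -> Action:
    """Pick a lifecycle action for one item using illustrative thresholds."""
    # Governance comes first: sensitive items past the retention limit
    # are dropped no matter how important they look.
    if item.sensitive and item.age_days > retention_days:
        return Action.FORGET
    if item.importance >= 0.7:
        return Action.KEEP
    if item.importance >= 0.3:
        # Mid-value items would be summarized rather than stored verbatim.
        return Action.COMPRESS
    return Action.FORGET
```

A plain chat log has no equivalent of `decide`: it keeps everything, which is the gap between storage and memory described above.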

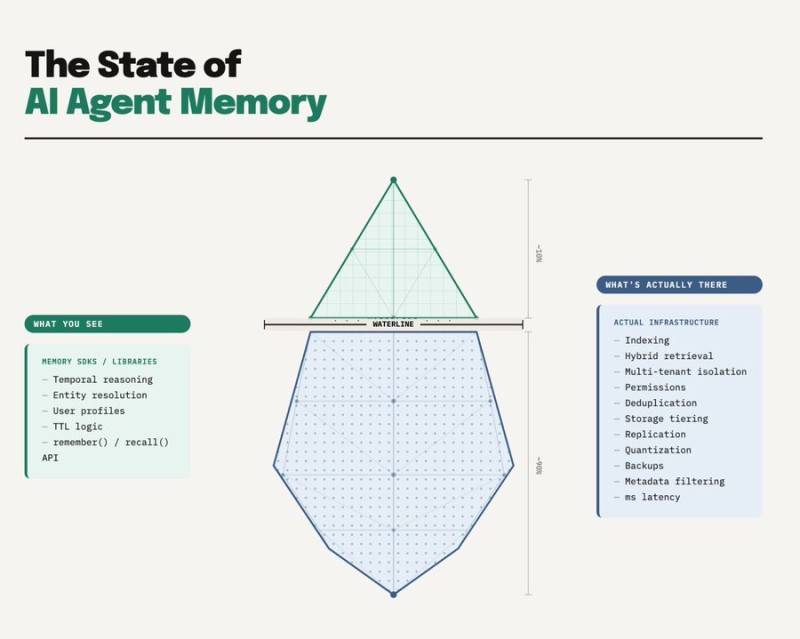

The so-called "AI agent memory iceberg" makes this gap visible. On the surface sit familiar tools: memory SDKs, user profiles, and simple functions like remember or recall. Beneath that layer lies a far larger stack covering indexing, hybrid retrieval, metadata filtering, replication, storage tiering, and latency optimization. Storing large volumes of data and actively maintaining usable memory are two very different problems.
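Two of the below-the-surface pieces, hybrid retrieval and metadata filtering, can be sketched in a few lines of plain Python. This is a toy under stated assumptions: records are dictionaries with hypothetical `user_id`, `text`, and `vec` keys, the vectors are hand-written rather than produced by an embedding model, and the `alpha` weighting is an arbitrary choice. A production system would use a real index instead of a linear scan.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def keyword_score(query, text):
    """Fraction of query words that also appear in the text."""
    q = set(query.lower().split())
    t = set(text.lower().split())
    return len(q & t) / len(q) if q else 0.0


def hybrid_search(query, query_vec, records, user_id, alpha=0.5, top_k=2):
    """Filter by metadata, then rank by a blend of vector and keyword scores."""
    # Metadata filter: only this user's memories are eligible.
    candidates = [r for r in records if r["user_id"] == user_id]
    scored = [
        (alpha * cosine(query_vec, r["vec"])
         + (1 - alpha) * keyword_score(query, r["text"]), r["text"])
        for r in candidates
    ]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [text for _, text in scored[:top_k]]
```

The filter runs before scoring so that another user's memories can never be ranked into the result, however similar they are to the query.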

Short-Term vs. Long-Term: How Major AI Architectures Handle It

In AI systems deployed by major technology companies - including those in the broader ecosystem around - memory is typically split into two layers. Short-term memory covers the active context window: recent conversation turns, retrieved documents, tool outputs. Long-term memory refers to persistent state that survives across sessions, enabling personalization and workflow continuity.
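The two-layer split can be illustrated with a small sketch. The class name `AgentMemory`, its methods, and the use of an in-memory dictionary as the persistent store are all assumptions made for the example. A real deployment would back the long-term layer with a database.

```python
from collections import deque


class AgentMemory:
    """Toy two-layer memory: a bounded window plus a persistent store."""

    def __init__(self, long_term=None, window=3):
        # Short-term: only the most recent `window` items survive.
        self.short_term = deque(maxlen=window)
        # Long-term: a store that outlives any single session object.
        self.long_term = long_term if long_term is not None else {}

    def observe(self, turn):
        """Add a conversation turn, document, or tool output to the window."""
        self.short_term.append(turn)

    def remember(self, key, value):
        """Persist a fact across sessions."""
        self.long_term[key] = value

    def build_context(self):
        """Combine persistent facts with the recent window for a prompt."""
        facts = [f"{k}: {v}" for k, v in sorted(self.long_term.items())]
        return facts + list(self.short_term)
```

Creating a second `AgentMemory` over the same store models a new session: the short-term window starts empty, while facts saved with `remember` are still available.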

The next evolution involves shifting from passive storage toward active memory curation. The related article "AI Memory Evolution: 10x Efficiency Gains as RAG Systems Become Obsolete" examines how smarter memory architectures could dramatically boost efficiency in agent systems - signaling that retrieval-augmented generation as we know it may already be approaching its limits.

As AI agents move deeper into real-world workflows, memory layers will need to offer the same operational guarantees as database systems: consistency, reliability, and governance. These requirements are increasingly tied to safety standards across the industry, including frameworks discussed in "OpenAI and Anthropic Release Multilayer Safety Framework for Enterprise AI Agents", which highlights how enterprise-grade AI is evolving toward structured infrastructure and governance models.

Memory is becoming a core infrastructure layer - not an add-on feature. The AI systems that get this right will define what autonomous agents can actually do.

Usman Salis
