Researchers from Stanford University and the University of Munich have built a new learning framework that could meaningfully change how large language models are trained. The study, titled Tool Verification for Test-Time Reinforcement Learning, tackles one of the core weaknesses in modern AI systems: models that confidently reinforce wrong answers simply because they repeat them often enough.

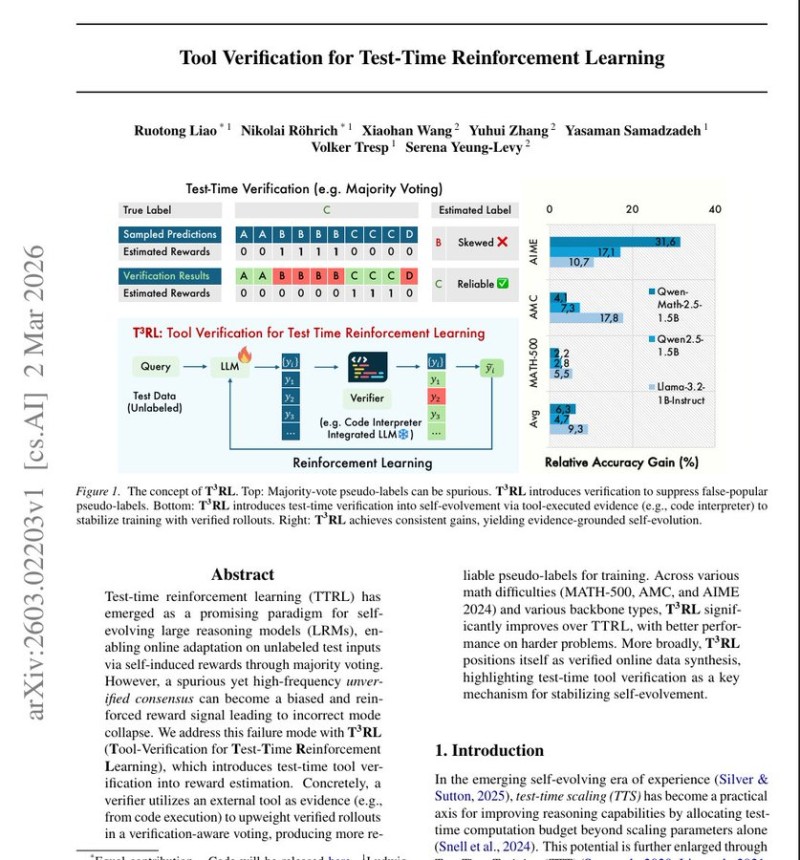

The problem stems from "majority voting," a widely used technique where a model generates multiple answers and defaults to whichever comes up most frequently. That works when the model is mostly right - but when it has a consistent blind spot, repetition only deepens the error. The new framework adds a verification layer: a secondary AI system writes a small program to test the logic behind each response before that response gets reinforced during training. If the code confirms the reasoning holds, that answer earns stronger reinforcement. If not, it gets filtered out.

31.6% Accuracy Gains on AIME, AMC, and MATH-500

The team tested the approach on Alibaba's Qwen and Meta's Llama reasoning models, running experiments across three challenging math benchmarks: AIME, AMC, and MATH-500. Qwen, released by Alibaba Cloud in 2023, is built specifically for reasoning, coding, and multilingual tasks. Results showed relative accuracy gains of up to 31.6% on the hardest problems - a gap wide enough to matter in production environments where precision is non-negotiable.

Verification-Based Learning Is Becoming a Standard Technique

The findings fit into a broader shift happening across AI research. Reasoning-focused architectures are consistently outperforming traditional language models on complex multi-step tasks, especially in math and formal logic. Related work includes Qwen's Siamesenorm 13B model, which demonstrated significant training improvements through architectural changes. Together, these developments suggest that tool-assisted verification and structured reinforcement are becoming the baseline, not the exception, for next-generation AI reliability.

Peter Smith

Peter Smith

Peter Smith

Peter Smith