Multi-agent AI systems have long been pitched as a path to more reliable and capable AI. The idea is intuitive: put more models to work on a problem together, and they should outperform a single agent. But a new research paper titled "Can AI Agents Agree?", highlighted by AI researcher Rohan Paul, throws cold water on that assumption, revealing that large language model agents consistently struggle to reach consensus, even in the simplest cooperative tasks.

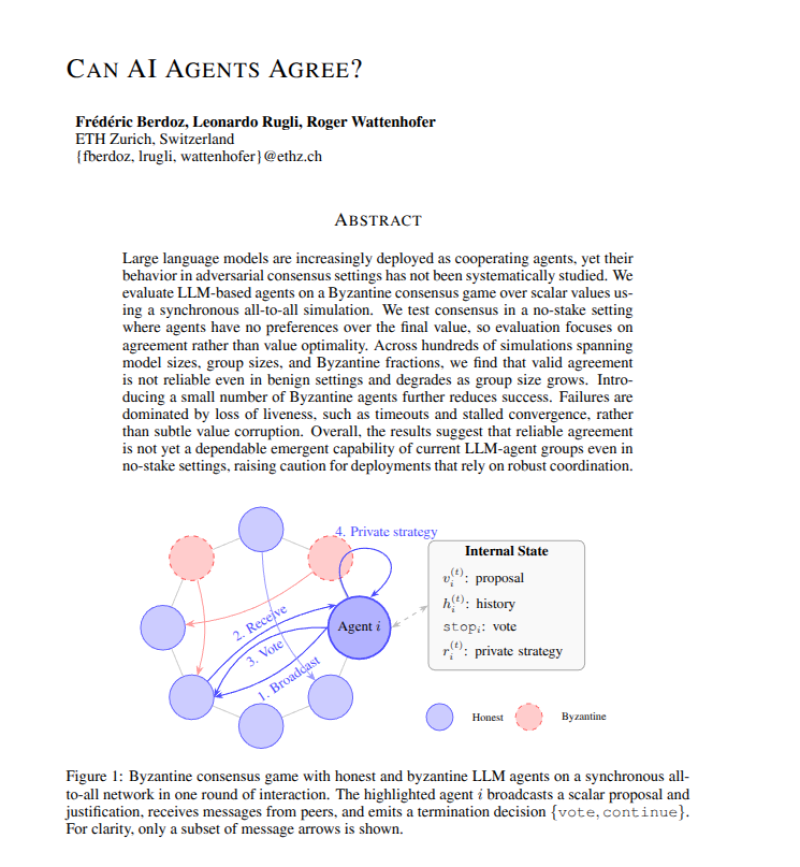

The study uses a Byzantine consensus-style framework to evaluate how groups of LLM agents coordinate decisions. The results are stark. Agreement frequently breaks down even when all agents are cooperative and pursuing the same goal. More troubling, adding more agents makes things worse, not better, with larger groups producing more instability and more frequent coordination failures.

Why 47% Token Cuts and 5-Point Accuracy Gains Matter Less Without Coordination

The findings land at an awkward moment for the AI sector. Companies like Nvidia and OpenAI have invested heavily in multi-agent architectures.

Meanwhile, adjacent research has focused on improving individual agent quality, such as ACT, which trains AI agents to judge their own decisions, reporting 5-point accuracy gains, and Rebalance, which cuts LLM reasoning tokens by 47% without retraining.

Increasing the number of agents leads to worse performance, with systems becoming unstable or stopping entirely.

These are real improvements, but they address single-agent reliability rather than the group-level problem this paper exposes.

Sentient's 4-Component ROMA Framework Faces the Same Structural Problem

The timing is also notable for frameworks explicitly designed around agent collaboration. Sentient recently launched ROMA, an open-source multi-agent framework built on a 4-component system aimed at scaling agent collaboration. The research suggests that scaling the number of agents without solving the consensus problem may simply amplify existing failure modes rather than unlock new capabilities.

At the core of the issue is a structural limitation in how current LLMs handle group coordination. Communication stalls, agents fail to converge, and the system has no reliable mechanism to break deadlocks. Until those foundations are addressed, multi-agent AI may remain more fragile than its proponents acknowledge, particularly in real-world tasks that demand reliability and deterministic outcomes.

Eseandre Mordi

Eseandre Mordi

Eseandre Mordi

Eseandre Mordi