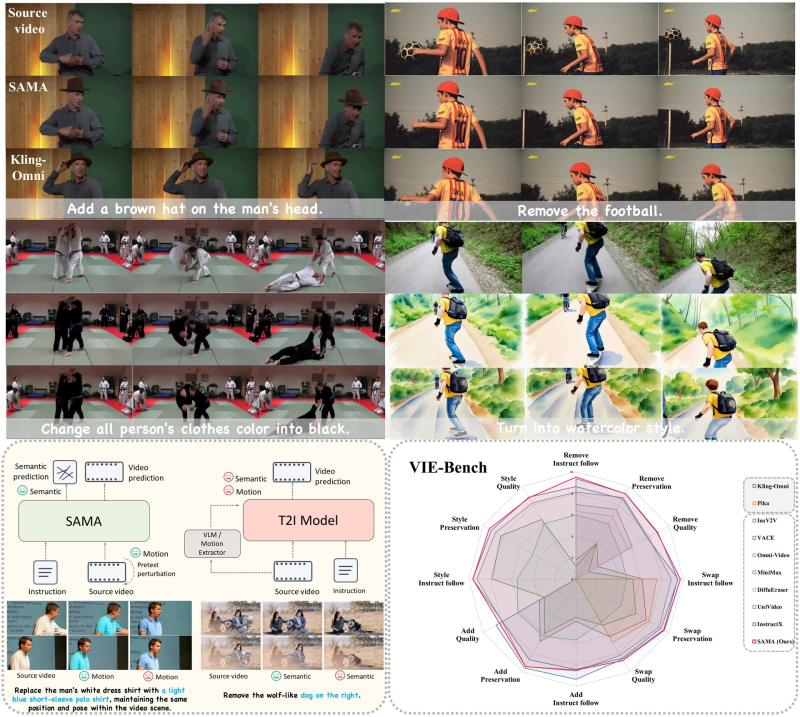

Baidu has quietly made a significant move in the open-source AI space. The company released SAMA on Hugging Face, an instruction-guided video editing model that lets users modify video content using plain natural language. It combines semantic understanding with motion consistency, and its results are turning heads across the research community.

How SAMA's Architecture Separates Semantics from Motion

What makes SAMA different from older video editing pipelines is its factorized design. The model splits the editing process into two distinct stages: semantic anchoring and motion alignment. Semantic anchoring handles how instructions map to visual changes, applying edits consistently across a scene.

Motion alignment then keeps those edits stable frame by frame, preventing the flickering or drift that plagued earlier approaches. The division of labor echoes a broader pattern in which multi-agent AI systems with specialized roles are outperforming single models, and SAMA follows the same architectural logic. Although the two stages are distinct, a unified learning pipeline handles structure and motion together, giving the model an edge in both visual accuracy and temporal coherence.
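
To make "flickering" concrete, here is a minimal sketch of a toy flicker score: the average per-pixel change between consecutive frames of a clip. It is an illustration only, not SAMA's method or a VIE-Bench metric, and the names `Frame`, `mean_abs_diff`, and `flicker_score` are hypothetical. It also assumes a static scene; a practical measure would first compensate for real motion, since this one penalizes legitimate movement as well.

```python
# Toy illustration only: not SAMA's method and not a VIE-Bench metric.
from typing import List

# A grayscale frame as rows of pixel intensities in [0, 1] (assumed format).
Frame = List[List[float]]


def mean_abs_diff(a: Frame, b: Frame) -> float:
    """Average absolute per-pixel difference between two same-sized frames."""
    total = 0.0
    count = 0
    for row_a, row_b in zip(a, b):
        for pa, pb in zip(row_a, row_b):
            total += abs(pa - pb)
            count += 1
    return total / count


def flicker_score(frames: List[Frame]) -> float:
    """Mean frame-to-frame change across a clip; lower means steadier."""
    if len(frames) < 2:
        return 0.0
    diffs = [mean_abs_diff(f0, f1) for f0, f1 in zip(frames, frames[1:])]
    return sum(diffs) / len(diffs)


# A clip whose edit holds steady, and one whose brightness alternates.
steady = [[[0.5, 0.5], [0.5, 0.5]] for _ in range(4)]
flickering = [[[0.5 + 0.25 * (i % 2)] * 2] * 2 for i in range(4)]

print(flicker_score(steady))      # 0.0
print(flicker_score(flickering))  # 0.25
```

A steady edit scores near zero on a static scene, while an edit that changes appearance from frame to frame scores higher. That instability is what the motion alignment stage is described as preventing.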

VIE-Bench Results Show SAMA Competitive with Commercial Models

On the VIE-Bench evaluation framework, SAMA scores at the top of the open-source category across instruction following, style preservation, and visual quality metrics. More striking is how close it gets to commercial systems like Kling-Omni, a proprietary model with significantly more resources behind it. The gap is narrowing fast, and much of that is down to how efficiently open-source teams are navigating the growing AI energy and compute demands that are crashing into Earth's 100-energy wall.

SAMA's release fits into a wider wave of instruction-guided multimodal models pushing perception and generation closer together. As video AI scales, it draws on the same infrastructure momentum behind efforts like OpenAI's $500B Stargate project, which is driving the US AI data center boom. For now, SAMA stands as one of the clearest examples of open-source models closing the gap with commercial-grade video editing, and it is available on Hugging Face for developers to test today.

Eseandre Mordi
