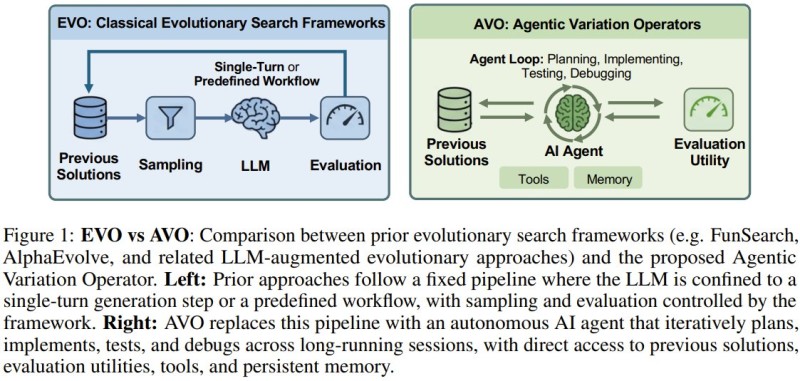

⬤ NVIDIA has introduced Agentic Variation Operators (AVO), an AI-driven framework that lets autonomous agents iteratively optimize code without fixed workflows. The system creates adaptive loops where AI can plan, test, debug, and refine implementations over time - a direct contrast to traditional single-pass evolutionary pipelines shown in the accompanying diagram.

⬤ The structural difference is clear: legacy frameworks rely on predefined sequences where LLMs operate in a single turn, while AVO introduces a persistent agent loop with memory, tools, and continuous feedback. Agents dynamically propose, repair, critique, and verify code changes - reflecting NVIDIA's broader push toward full-stack AI infrastructure where software increasingly complements its hardware dominance.
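
To make the contrast concrete, here is a toy sketch of a single-turn variation step next to a persistent loop that keeps memory, verifies, and repairs. It is not NVIDIA's implementation. Every name in it (`propose`, `verify`, `repair`, `benchmark`, `AgentState`, the tile-size search space) is a hypothetical stand-in, and the "proposal" is a fixed rule rather than an LLM call.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Set, Tuple

STEP = 12  # assumption: deliberately not a multiple of 8, so raw proposals fail verification


def verify(tile: int) -> bool:
    """Hypothetical correctness check: tile size must be a positive multiple of 8."""
    return tile > 0 and tile % 8 == 0


def benchmark(tile: int) -> float:
    """Hypothetical performance score that peaks at a tile size of 64."""
    return -abs(tile - 64)


def repair(tile: int) -> int:
    """Hypothetical repair tool: round a tile size up to the next multiple of 8."""
    return ((tile + 7) // 8) * 8


def propose(best: int, tried: Set[int]) -> Optional[int]:
    """Stand-in for an LLM proposal: step up or down from the best candidate,
    skipping anything already recorded as tried."""
    for cand in (best + STEP, best - STEP):
        if cand > 0 and cand not in tried:
            return cand
    return None


def single_pass(start: int) -> int:
    """Single-turn variation: one proposal, no repair, no memory."""
    cand = propose(start, set())
    if cand is not None and verify(cand) and benchmark(cand) > benchmark(start):
        return cand
    return start


@dataclass
class AgentState:
    best: int
    tried: Set[int] = field(default_factory=set)
    # Each memory entry: (raw proposal, repaired candidate, score, accepted)
    memory: List[Tuple[int, int, float, bool]] = field(default_factory=list)


def agent_loop(start: int, max_steps: int = 10) -> AgentState:
    """Persistent loop: propose, verify, repair on failure, critique by score, remember."""
    state = AgentState(best=start)
    for _ in range(max_steps):
        cand = propose(state.best, state.tried)
        if cand is None:  # nothing new left to try
            break
        state.tried.add(cand)
        fixed = cand if verify(cand) else repair(cand)
        if not verify(fixed):  # repair did not help; skip this candidate
            continue
        s = benchmark(fixed)
        accepted = s > benchmark(state.best)
        state.memory.append((cand, fixed, s, accepted))
        if accepted:
            state.best = fixed
    return state


if __name__ == "__main__":
    print("single pass:", single_pass(16))
    print("agent loop: ", agent_loop(16).best)
```

In this toy setup the single-pass version discards its only proposal because it fails verification, while the loop repairs each failed proposal, keeps the improvements, and stops once its memory shows that no untried neighbour remains.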

⬤ Kernel-level performance gains of this kind matter in high-performance AI workloads, where even incremental efficiency improvements reduce computational cost and latency at scale. The results also extend to grouped-query attention, suggesting broader applicability across modern transformer architectures.

⬤ The timing is notable. With hyperscaler AI spending approaching $500B, the pressure to extract maximum performance from existing hardware is only growing. AVO positions NVIDIA at the optimization layer above silicon - where self-improving agents reduce the need for manual kernel engineering.

⬤ Google's Gemini 3.1 Pro powering 1-million-token workflows shows a parallel trend: agentic, long-context AI systems are becoming the new baseline across the industry, not the exception.

Eseandre Mordi
