⬤ A new research paper, Memento-Skills: Let Agents Design Agents, introduces a generalist AI system that autonomously builds and improves task-specific agents through experience. Developed by the Memento-Team and reported by DailyPapers, the framework enables continual learning without updating large language model parameters - a meaningful shift toward more adaptive AI architectures.

⬤ The system runs on a memory-based reinforcement learning framework in which reusable skills are stored as structured knowledge and evolve across interactions. A read-write learning cycle selects relevant skills and updates them from new experience, with no retraining of the core model. This mirrors the approach covered in "OpenClawRL trains AI agents from live conversations": agents improve through live conversations rather than static datasets, with real-time feedback loops driving measurable gains.
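To make the read-write cycle concrete, here is a minimal sketch in Python. It is not the Memento-Skills implementation: the `Skill` and `SkillMemory` classes, the keyword-overlap scoring, and the smoothed success rate are all assumptions chosen for illustration. The only idea taken from the paper's description is that skills live in an external memory that is read before a task and written after it, while the language model itself stays frozen.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Skill:
    """A reusable skill stored as structured knowledge (hypothetical schema)."""
    name: str
    keywords: set[str]
    instructions: str
    uses: int = 0
    successes: int = 0

    @property
    def success_rate(self) -> float:
        # Smoothed estimate so an untried skill starts at 0.5 (an assumption).
        return (self.successes + 1) / (self.uses + 2)


@dataclass
class SkillMemory:
    """External memory: the model's weights are never touched."""
    skills: dict[str, Skill] = field(default_factory=dict)

    def read(self, task: str) -> Skill | None:
        """Read step: pick the stored skill that best matches the task."""
        words = set(task.lower().split())
        best, best_score = None, 0.0
        for skill in self.skills.values():
            score = len(words & skill.keywords) * skill.success_rate
            if score > best_score:
                best, best_score = skill, score
        return best

    def write(self, skill: Skill, succeeded: bool) -> None:
        """Write step: store the skill if new, then record the outcome."""
        stored = self.skills.setdefault(skill.name, skill)
        stored.uses += 1
        stored.successes += int(succeeded)


memory = SkillMemory()
memory.write(Skill("web_search", {"search", "find", "lookup"}, "Query a search tool."), succeeded=True)
memory.write(Skill("table_math", {"sum", "average", "table"}, "Load the table and compute."), succeeded=False)

chosen = memory.read("find the release date")
print(chosen.name if chosen else "no matching skill")
```

In this toy version, experience changes only the counters inside the memory, so later reads favor skills that have worked before. A real system would presumably also rewrite the skill contents themselves, which this sketch leaves out.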

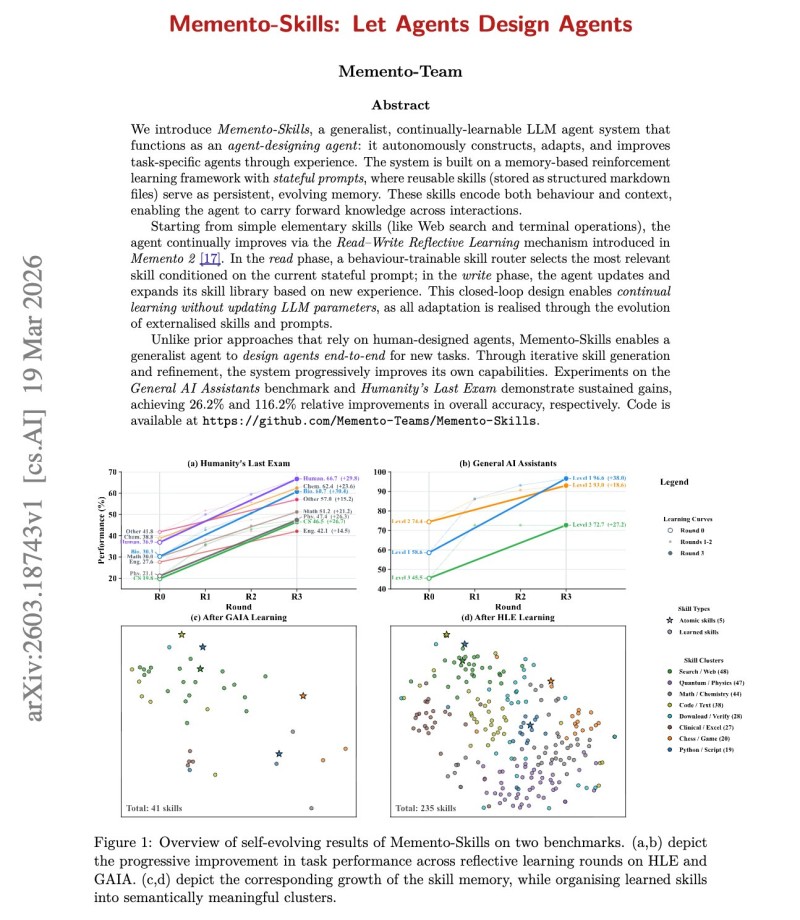

⬤ Benchmark results are hard to ignore: a 26.2% relative improvement on GAIA and a 116.2% jump on Humanity's Last Exam. Skill clusters expanded measurably over time, suggesting that iterative learning compounds. These numbers echo themes from "AI progress accelerates as models now complete multi-hour complex tasks in record time", and align with "The agent world model points to the next AI architecture shift", where structured memory and iteration are becoming the standard rather than the exception.
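A relative improvement is measured against the baseline score, not in percentage points, so a 116.2% gain means the score slightly more than doubled. The short Python sketch below shows the arithmetic, assuming both reported figures are relative gains. The baseline of 20.0 is a placeholder for illustration only and is not a number from the paper.

```python
def relative_improvement(baseline: float, new: float) -> float:
    """Percent change of `new` measured against `baseline`."""
    if baseline == 0:
        raise ValueError("baseline must be non-zero")
    return (new - baseline) / baseline * 100.0


def implied_score(baseline: float, relative_gain_pct: float) -> float:
    """Score implied by a baseline and a relative gain in percent."""
    return baseline * (1.0 + relative_gain_pct / 100.0)


# Placeholder baseline, NOT taken from the paper.
hypothetical_baseline = 20.0

gaia_like = implied_score(hypothetical_baseline, 26.2)
hle_like = implied_score(hypothetical_baseline, 116.2)

print(f"+26.2% relative:  {hypothetical_baseline} -> {gaia_like:.2f}")
print(f"+116.2% relative: {hypothetical_baseline} -> {hle_like:.2f}")
```

The same relative gain can correspond to very different absolute gains depending on where the baseline sits, which is worth keeping in mind when comparing the two benchmarks.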

⬤ Memento-Skills signals a broader move toward self-evolving AI that learns through experience rather than periodic retraining. Persistent memory, modular skills, and adaptive loops could redefine how AI agents operate in environments requiring long-term flexibility and continuous performance growth.

Victoria Bazir
