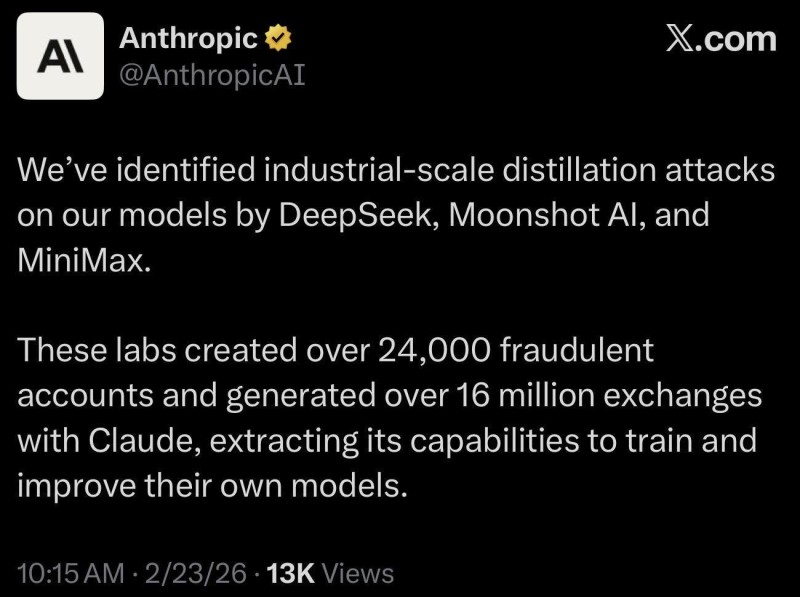

⬤ Anthropic has gone public with a serious accusation against three rival AI labs — DeepSeek, Moonshot AI, and MiniMax. The company claims these firms generated more than 16 million prompts through over 24,000 fraudulent accounts, using Claude's responses to train and sharpen their own models. Anthropic isn't just treating this as an IP dispute — it's also framing it as a potential national security issue, given that extracted AI capabilities could end up feeding into foreign military or surveillance systems.

⬤ The breakdown is striking: MiniMax allegedly accounted for around 13 million interactions, Moonshot AI for roughly 3.4 million, and DeepSeek for about 150,000. Anthropic calls this "industrial-scale distillation," meaning the use of one model's outputs to train another. Distillation itself isn't new in machine learning, but the argument here is about scale and deception: fake accounts, repeated queries, and what Anthropic describes as a clear intent to sidestep normal usage terms. DeepSeek has also been reporting high performance, with 225k tokens per second on GB300 GPUs, which makes the question of how those gains were achieved all the more pointed.

⬤ The timing adds another layer. The dispute is unfolding at a moment when Anthropic could surpass OpenAI in annualized revenue by mid-2026, a sign of how competitive the field has become. At the same time, the accused labs aren't standing still: Moonshot AI's Kimi K2.5 can now run multiple AI agents simultaneously, a capability that raises its own questions about where these advances are coming from.

⬤ What this case really puts on the table is the question of where competitive AI development ends and IP theft begins. As large language models become core commercial assets, the rules around model outputs, unauthorized access, and cross-border competition are going to need serious work. Anthropic's move could push the broader industry toward clearer standards and more scrutiny around how AI models are accessed and reused.

Victoria Bazir
