⬤ DeepSeek has released a new research paper, "DualPath," focused on improving inference efficiency through changes to KV-cache loading and scheduling. The central claim is that KV-cache loading "does not have to be prefill-centric," which positions DualPath as an infrastructure-level optimization built for agent and reinforcement-learning-style serving. The paper compares several variants, including "Ours," "Ours (basic)," and "Ours (oracle)," against standard baselines.

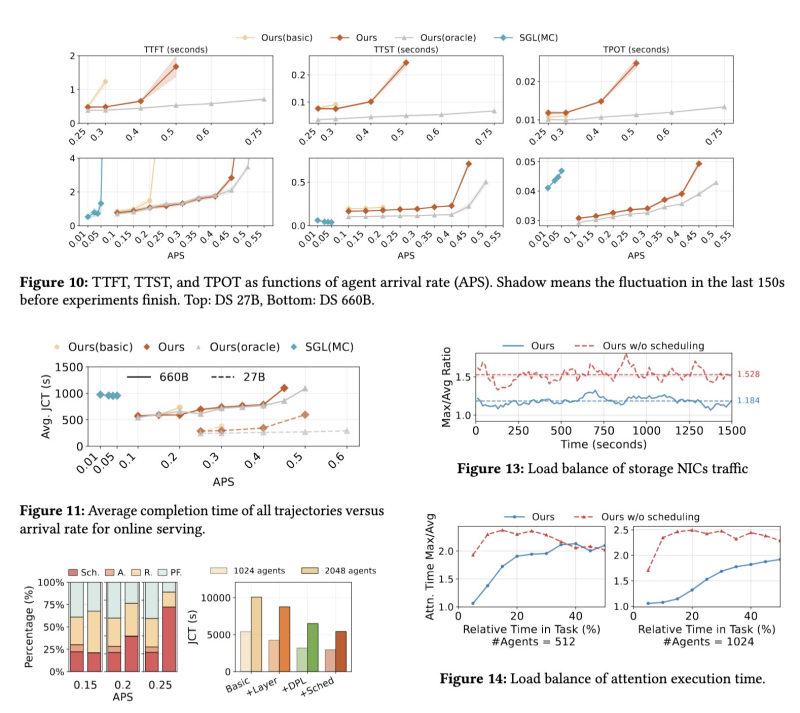

⬤ The evaluation tracks TTFT, TTST, and TPOT latency metrics against agent arrival rate (APS) for two model sizes: DS 27B and DS 660B. Across both, the "Ours" line trends lower than alternatives as load increases. A separate chart tracking average completion time of all trajectories versus arrival rate shows DualPath holding steady while competing methods degrade more sharply at higher APS.
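For readers less familiar with these latency metrics, the sketch below computes two of them from a per-request token trace, using the common definitions: TTFT (time to first token) is the first token's emission time minus the request's arrival time, and TPOT (time per output token) is the average gap between tokens after the first. This is an illustrative assumption, not the paper's measurement code; `RequestTrace`, `ttft`, and `tpot` are hypothetical names, and TTST is omitted because the article does not define it.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class RequestTrace:
    # Hypothetical trace record: arrival time and emission time of each output token (seconds).
    arrival: float
    token_times: List[float]


def ttft(trace: RequestTrace) -> float:
    """Time to first token: first token emission minus request arrival."""
    return trace.token_times[0] - trace.arrival


def tpot(trace: RequestTrace) -> float:
    """Time per output token: mean gap between consecutive tokens after the first."""
    n = len(trace.token_times)
    if n < 2:
        return 0.0  # a single token has no inter-token gap
    return (trace.token_times[-1] - trace.token_times[0]) / (n - 1)


trace = RequestTrace(arrival=0.0, token_times=[0.5, 0.55, 0.60, 0.65])
print(ttft(trace))
print(round(tpot(trace), 3))
```

Under load, queueing delays tend to show up first in TTFT, which is why these curves are plotted against arrival rate.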

⬤ Load-balancing results reinforce the scheduling story. In the storage NIC traffic charts, the scheduled configuration holds a low, stable max-to-average ratio over time, while the unscheduled variant runs higher and is more volatile. Attention execution time plots for 512 and 1024 agents show the same pattern: the scheduled configuration is both lower and more consistent. These results support the paper's reported gains of up to 1.87x higher offline throughput and 1.96x more agent runs per second online.
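The max-to-average ratio is a simple imbalance measure: at each time step, divide the busiest NIC's traffic by the mean traffic across all NICs. A value of 1.0 means perfectly even load, and larger values mean one NIC is a hotspot. The sketch below assumes this standard definition; the paper's exact formula, sampling interval, and units may differ, and `max_to_avg_ratio` and `ratio_series` are hypothetical helpers.

```python
from typing import List, Sequence


def max_to_avg_ratio(loads: Sequence[float]) -> float:
    """Busiest NIC's load divided by the mean load across NICs at one instant."""
    avg = sum(loads) / len(loads)
    if avg == 0:
        return 1.0  # no traffic at all: treat as balanced
    return max(loads) / avg


def ratio_series(samples: Sequence[Sequence[float]]) -> List[float]:
    """One ratio per time step; each row holds per-NIC traffic for that step."""
    return [max_to_avg_ratio(row) for row in samples]


# Illustrative numbers only, not data from the paper.
balanced = [[10, 11, 9, 10], [12, 12, 11, 13]]
skewed = [[30, 5, 3, 2], [4, 25, 6, 5]]
print(ratio_series(balanced))
print(ratio_series(skewed))
```

A scheduler that spreads KV-cache reads evenly keeps this series close to 1.0 and flat, which is the shape the article describes for the scheduled configuration.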

⬤ DualPath matters because it frames inference performance as an infrastructure and scheduling problem, not just a model-architecture question. If these numbers hold across broader deployments, changes to KV-cache loading alone could shift how high-concurrency agent systems are served, especially at arrival rates where latency variability tends to worsen quickly. For more DeepSeek context, see how DeepSeek V3 used MTP for training only, not to speed up inference, and the recent DeepSeek launch of DeepSeekOCR-2 for advanced visual and text reasoning.

Eseandre Mordi
