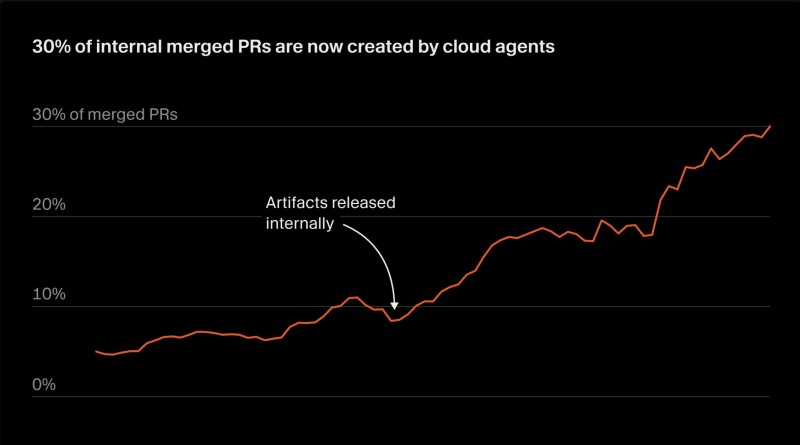

Autonomous AI agents are no longer a side experiment at Cursor. The company has confirmed that cloud agents now account for around 30% of all merged pull requests internally, and the share keeps growing. It's one of the clearest signals yet that AI-driven development has moved from a promising idea into an actual engineering workflow.

What makes this notable isn't just the number but how these agents operate. Each one runs inside its own virtual machine with a full development environment: browsers, desktop apps, and every tool a human developer would use. They can take a task from zero, build the feature, run tests, record validation videos, and submit a production-ready PR without anyone touching the keyboard.

Cloud Agents Cover the Full Software Lifecycle Across 5 Platforms

The scope of what these agents handle is broader than typical automation. Cursor uses them for new feature development, UI workflow validation, security vulnerability reproduction, and quick end-to-end bug fixes. They operate across web, mobile, desktop, Slack, and GitHub, covering the entire software lifecycle rather than isolated tasks. As AI systems move from prediction to action, this kind of multi-platform autonomy is becoming the new standard.

The internal data Cursor shared shows a clear upward trend in agent-generated merged changes over time. There's a visible dip right after certain internal releases, but the overall curve keeps climbing. Handing nearly a third of all merged pull requests to autonomous agents isn't a demo; it's a meaningful operational shift for a team shipping real products.

30% of Merged PRs Is a Signal, Not a Ceiling

The trajectory suggests this number will keep rising. Cursor recently cut agent token usage by 47% with a smarter context system, making these workflows faster and cheaper to run at scale. Anthropic's data, which shows 40% productivity gains on complex tasks, reinforces the efficiency case for AI-driven development.

The broader implication is about how engineering teams are structured. As agents take on more execution, the role of human developers shifts toward direction-setting and output evaluation. That changes how work gets reviewed, how quality is maintained, and ultimately how teams think about what it means to write software together.

Victoria Bazir
