⬤ Researchers from CUHK MMLab, the University of Science and Technology of China, Oxford University, and several partner institutions have introduced VDR-Bench, a new benchmark designed to evaluate how effectively multimodal AI systems search and reason across images and text. The framework, called Vision-DeepResearch Benchmark, was built to address real weaknesses in current visual reasoning tests. The team found that many existing benchmarks allow models to answer questions using textual hints or prior knowledge rather than actually analyzing image content, making their results misleading.

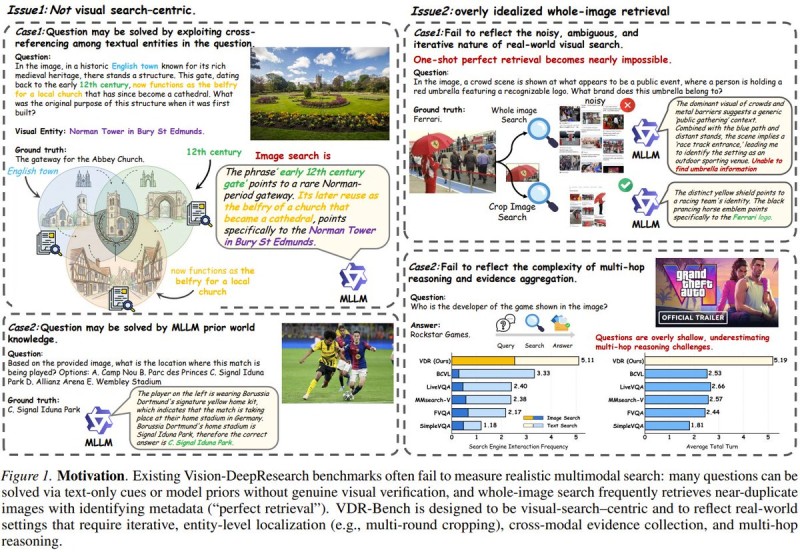

⬤ The study highlights structural flaws in current multimodal evaluation methods. Many benchmarks are not visual search-centric and fail to reflect real-world scenarios where images contain ambiguous or noisy details. Models can often infer correct answers from textual context alone without any meaningful visual verification. To fix this, VDR-Bench introduces tasks that require iterative visual retrieval, forcing models to zoom into specific image regions and analyze particular visual entities. This approach connects to new paradigm for multimodal model messaging using Vision Wormhole architecture, which rethinks how multimodal systems exchange visual information.

⬤ A key innovation in VDR-Bench is its multi-round search strategy. Instead of a single full-image retrieval pass, the system progressively crops and analyzes smaller image regions to uncover details that would otherwise be missed. This multi-step approach significantly improves accuracy on complex visual reasoning tasks requiring multiple pieces of evidence. The method aligns with broader infrastructure advances, including research on AI memory evolution delivering 10x efficiency gains beyond RAG systems, which could further strengthen multimodal reasoning pipelines.

⬤ The research also stresses that realistic visual search often involves multi-hop reasoning and evidence aggregation across both images and text. Identifying a brand logo or determining a video game developer from a screenshot may require combining visual cues with contextual knowledge. Current benchmarks routinely underestimate this complexity. By simulating these scenarios, VDR-Bench aims to better measure what multimodal AI can actually do. These findings emerge as competition among AI developers heats up, with ongoing efforts toward analysis of OpenAI and Anthropic models competing in the AI efficiency race with major token reduction.

⬤ VDR-Bench reflects a broader push within the AI research community to build evaluation frameworks that genuinely mirror real-world multimodal tasks. By requiring deeper visual search and multi-step analysis, the benchmark closes the gap between theoretical model performance and practical AI capabilities.

Victoria Bazir

Victoria Bazir

Victoria Bazir

Victoria Bazir