AI systems are getting smarter - not by retraining from scratch, but by learning from what they have already done. Trace2Skill is a new framework that turns past execution traces into reusable skills, helping models improve performance without touching a single parameter.

What Trace2Skill does and why it matters

A new framework called Trace2Skill has been introduced to improve AI systems through structured skill extraction.

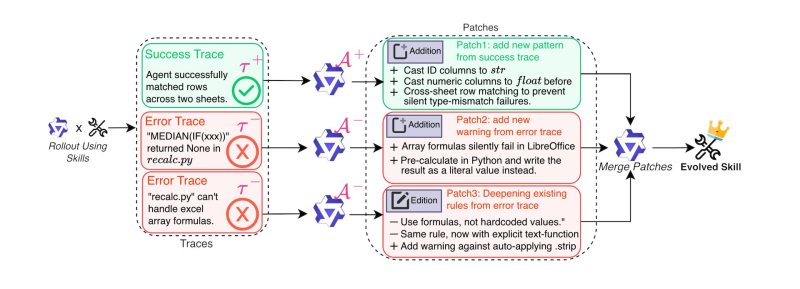

As reported by DailyPapers, Trace2Skill dispatches parallel sub-agents to analyze execution trajectories and distills what they find into transferable, declarative skills that can be reused across tasks. Because observed behavior is turned into structured knowledge rather than weight updates, the model's parameters are never modified.

The system works by examining execution traces generated during task performance. These traces are processed by sub-agents that identify patterns and convert them into reusable skills. The result is a more efficient learning loop: instead of retraining, the model simply draws on a growing library of structured behaviors.
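The loop described above can be illustrated with a small sketch. This is not Trace2Skill's actual implementation, which the report does not detail. The trace format, the `Skill` schema, the `analyze_trace` stand-in for a sub-agent, and the `min_support` threshold are all assumptions made for illustration; a real sub-agent would most likely be a model call rather than a simple filter.

```python
from collections import Counter
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass


@dataclass(frozen=True)
class Skill:
    """Hypothetical declarative skill: when it applies and what to do."""
    trigger: str
    guidance: str
    support: int  # number of traces in which the pattern was observed


def analyze_trace(trace: dict) -> list[tuple[str, str]]:
    """Stand-in for one sub-agent (assumption): keep (task_type, step)
    pairs from successful traces and ignore failed ones."""
    if not trace["success"]:
        return []
    return [(trace["task_type"], step) for step in trace["steps"]]


def distill(traces: list[dict], min_support: int = 2, workers: int = 4) -> list[Skill]:
    """Analyze traces in parallel, then keep patterns that appear in at
    least `min_support` distinct traces."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        per_trace = list(pool.map(analyze_trace, traces))
    # set() ensures a pattern is counted at most once per trace.
    counts = Counter(p for patterns in per_trace for p in set(patterns))
    return [
        Skill(trigger=task, guidance=step, support=n)
        for (task, step), n in sorted(counts.items())
        if n >= min_support
    ]


# Toy traces, invented for this example.
traces = [
    {"task_type": "sql", "steps": ["inspect schema", "write query"], "success": True},
    {"task_type": "sql", "steps": ["inspect schema", "run query"], "success": True},
    {"task_type": "sql", "steps": ["guess column names"], "success": False},
]
skills = distill(traces)
```

With these toy traces, only the step shared by both successful runs clears the support threshold, so the resulting library holds a single skill. No model weights are involved at any point; the only thing that grows is the list of skills.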

Trace2Skill's core mechanism: from traces to transferable skills

The key innovation lies in distilling execution trajectories into declarative representations that travel across tasks. Parallel sub-agents make that extraction efficient, and parallel analysis of this kind is gaining traction across the AI space, a pattern reflected in developments like the TrustSQL breakthrough that signals a shift in AI data systems. Once captured, the skills become reusable assets: the model improves its outcomes through skill reuse rather than additional training rounds.

This represents a meaningful shift in how agent-based systems accumulate capability. Rather than being encoded in weights, knowledge lives in a structured, inspectable skill library that can be queried, updated, and shared across model instances.
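To make "queried, updated, and shared" concrete, here is a minimal sketch of what such a library could look like. The `SkillEntry` fields, the JSON format, and the `as_prompt` helper are hypothetical and not taken from Trace2Skill itself. The sketch assumes that skills reach the model as plain text in its context, which is one plausible way to reuse them without changing parameters.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class SkillEntry:
    """Hypothetical declarative skill record."""
    name: str
    applies_to: list[str]  # task tags the skill is relevant to
    guidance: str          # human-readable instruction


@dataclass
class SkillLibrary:
    entries: dict[str, SkillEntry] = field(default_factory=dict)

    def upsert(self, entry: SkillEntry) -> None:
        """Add a skill, or replace the existing one with the same name."""
        self.entries[entry.name] = entry

    def query(self, task_tag: str) -> list[SkillEntry]:
        """Return the skills tagged as relevant to `task_tag`."""
        return [e for e in self.entries.values() if task_tag in e.applies_to]

    def to_json(self) -> str:
        """Serialize to plain JSON so the library can be inspected or shared."""
        return json.dumps([asdict(e) for e in self.entries.values()], indent=2)

    @classmethod
    def from_json(cls, text: str) -> "SkillLibrary":
        lib = cls()
        for raw in json.loads(text):
            lib.upsert(SkillEntry(**raw))
        return lib

    def as_prompt(self, task_tag: str) -> str:
        """Render matching skills as text to prepend to a model's context."""
        lines = [f"- {e.name}: {e.guidance}" for e in self.query(task_tag)]
        if not lines:
            return ""
        return "Relevant skills:\n" + "\n".join(lines)


lib = SkillLibrary()
lib.upsert(SkillEntry("check-schema-first", ["sql"],
                      "Inspect the table schema before writing a query."))
lib.upsert(SkillEntry("small-diffs", ["code-edit"],
                      "Prefer minimal edits and re-run tests."))

# A second model instance could load the same library from the shared JSON.
shared = SkillLibrary.from_json(lib.to_json())
prompt = shared.as_prompt("sql")
```

Because the library is ordinary data, it can be reviewed by a person, versioned, or corrected by hand, which is not practical for knowledge stored in weights.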

Key properties of the framework include:

- Parallel sub-agent dispatch for trace analysis

- Declarative skill representation derived from execution trajectories

- Cross-task skill transfer without parameter modification

- Compatibility with existing agent-based architectures

Trace2Skill fits a broader AI efficiency trend in 2025

The introduction of Trace2Skill reflects a growing focus on doing more with less as computational costs continue to climb. This aligns with a wider push toward agent architectures that accumulate competence through experience, visible in results like AI agents beating cuDNN by 35% in NVIDIA's AVO framework. As demand for scalable AI infrastructure grows, approaches that improve performance without additional training may influence how future AI systems are designed and deployed.

The economics of retraining large models are increasingly difficult to justify for incremental gains. With AI data center demand projected to hit 219 GW by 2030, the case for efficiency-first approaches like Trace2Skill becomes harder to ignore. Treat past execution as curriculum, and let skills do the compounding.

Victoria Bazir
