Researchers from Stanford University and Tsinghua University have introduced VLAW, a new training framework that significantly improves how robots learn complex manipulation tasks. By combining real-world robotic data with AI-generated simulations in a continuous feedback loop, the system achieved a 39.2% absolute improvement in task success rates over baseline models. The broader context is the rapid rise of Vision-Language-Action (VLA) models, architectures that enable robots to interpret visual inputs and natural language instructions before executing real-world actions, as covered in "8 Vision-Language-Action models shaping embodied AI research".

How VLAW Closes the Simulation-to-Reality Gap

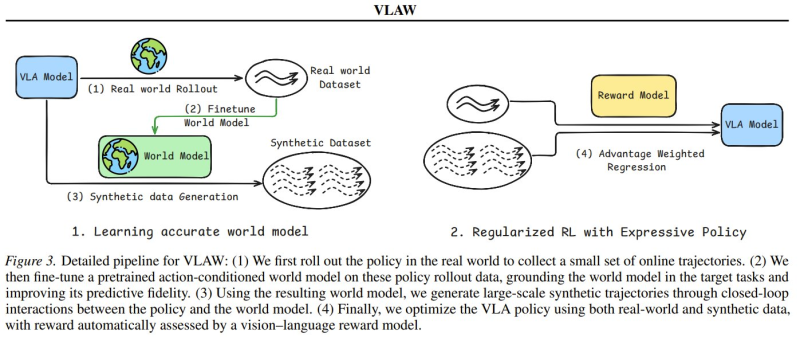

The VLAW pipeline starts with a small batch of real-world robot trajectory data collected via a VLA policy. That data fine-tunes a predictive world model, training the simulator to capture subtle physical interactions that conventional environments consistently miss.

Once refined, the world model generates large-scale synthetic trajectories, letting robots rehearse tasks in simulation before transferring skills to the real world. This directly tackles a long-standing problem in robotics: models trained purely in simulation tend to fall apart when exposed to real-world physics.

Advantage-Weighted Regression Drives Iterative Improvement

During training, a vision-language reward model scores every generated trajectory, and the policy is optimized with advantage-weighted regression. This allows the robot policy to improve across iterations while keeping expensive real-world testing to a minimum. The idea of fusing world models with AI agents connects to a wider architectural shift, examined in detail in "Agent world model points to next AI architecture shift".
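To make the advantage-weighted regression step more concrete, here is a minimal sketch of the general technique, not the VLAW authors' implementation. It assumes each synthetic trajectory has a scalar reward from the reward model, uses the batch mean reward as a stand-in baseline (standard formulations typically use a learned value function), and uses a squared-error imitation loss. The function names, the temperature `beta`, and the clipping value `max_weight` are all hypothetical choices for illustration.

```python
import math


def awr_weights(rewards, beta=1.0, max_weight=20.0):
    """Turn per-trajectory rewards into advantage-based imitation weights.

    Assumption: the advantage is reward minus the batch mean reward.
    Weights are exp(advantage / beta), clipped at max_weight for stability.
    """
    baseline = sum(rewards) / len(rewards)
    return [min(math.exp((r - baseline) / beta), max_weight) for r in rewards]


def weighted_regression_loss(predicted, target, weights):
    """Weighted mean squared error between policy actions and trajectory actions.

    predicted, target: one list of action values per trajectory.
    weights: one weight per trajectory, e.g. from awr_weights.
    """
    total = 0.0
    for pred, tgt, w in zip(predicted, target, weights):
        sq_err = sum((p - t) ** 2 for p, t in zip(pred, tgt)) / len(pred)
        total += w * sq_err
    return total / sum(weights)


# Hypothetical rewards for three generated trajectories.
rewards = [0.1, 0.5, 0.9]
weights = awr_weights(rewards, beta=0.5)

# Toy action values: what the policy outputs vs. what each trajectory contains.
policy_actions = [[0.0, 0.0], [0.2, 0.1], [0.4, 0.3]]
trajectory_actions = [[0.5, 0.5], [0.3, 0.2], [0.4, 0.4]]
loss = weighted_regression_loss(policy_actions, trajectory_actions, weights)
```

Trajectories that score above the baseline receive exponentially larger weights, so minimizing this loss pulls the policy toward the better-rated rollouts. A smaller `beta` sharpens that preference.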

Progress in simulation-driven robotics is running parallel to broader changes across AI development. Non-technical users are now building software products with AI assistance at scale, a trend documented in coverage of how AI vibe coding generates 2M-3M businesses for noncoders. Together, these developments point to AI steadily closing the gap between controlled experimentation and real-world deployment, across both physical and digital domains.

Usman Salis
