AllenAI has introduced MolmoBot, a vision-language-action model built for mobile manipulation tasks, now publicly available on Hugging Face. According to JIQIZHIXIN, the system was trained entirely in simulation and achieves zero-shot transfer to real-world environments without using a single frame of real robot data. This positions MolmoBot as part of a broader push toward more scalable AI training methods.

How MolmoBot's Simulation Pipeline Bridges the Sim-to-Real Gap

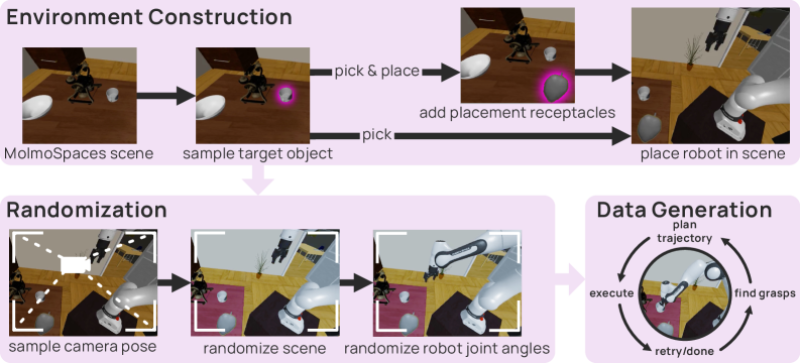

The architecture behind MolmoBot relies on three core components working in tandem: environment construction, domain randomization, and automated data generation pipelines that simulate diverse real-world conditions.

The framework randomizes camera positions, object placements, and robot joint configurations during simulation, which forces the model to generalize rather than memorize specific scenes, in a way that curated datasets cannot replicate.
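The randomization described above can be sketched in a few lines. This is a minimal illustration only, not MolmoBot's actual pipeline: the names `SceneConfig`, `sample_scene`, and `generate_scenes`, the object and joint counts, and every numeric range are hypothetical and chosen purely for readability.

```python
import random
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class SceneConfig:
    """One randomized simulation scene (hypothetical structure)."""
    camera_position: Tuple[float, float, float]  # x, y, z in meters
    object_positions: List[Tuple[float, float]]  # x, y on the work surface
    joint_angles: List[float]                    # radians, one per joint


def sample_scene(rng: random.Random, num_objects: int = 3,
                 num_joints: int = 7) -> SceneConfig:
    """Draw camera pose, object placements, and joint angles at random.

    All ranges below are illustrative assumptions, not published values.
    """
    camera = (rng.uniform(-0.5, 0.5),
              rng.uniform(-0.5, 0.5),
              rng.uniform(0.8, 1.5))
    objects = [(rng.uniform(-0.4, 0.4), rng.uniform(0.2, 0.8))
               for _ in range(num_objects)]
    joints = [rng.uniform(-1.0, 1.0) for _ in range(num_joints)]
    return SceneConfig(camera, objects, joints)


def generate_scenes(num_scenes: int, seed: int = 0) -> List[SceneConfig]:
    """Produce a reproducible batch of randomized scenes."""
    rng = random.Random(seed)
    return [sample_scene(rng) for _ in range(num_scenes)]


if __name__ == "__main__":
    for scene in generate_scenes(2):
        print(scene)
```

Because every scene draws a fresh camera pose, object layout, and starting joint configuration, a policy trained on such data cannot rely on any one fixed viewpoint or arrangement. Seeding the generator keeps a batch reproducible for debugging.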

By building robust manipulation strategies purely from synthetic environments, the system avoids the high cost of real-world data collection. The approach aligns with recent infrastructure advances, such as the AI training upgrades in PyTorch 2.1.1.

MolmoBot Outperforms Competing Robotics Models on DROID Benchmarks

In real-world evaluations, MolmoBot posted a 79.2% success rate on DROID benchmarks, a significant lead over competing models including π0.5 (pi-zero-point-five), which scored substantially lower under comparable conditions. The system handled tasks such as pick-and-place operations and articulated object manipulation without any additional fine-tuning on real data, indicating that large-scale simulation can meaningfully close the sim-to-real gap. These results are a genuine step forward compared with traditional robotics pipelines that lean heavily on real-world data collection.

Key capabilities demonstrated in the evaluation include:

- Zero-shot transfer from simulation to physical environments

- Pick-and-place task execution without real-world training

- Articulated object manipulation across varied configurations

- Strong generalization under randomized scene conditions

This kind of efficiency-driven progress is also visible in adjacent research, such as new frameworks boosting LLM accuracy by 70% while cutting token use by 39%, pointing to a wider trend of doing more with less across AI development.

A 79.2% zero-shot success rate on DROID isn't just a number; it's a proof of concept that simulation-only training can meet real-world robotics standards.

MolmoBot and the Shift Toward Simulation-Driven Robotics Deployment

MolmoBot's results point to a larger transition underway in the field. By removing the dependency on real-world data, simulation-first approaches could dramatically accelerate both research timelines and practical deployment across a range of robotics applications. The momentum behind this shift is visible across the industry, from academic labs to commercial players pushing humanoid robots into everyday settings. Tesla's Optimus push into daily life is one clear signal of how training efficiency gains are feeding directly into real-world autonomous systems. If zero-shot transfer continues to improve, it may fundamentally change how robots are built, tested, and deployed at scale.

Eseandre Mordi
