Multimodal AI has reached a point where simply measuring what a model can classify is no longer enough. As systems grow more capable of combining language, vision, and structured data, the research community needs tools that measure how deeply these models actually reason - not just what they recognize. That gap is exactly what Microsoft Research Asia's new UniG2U-Bench is built to close.

UniG2U-Bench Covers Geometry, Spatial Logic, and Chart Analysis

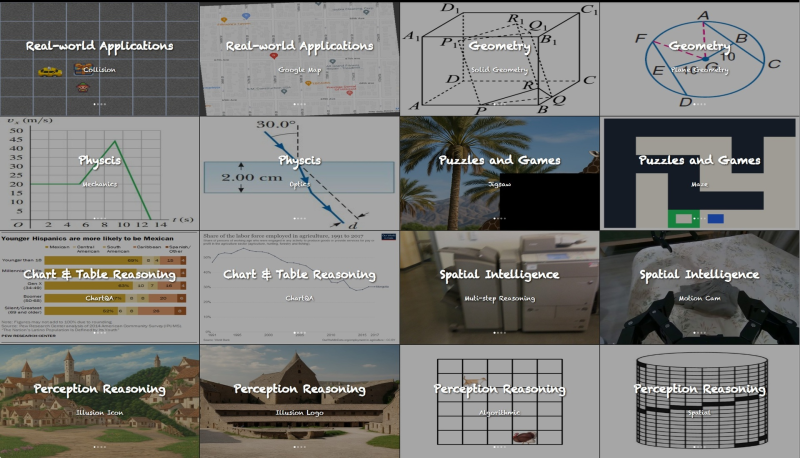

The benchmark evaluates unified multimodal models across seven reasoning regimes and 30 subtasks. These include geometry reasoning, physics interpretation, puzzle and maze navigation, spatial intelligence, perception reasoning, and chart or table analysis. Visual examples throughout the benchmark require models to interpret diagrams, maps, graphs, and real-world images - reflecting the expanding scope of what modern multimodal evaluation needs to cover. The work connects naturally to Microsoft Research's broader push into advanced reasoning systems, including the TARS method for speech AI.

Why Generation-Driven Understanding Matters for Unified AI Models

The core question UniG2U-Bench asks is whether generation actually improves understanding in multimodal architectures. Rather than scoring a model on whether it picks the right answer, the benchmark measures how a model constructs a response when working through something genuinely complex - a structured dataset, a layered diagram, or a visual logic puzzle. This reflects a broader shift in AI evaluation toward measuring reasoning depth rather than surface accuracy. It also arrives alongside growing scrutiny of model limitations, including MIT research showing LLMs perform up to 10x worse when processing their own prior outputs.

The timing matters. As the field pushes toward more capable unified systems, standardized benchmarks are becoming essential infrastructure. UniG2U-Bench joins a growing set of rigorous evaluation tools - including SWE-1.6, which recently scored 51.7 on SWE-bench Pro and outperformed its predecessor - that help researchers track genuine progress rather than benchmark-specific optimization. For labs building the next generation of multimodal models, that kind of honest measurement is increasingly hard to ignore.

Peter Smith

Peter Smith

Peter Smith

Peter Smith