Microsoft (MSFT) researchers have flagged a major reliability gap in today's AI systems. A new paper from Microsoft Research and Salesforce Research looked at how large language models actually behave when people talk to them over multiple turns, rather than in the tightly controlled single-prompt setups that dominate most benchmarks.

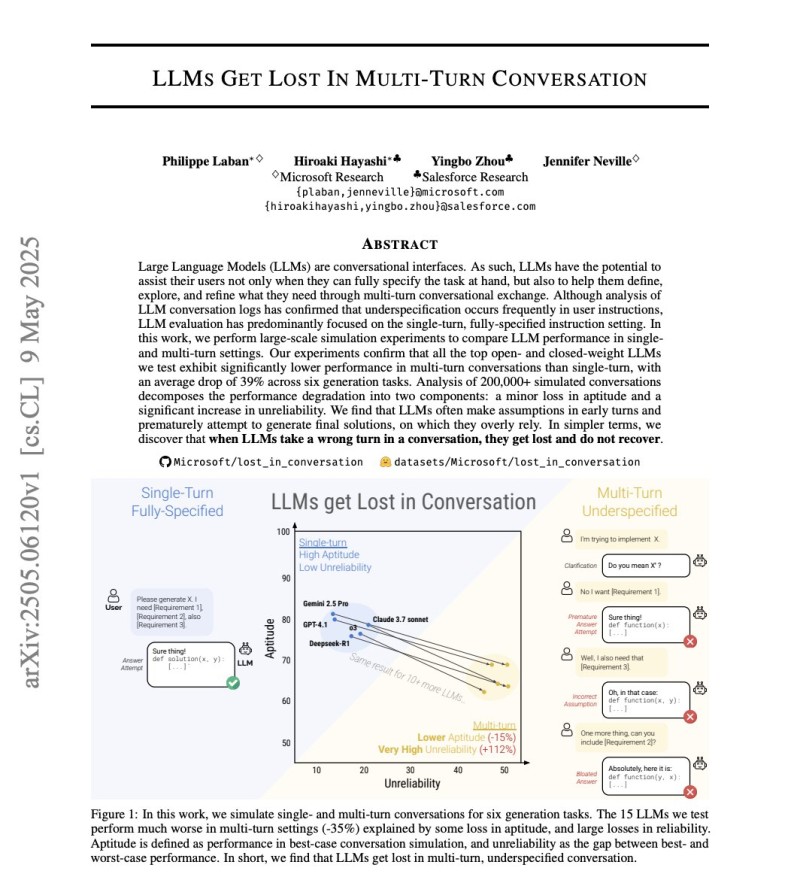

The study evaluated 15 major models, including GPT-4.1, Gemini 2.5 Pro, Claude 3.7 Sonnet, and DeepSeek R1, across more than 200,000 simulated conversations. The gap between benchmark performance and real conversational performance turned out to be much wider than the industry tends to assume.

A 39% Performance Drop When Conversations Get Longer

In single-prompt tasks, the tested models performed strongly. But when the same tasks unfolded across multiple dialogue turns, average performance dropped by around 39%. That is not a small margin when you consider how often these models are deployed in customer support tools, coding assistants, and enterprise agents where back-and-forth dialogue is the norm.

The researchers found that model aptitude declined by roughly 15% in multi-turn settings, but the bigger story was reliability: unreliability increased by approximately 112% during extended dialogues. Longer responses made the problem worse. Each additional assumption a model makes raises the chance of a cascading error, where one wrong step compounds into several bad ones by the end of the exchange.

Why This Matters for Enterprise AI Adoption

As AI tools get woven into enterprise workflows, the ability to hold coherent, accurate conversations over time matters more than nailing isolated prompts. The Microsoft study lands at a moment when the industry is pushing hard on agentic AI systems that are expected to complete complex multi-step tasks autonomously. A 39% performance drop in real dialogue conditions is a meaningful signal that current architectures still have ground to cover. Research across the AI ecosystem continues to explore new ways to close this gap, from improved reasoning frameworks to better memory management inside long conversations.

Saad Ullah

Saad Ullah

Saad Ullah

Saad Ullah