Multimodal AI models have a reliability problem: they regularly produce outputs that contradict the very images they're processing. A new decoding framework called LEAD targets this problem at inference time and requires no additional model training, so it can be applied to existing models as they are.

How LEAD Dynamically Switches Between Reasoning Modes

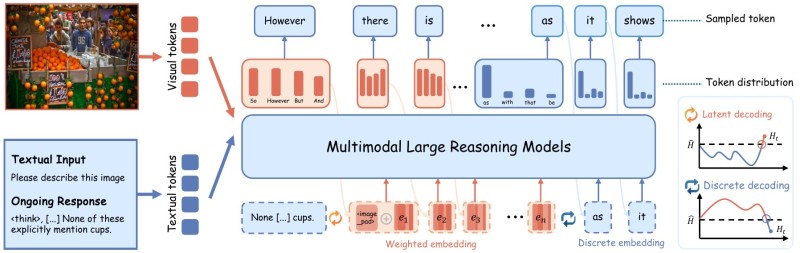

LEAD works at the decoding stage, where it monitors the model's confidence in real time. When the model enters a high-entropy state, meaning it is uncertain about the next token, the system activates latent reasoning to preserve flexibility instead of committing to a single token. When entropy is low and the model is confident, it switches back to standard discrete token selection. This adaptive mechanism lets the model stay grounded at exactly the steps where it is most likely to go wrong.
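To make the switching idea concrete, here is a minimal sketch in Python with NumPy. It is an illustration under stated assumptions, not LEAD's actual implementation: the function names, the threshold of 1.0 nats, and the choice to represent "latent reasoning" as a probability-weighted mix of token embeddings are all hypothetical.

```python
import numpy as np

def softmax(logits):
    """Convert raw logits into a probability distribution."""
    z = logits - np.max(logits)  # subtract the max for numerical stability
    e = np.exp(z)
    return e / e.sum()

def entropy(probs):
    """Shannon entropy in nats; zero-probability entries contribute nothing."""
    p = probs[probs > 0]
    return float(-(p * np.log(p)).sum())

def next_input(logits, embeddings, threshold=1.0):
    """Pick the next decoder input based on uncertainty.

    Assumption: "latent" mode is modeled as a soft embedding (the
    probability-weighted average of all token embeddings), and the
    threshold is an arbitrary illustrative value.
    """
    probs = softmax(logits)
    if entropy(probs) > threshold:
        # Uncertain: keep the whole distribution alive as a soft embedding.
        return "latent", probs @ embeddings
    # Confident: commit to the single most likely token.
    token = int(np.argmax(probs))
    return "discrete", embeddings[token]

# Toy vocabulary of 4 tokens with one-hot embeddings.
embeddings = np.eye(4)
mode_uncertain, _ = next_input(np.zeros(4), embeddings)                   # flat distribution
mode_confident, _ = next_input(np.array([10.0, 0, 0, 0]), embeddings)     # peaked distribution
```

In this toy setup the flat distribution lands in latent mode and the peaked one in discrete mode. A real system would need to tune the threshold for the model and task at hand.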

A key part of the framework is the visual anchor injection mechanism. During uncertain decoding steps, LEAD feeds visual reference points back into the process, pulling the model toward outputs that are better aligned with the original image. As decoding proceeds, the token distributions are repeatedly drawn back toward the image content, which counters the drift that leads to hallucinations.
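One way to picture anchor injection is as a small blend between the decoder's current state and a summary of the image. The sketch below assumes exactly that. The mean-pooled anchor, the blend weight `alpha`, and the function names are hypothetical choices for illustration, and the real mechanism may differ.

```python
import numpy as np

def inject_visual_anchor(hidden, patch_embeddings, alpha=0.2):
    """Nudge a decoder state toward the image.

    Assumptions: the anchor is the mean of the image patch embeddings,
    and injection is a simple convex blend controlled by alpha.
    """
    anchor = patch_embeddings.mean(axis=0)
    return (1.0 - alpha) * hidden + alpha * anchor

def cosine(a, b):
    """Cosine similarity between two vectors."""
    return float(a @ b / (np.linalg.norm(a) * np.linalg.norm(b)))

# Toy example: a state that has drifted orthogonal to the image content.
hidden = np.array([1.0, 0.0])
patches = np.array([[0.0, 1.0], [0.0, 1.0]])
anchor = patches.mean(axis=0)

before = cosine(hidden, anchor)
after = cosine(inject_visual_anchor(hidden, patches), anchor)
# after > before: the blended state is better aligned with the image summary
```

In this sketch the injection would run only on the steps flagged as uncertain, so confident steps are left untouched.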

Why Training-Free Methods Like LEAD Matter for Real-World AI

The design choice to avoid retraining is significant. Most approaches to reducing hallucinations require modifying model weights or building entirely new pipelines. LEAD avoids both, which means it can slot into existing multimodal systems without major overhead. As AI systems take on increasingly autonomous real-world tasks, from analyzing documents to processing visual data, outputs that contradict source material carry real consequences.

The broader trend here is toward reliability added at inference time rather than reliability baked in through training. LEAD fits that direction well. Alongside other recent advances, such as the StitchCUDA framework and its reported 273x GPU speedups, it points to a wave of optimization happening at the systems layer, not just the model layer. For teams deploying multimodal AI in production, decoding-stage interventions like this offer a low-friction path to meaningfully better outputs.

Peter Smith
