Google and OpenAI are currently setting the pace in AI image model performance. The latest Design Arena benchmark evaluation covering more than 60 models puts both companies at the top across every major visual AI category, from raw image generation to graphic design tasks.

Gemini 3.1 Flash and ChatGPT-Image Top 4 Benchmark Arenas

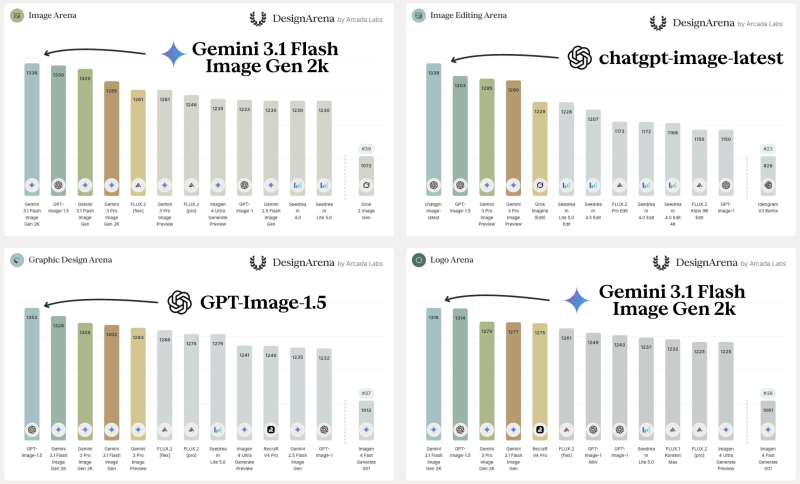

The results show category-specific leadership rather than one model dominating everything. Google's Gemini 3.1 Flash Image Gen 2K ranked first in both the Image Arena and the Logo Arena. OpenAI's chatgpt-image-latest took the top spot in Image Editing, and GPT-Image-1.5 led the Graphic Design Arena. Both companies hold multiple top placements in all four arenas, pointing to a broad, competitive push across every segment of visual AI.

Multimodal AI Benchmarks Are Shaping the Competitive Landscape

The benchmark results come as both companies accelerate model iteration cycles. Recent results show Gemini 3 Flash scoring 0.867 in half-life prediction on ScienceAI Bench and 90.4 on GPQA Diamond, while separate results placed GPT-5.4 Mini at 72.1 on OSWorld. Efficiency and reasoning gains are feeding directly into image performance.

The Design Arena data makes one thing clear: multimodal capabilities are now a primary competitive front. With Gemini 3 Flash RSA achieving 59.31 on ARC-AGI at lower compute cost, the race is no longer just about raw quality but also about efficiency at scale. For developers and creative teams, benchmark rankings across image generation, editing, and design increasingly inform which models get integrated into real workflows. Google Stitch's addition of Gemini 3 Flash for faster design work is one early example of how benchmark performance translates into product decisions.

Marina Lyubimova
