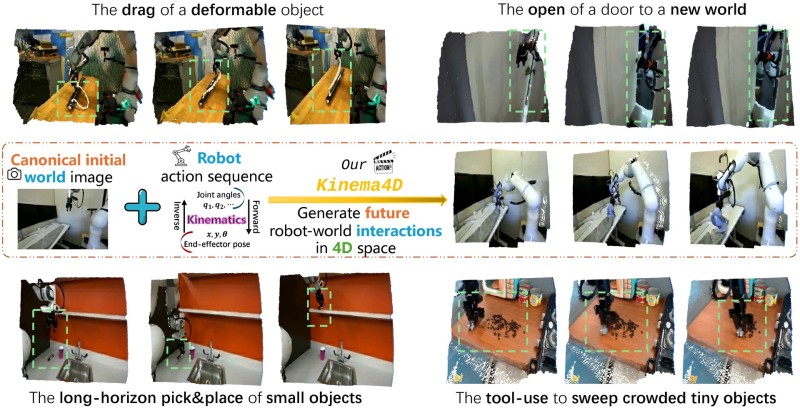

⬤ Researchers have introduced Kinema4D, an action-conditioned 4D generative robotic simulator that separates precise kinematic control from environmental dynamics. As reported by DailyPapers, the system enables physically plausible simulation of complex robot-world interactions by modeling robot motion and the environment as distinct but coordinated processes.

⬤ The framework generates future robot-world interactions across both spatial and temporal dimensions. It decouples robot motion (joint angles and end-effector positioning) from the environment's responses, which allows real-world physics to be modeled more accurately. Demonstrated tasks include deformable object manipulation, door opening, and long-horizon pick-and-place operations, all scenarios that demand high interaction consistency.

⬤ Kinema4D also handles tool-use tasks, such as sweeping clusters of small objects, highlighting its value for multi-step robotic workflows. To support training at scale, the team released Robo4D-200k, a large-scale dataset covering a wide variety of robotic tasks. The dataset enables high-fidelity simulation and opens the door to zero-shot generalization across unseen environments.

⬤ The release reflects a broader push in AI-driven robotics to close the gap between simulated training and real-world deployment. Advances in 4D modeling could meaningfully accelerate automation, embodied AI research, and complex task execution in industrial and service robotics environments.

Eseandre Mordi