Getting AI models to render legible, structurally correct text inside generated images has been one of the field's most stubborn problems. Researchers at Huazhong University of Science and Technology, in collaboration with ByteDance, have now introduced TextPecker, a plug-and-play training framework that directly tackles this gap. The results are hard to ignore: an 8.7% improvement in semantic alignment and a 4% gain in structural fidelity for Chinese text rendering in Qwen-Image, pushing the approach to a new state-of-the-art for visual text generation.

How TextPecker Fixes Structural Distortion in AI-Generated Text

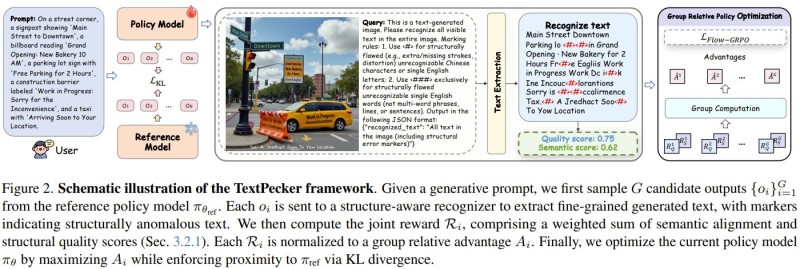

TextPecker works by training image models to recognize and self-correct their own character-level mistakes. The framework introduces a specialized dataset annotated with specific structural errors, covering issues like missing strokes, misaligned components, and garbled characters.

It also includes a mechanism for generating diverse error cases on the fly, ensuring that models are exposed to a broad range of failure modes during training. Because it is designed as a faster and more efficient image generation add-on rather than a full model replacement, TextPecker can be integrated into existing text-to-image pipelines without architectural overhaul.

Real-World Impact: From Design to Advertising

The practical upside extends well beyond benchmark scores. Accurate text in generated images is a hard requirement in design, advertising, and content automation workflows, where misrendered characters can make assets unusable. TextPecker's measurable accuracy gains mirror the trajectory seen with Qwen-Image's latest speed and efficiency improvements, suggesting that the Qwen ecosystem is converging on both faster inference and higher output quality simultaneously. This dual focus on reliability and performance is increasingly a competitive baseline in multimodal AI development.

The broader trend is consistent: improving output quality is now a central priority alongside scaling. As multi-agent AI systems outperform single-model approaches in complex tasks, the field is learning that specialization and targeted correction, rather than brute-force scaling alone, drive the most reliable real-world gains. TextPecker is a clear example of that pattern applied to a very specific and commercially meaningful problem.

Eseandre Mordi

Eseandre Mordi

Eseandre Mordi

Eseandre Mordi