⬤ Researchers from HKUST, Northwestern Polytechnical University, and Knowin introduced LatentMorph, an AI framework that integrates reasoning directly into text-to-image generation. Instead of relying on explicit textual reasoning steps, the system performs continuous refinement inside latent space, allowing the model to adapt its creative process in real time without breaking generation coherence.

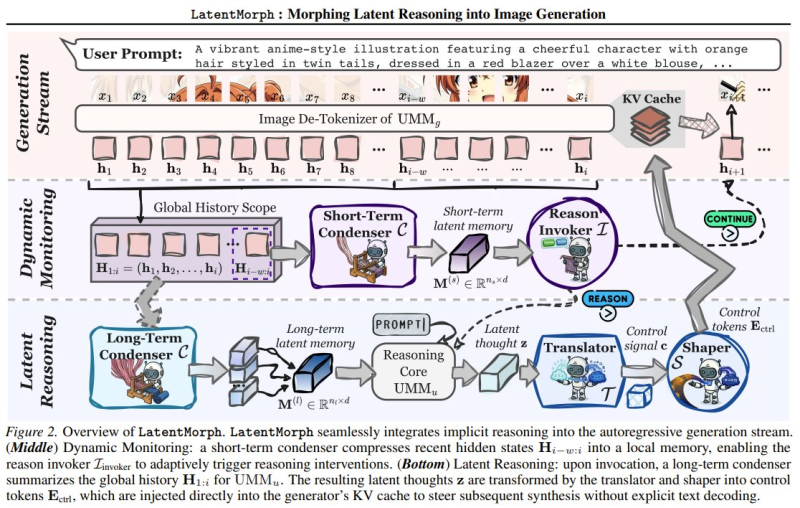

⬤ The architecture uses a multi-stage pipeline featuring a short-term condenser, long-term condenser, reasoning core, translator, and shaper. These components work together to monitor generation history and invoke reasoning only when needed. Rather than producing explicit reasoning tokens, the model guides output through latent thought representations, maintaining contextual memory throughout the process. This mirrors broader efforts to steer model behavior without disrupting coherence, as explored in "New AI Method Uses 1 Billion Activations to Control LLM Behavior Without Breaking Coherence."
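The control flow described above (watch the generation history, invoke reasoning only when needed, then steer the next step in latent space) can be illustrated with a toy sketch. This is not the authors' implementation: the class name `LatentReasoningLoop`, the cosine-similarity trigger, the `threshold` and `strength` parameters, and the averaging used for each "condenser" are all assumptions chosen only to make the idea concrete with plain Python lists.

```python
import math
from dataclasses import dataclass, field
from typing import List

Vector = List[float]


def mean(vectors: List[Vector]) -> Vector:
    """Element-wise mean of equally sized vectors."""
    n = len(vectors)
    return [sum(col) / n for col in zip(*vectors)]


def cosine(a: Vector, b: Vector) -> float:
    """Cosine similarity; returns 0.0 if either vector is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    if na == 0 or nb == 0:
        return 0.0
    return dot / (na * nb)


@dataclass
class LatentReasoningLoop:
    """Hypothetical stand-in for the five named components."""
    prompt: Vector            # assumed: an embedding of the text prompt
    window: int = 3           # assumed: size of the short-term window
    threshold: float = 0.8    # assumed: trigger level for reasoning
    strength: float = 0.5     # assumed: how hard guidance steers
    history: List[Vector] = field(default_factory=list)
    invocations: int = 0

    def short_term(self) -> Vector:
        # Short-term condenser (assumed): mean of the most recent latents.
        return mean(self.history[-self.window:])

    def long_term(self) -> Vector:
        # Long-term condenser (assumed): mean of the whole history.
        return mean(self.history)

    def needs_reasoning(self) -> bool:
        # Assumed trigger: recent latents have drifted away from the prompt.
        return cosine(self.short_term(), self.prompt) < self.threshold

    def reasoning_core(self, short: Vector, long: Vector) -> Vector:
        # Latent "thought" (assumed): direction from context toward prompt.
        context = mean([short, long])
        return [p - c for p, c in zip(self.prompt, context)]

    def translator(self, thought: Vector) -> Vector:
        # Translator (assumed): scale the thought into a guidance signal.
        return [self.strength * t for t in thought]

    def shaper(self, latent: Vector, guidance: Vector) -> Vector:
        # Shaper (assumed): apply guidance to the current latent.
        return [x + g for x, g in zip(latent, guidance)]

    def step(self, latent: Vector) -> Vector:
        """Record one generation step; steer it only if reasoning fires."""
        self.history.append(latent)
        if not self.needs_reasoning():
            return latent
        self.invocations += 1
        thought = self.reasoning_core(self.short_term(), self.long_term())
        return self.shaper(latent, self.translator(thought))


loop = LatentReasoningLoop(prompt=[1.0, 0.0])
on_track = loop.step([1.0, 0.0])   # aligned with the prompt: passes through
steered = loop.step([0.0, 1.0])    # drifted: reasoning fires and nudges it
```

The point of the sketch is only the shape of the loop: no reasoning tokens are emitted at any stage, and the extra work happens on the steps where the trigger fires rather than on every step.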

⬤ Benchmark results show LatentMorph raises generation scores by up to 25% and outperforms explicit reasoning methods by 15% on abstract tasks. On the efficiency side, inference time drops by 44% and token consumption falls by 51%. The framework also reached 71% cognitive alignment with human intuition, suggesting its latent reasoning mirrors human creative thinking more closely than prior approaches. Related work on reducing model errors through adaptive learning appears in "New Method Cuts LLM Coding Errors by 40% With Adaptive Training Approach."

⬤ LatentMorph reflects a wider push to embed reasoning into generative models without sacrificing compute efficiency. As text-to-image systems grow more capable, architectures that reason inside latent representations could reshape how next-generation generative AI is built, making implicit reasoning a standard design pattern rather than an experimental addition.

Eseandre Mordi
