A new study on LLM-based multi-agent systems is challenging a common assumption in AI development: that adding more agents automatically means better results. The research, titled "Understanding Agent Scaling in LLM-Based Multi-Agent Systems via Diversity," finds that agent diversity is a stronger driver of performance than raw numbers. The timing matters - 79% of enterprises now deploy AI agents as adoption moves well past the pilot stage.

Why Identical Agents Hit a Wall

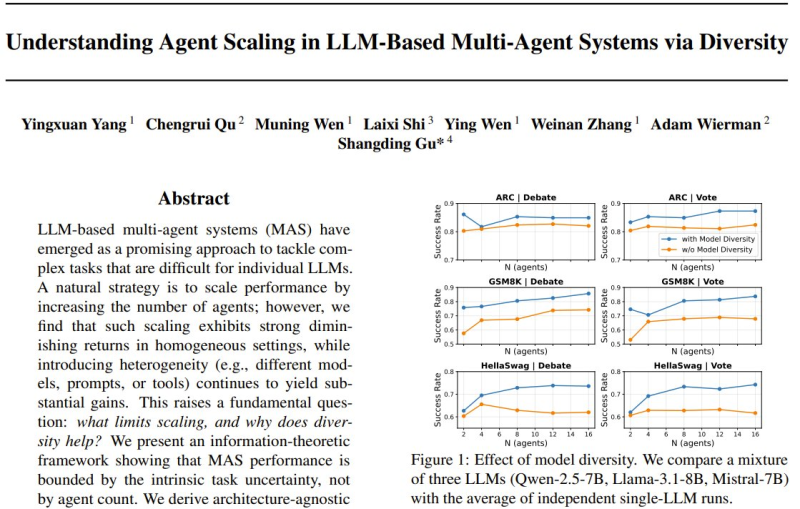

The study tested multi-agent setups across reasoning benchmarks including ARC, GSM8K, and HellaSwag, using both collaborative debate and voting mechanisms. When all agents share the same model, they tend to produce correlated responses - meaning more agents simply repeat the same reasoning, not extend it.

Performance gains plateau fast. Adding the 10th or 16th clone contributes almost nothing new to the system's collective intelligence. This mirrors a broader push for smarter AI infrastructure, as seen in CAS unveiling LightRetriever for 1000x faster LLM search.

How Model Diversity Unlocks Independent Reasoning

The researchers tested heterogeneous setups mixing Qwen-2.5-7B, Llama-3.1-8B, and Mistral-7B - and results shifted dramatically. Different models, prompts, and reasoning strategies create independent information channels, giving the system genuinely new signals to work with. Heterogeneous groups consistently outperformed homogeneous ones across tasks.

For teams building agentic pipelines, the implication is practical: prioritize model and prompt diversity over headcount. Two well-chosen, distinct agents can outperform a fleet of sixteen clones. This could reshape how collaboration frameworks are designed for reasoning, automation, and retrieval tasks - a shift already visible in findings like the GPT-5 Shadow API study revealing 47% performance gaps across real-world AI deployments.

Usman Salis

Usman Salis

Usman Salis

Usman Salis