Alibaba Group Holding Ltd. (BABA) has quietly expanded its AI lineup. The newly released Qwen3.5 Small Model Series includes four variants: 0.8B, 2B, 4B, and 9B. Built on the Qwen3.5 foundation, the series brings native multimodal capabilities to smaller, more efficient architectures designed for edge deployment and research use.

The goal, as Alibaba frames it, is straightforward: deliver models that are smart yet lightweight. The two smallest variants, 0.8B and 2B, are aimed squarely at fast, resource-constrained environments where full-scale cloud inference isn't practical.

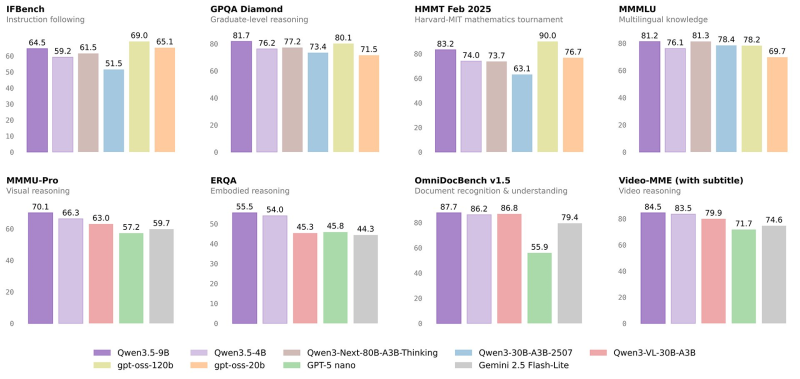

90.0 in Math, Mid-80s in Reasoning: What the Benchmarks Show

The numbers are hard to ignore. The Qwen3.5-9B model posted competitive results across instruction following, graduate-level reasoning, and Harvard-MIT mathematics tasks. The highest math benchmark score came in at 90.0, with other reasoning categories landing in the mid-70s to low-90s. For a model in this size class, those are strong results. The full benchmark coverage breaks down how the Qwen3.5 family compares across each category.

Smart yet lightweight: that's the promise Alibaba is putting behind the Qwen3.5 Small Model Series, and the benchmarks suggest it's not just marketing.

Why Efficient Models Matter More Than Ever for BABA

This release fits into a broader pattern for Alibaba's AI team. The Qwen researchers have been active on multiple fronts, with recent work including SiameseNorm, a 13B-parameter model showing notable training efficiency gains. Meanwhile, the overall Qwen model family has been climbing the charts, as seen in recent rankings where Alibaba's Qwen models swept AI leaderboards with over 800 likes.

The strategic logic is clear. As AI capability expands beyond large cloud-only systems into industrial, edge, and enterprise settings, having a full model spectrum matters. For BABA, showing that its smaller models can still deliver on reasoning, math, language, and multimodal tasks strengthens its position across both cloud AI services and next-generation compute platforms.

Marina Lyubimova
