A new open-source project called code-review-graph is attracting serious attention from developers grappling with one of AI-assisted coding's most persistent headaches: inefficient use of context. Instead of brute-force repository scanning, the tool builds a structured graph of the codebase, cutting token consumption dramatically.

Why Claude Code Wastes Tokens on Large Codebases

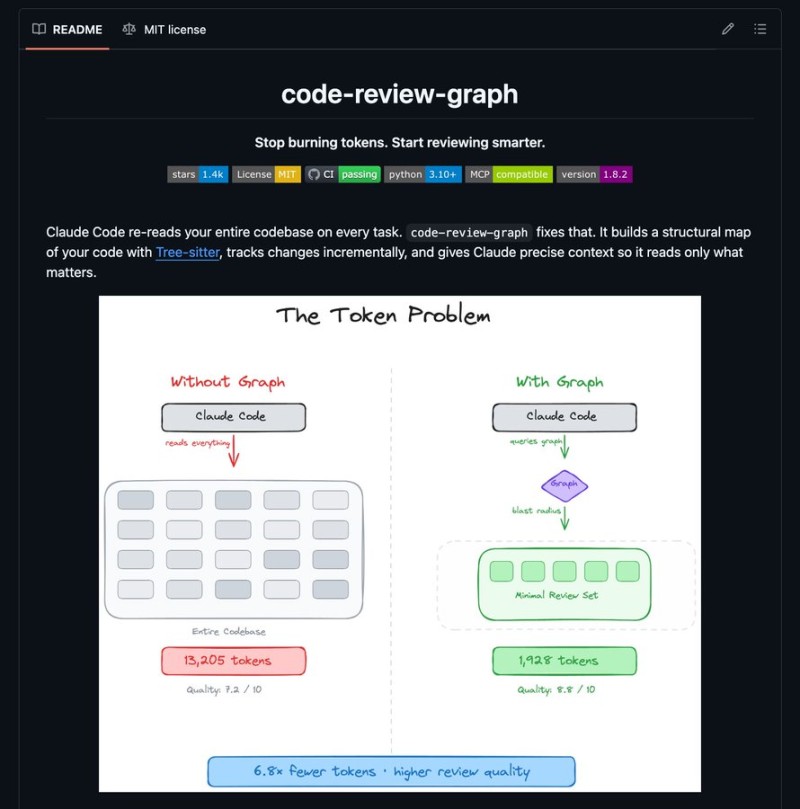

The root problem is simple but costly. Large codebases contain thousands of files that won't fit into a model's context window, so Claude Code typically reads only part of the repository and fills the gaps with assumptions, which leads to inaccurate output. code-review-graph addresses this by parsing the entire repository with Tree-sitter and mapping functions, classes, imports, and dependencies into a persistent graph stored in SQLite.
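The article doesn't show the indexer's internals, so here is a minimal sketch of the idea under stated assumptions. Instead of Tree-sitter (which covers many languages), it uses Python's standard-library `ast` module so it runs without dependencies, and it therefore indexes Python files only. The table layout, the `index_repo` and `_call_target` helpers, and the name-resolution rules are all hypothetical, not code-review-graph's actual schema or code. Modules, top-level functions, and classes become nodes; import and call relationships become edges in SQLite.

```python
import ast
import sqlite3

# Hypothetical schema: one row per symbol, one row per relationship.
SCHEMA = """
CREATE TABLE IF NOT EXISTS nodes (
    name TEXT PRIMARY KEY,  -- qualified name, e.g. 'billing.charge'
    kind TEXT NOT NULL,     -- 'module', 'function' or 'class'
    file TEXT NOT NULL
);
CREATE TABLE IF NOT EXISTS edges (
    src  TEXT NOT NULL,     -- the dependent symbol
    dst  TEXT NOT NULL,     -- the symbol it depends on
    kind TEXT NOT NULL,     -- 'imports' or 'calls'
    PRIMARY KEY (src, dst, kind)
);
"""


def _call_target(call, module, aliases):
    """Resolve f(...) or mod.f(...) to a qualified name; None for other call shapes."""
    func = call.func
    if isinstance(func, ast.Name):
        # Imported names resolve via aliases; anything else is assumed local.
        return aliases.get(func.id, f"{module}.{func.id}")
    if isinstance(func, ast.Attribute) and isinstance(func.value, ast.Name):
        base = aliases.get(func.value.id, func.value.id)
        return f"{base}.{func.attr}"
    return None


def index_repo(conn, sources):
    """Parse {path: source} pairs and store modules, symbols and dependencies."""
    conn.executescript(SCHEMA)
    for path, source in sources.items():
        module = path.removesuffix(".py").replace("/", ".")
        tree = ast.parse(source, filename=path)
        conn.execute("INSERT OR REPLACE INTO nodes VALUES (?, 'module', ?)",
                     (module, path))

        aliases = {}  # name bound in this file -> qualified name it refers to
        for stmt in tree.body:
            if isinstance(stmt, ast.Import):
                for a in stmt.names:
                    # 'import a.b' binds 'a'; 'import a.b as x' binds 'x' to 'a.b'.
                    local = a.asname or a.name.split(".")[0]
                    aliases[local] = a.name if a.asname else local
                    conn.execute("INSERT OR IGNORE INTO edges VALUES (?, ?, 'imports')",
                                 (module, a.name))
            elif isinstance(stmt, ast.ImportFrom) and stmt.module:
                for a in stmt.names:
                    aliases[a.asname or a.name] = f"{stmt.module}.{a.name}"
                conn.execute("INSERT OR IGNORE INTO edges VALUES (?, ?, 'imports')",
                             (module, stmt.module))

        for stmt in tree.body:
            if isinstance(stmt, (ast.FunctionDef, ast.AsyncFunctionDef, ast.ClassDef)):
                name = f"{module}.{stmt.name}"
                kind = "class" if isinstance(stmt, ast.ClassDef) else "function"
                conn.execute("INSERT OR REPLACE INTO nodes VALUES (?, ?, ?)",
                             (name, kind, path))
                # Every call anywhere in the body (including methods) becomes a 'calls' edge.
                for node in ast.walk(stmt):
                    if isinstance(node, ast.Call):
                        target = _call_target(node, module, aliases)
                        if target:
                            conn.execute("INSERT OR IGNORE INTO edges VALUES (?, ?, 'calls')",
                                         (name, target))

    # Keep only edges whose target is defined in the indexed files
    # (drops builtins such as round() and third-party modules).
    conn.execute("DELETE FROM edges WHERE dst NOT IN (SELECT name FROM nodes)")
    conn.commit()
```

For example, indexing a `billing.py` that defines `charge` and a `checkout.py` whose `pay` calls it yields an `imports` edge from `checkout` to `billing` and a `calls` edge from `checkout.pay` to `billing.charge`. A call to the built-in `round` is dropped because it points outside the indexed code. Because the graph lives in a file-backed database rather than in the prompt, later queries only pull the rows they need.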

When a file changes, the system performs blast-radius analysis to identify only the directly affected components instead of scanning the entire codebase.
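Blast-radius analysis amounts to walking the dependency graph backwards from whatever changed. The sketch below is a hypothetical illustration, not code-review-graph's implementation: `blast_radius` takes (dependent, dependency) pairs like the ones an indexer would produce, inverts them, and runs a breadth-first search from the changed symbols. The article only says "directly affected components", so the depth limit is an assumed parameter: `max_depth=1` returns only direct dependents, while `None` follows dependents transitively.

```python
from collections import defaultdict, deque


def blast_radius(edges, changed, max_depth=None):
    """Return {symbol: distance} for every symbol that depends on a changed one.

    edges: iterable of (dependent, dependency) pairs, e.g. ("checkout.pay", "billing.charge").
    changed: set of symbols touched by the diff.
    max_depth: 1 keeps only direct dependents; None follows dependents transitively.
    """
    reverse = defaultdict(set)  # dependency -> symbols that use it
    for dependent, dependency in edges:
        reverse[dependency].add(dependent)

    seen = set(changed)
    affected = {}
    queue = deque((symbol, 0) for symbol in changed)
    while queue:
        symbol, depth = queue.popleft()
        if max_depth is not None and depth >= max_depth:
            continue  # don't expand past the depth limit
        for dependent in reverse[symbol]:
            if dependent not in seen:
                seen.add(dependent)
                affected[dependent] = depth + 1
                queue.append((dependent, depth + 1))
    return affected
```

Returning each symbol's distance lets a reviewer look at direct callers first. Breadth-first search reaches every symbol along a shortest path, so each recorded distance is the minimum number of hops from the change.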

49x Fewer Tokens, Better Review Quality

The efficiency numbers are hard to ignore. According to the release data, the tool reduces token usage by up to 49x in large monorepos and by around 6.8x in standard review workflows. In one direct comparison, usage drops from roughly 13,205 tokens for a full-codebase scan to just 1,928 tokens with the graph-based approach, while review quality also improves. The system can also reindex approximately 2,900 files in two seconds and supports 12 programming languages.
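The two figures in that comparison line up with the headline workflow number. As a quick check using only the quoted token counts:

```latex
\frac{13{,}205}{1{,}928} \approx 6.85,
\qquad
1 - \frac{1{,}928}{13{,}205} \approx 0.854
```

So the drop from 13,205 to 1,928 tokens is the roughly 6.8x reduction, or about 85% fewer tokens. The 49x monorepo figure can't be checked from the numbers given here.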

This fits a broader pattern in AI development: as experienced users increasingly drive Claude adoption, the main bottleneck is no longer model capability but efficient context handling.

Industry data points the same way: as AI adoption scales, effective usage, not mere access, plays a key role in performance outcomes. Tools like code-review-graph target exactly that gap by giving models only the code that matters. For teams working in large monorepos, that precision means lower costs and more reliable output.

With Claude Opus 4.6 already scoring 78.3% on MRCR v2 with a 1M-token context window, pairing expanded context capacity with smarter token management like code-review-graph's could become a standard workflow for serious engineering teams.

Saad Ullah