⬤ AI platform Unsloth has launched Unsloth Studio, an open-source web interface for training and running large language models locally. As Unsloth AI reported, the system replaces PyTorch's default autograd with custom backpropagation kernels written in OpenAI's Triton language, hand-deriving the backward pass for key operations instead of relying on automatic differentiation.
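
To make "custom backpropagation" concrete, here is a minimal sketch in plain Python, not Triton and not Unsloth's code. It hand-derives the gradient of a cross-entropy loss (softmax minus one-hot) and checks it against a finite-difference estimate, which is the kind of check a hand-written backward kernel has to pass. Cross-entropy is chosen only as an illustrative example, and all function names are hypothetical.

```python
import math

def softmax(logits):
    # Subtract the max for numerical stability before exponentiating.
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

def cross_entropy_forward(logits, target):
    # Loss = logsumexp(z) - z[target], computed in a numerically stable way.
    m = max(logits)
    lse = m + math.log(sum(math.exp(z - m) for z in logits))
    return lse - logits[target]

def cross_entropy_backward(logits, target):
    # Hand-derived gradient: dL/dz_i = softmax(z)_i - 1[i == target].
    grad = softmax(logits)
    grad[target] -= 1.0
    return grad

def numerical_grad(f, xs, eps=1e-6):
    # Central finite differences, used only to verify the analytic gradient.
    grads = []
    for i in range(len(xs)):
        plus = list(xs)
        plus[i] += eps
        minus = list(xs)
        minus[i] -= eps
        grads.append((f(plus) - f(minus)) / (2 * eps))
    return grads

logits = [2.0, -1.0, 0.5, 3.0]
target = 2
analytic = cross_entropy_backward(logits, target)
numeric = numerical_grad(lambda z: cross_entropy_forward(z, target), logits)
for i, (a, n) in enumerate(zip(analytic, numeric)):
    print(f"z[{i}]: analytic {a:+.6f}  numeric {n:+.6f}")
```

The closed-form backward needs only one softmax pass, whereas a generic autograd graph would store and revisit every intermediate of the forward computation. That gap is what hand-written kernels aim to exploit.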

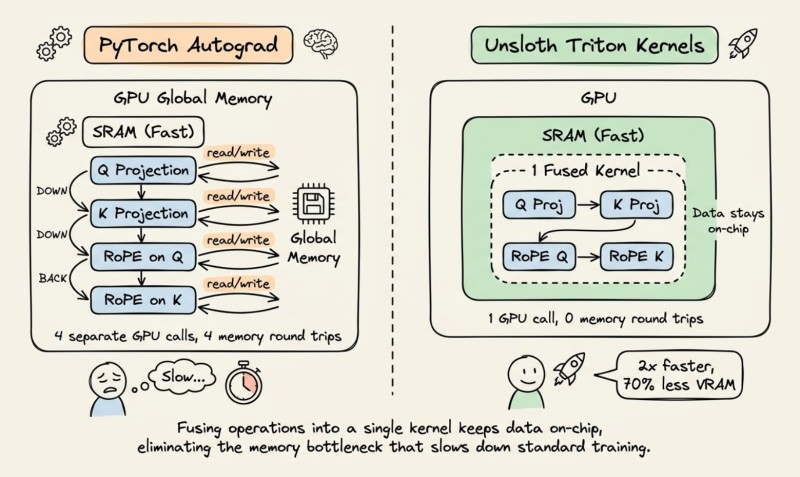

⬤ The headline engineering gain comes from kernel fusion. A standard PyTorch workflow runs operations such as the Q/K projections and rotary position embeddings (RoPE) as separate GPU kernels, so intermediate results make repeated round trips between on-chip SRAM and global memory. Unsloth collapses these steps into a single fused kernel that keeps data on-chip throughout. According to Unsloth, the result is more than 2x faster training and up to 70% lower VRAM consumption, with no loss in accuracy.
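
The following toy model in pure Python illustrates why fusion saves memory traffic. It is a sketch, not a Triton kernel and not Unsloth's implementation. It covers only the Q projection (K works the same way), uses the standard RoPE angle formula with base 10000 as an assumption, and counts hypothetical "global memory" reads and writes of the intermediate q vector only. Weight and input reads are identical in both paths, so they are left out.

```python
import math

def rope_angles(pos, dim, base=10000.0):
    # One rotation angle per (even, odd) pair of dimensions (standard RoPE form).
    return [pos * base ** (-2 * i / dim) for i in range(dim // 2)]

def dot(row, x):
    return sum(w * v for w, v in zip(row, x))

def rotate(a, b, theta):
    # Rotate the 2-D pair (a, b) by angle theta.
    c, s = math.cos(theta), math.sin(theta)
    return [a * c - b * s, a * s + b * c]

def unfused_q_rope(W, x, pos, traffic):
    # "Kernel" 1: Q projection; the full q vector is written to global memory.
    q = [dot(row, x) for row in W]
    traffic["writes"] += len(q)
    # "Kernel" 2: reads q back from global memory, applies RoPE, writes the result.
    traffic["reads"] += len(q)
    out = []
    for i, theta in enumerate(rope_angles(pos, len(q))):
        out += rotate(q[2 * i], q[2 * i + 1], theta)
    traffic["writes"] += len(out)
    return out

def fused_q_rope(W, x, pos, traffic):
    # Single "kernel": each (even, odd) pair is projected and rotated while it is
    # still in fast local storage, so the intermediate q never hits global memory.
    out = []
    for i, theta in enumerate(rope_angles(pos, len(W))):
        out += rotate(dot(W[2 * i], x), dot(W[2 * i + 1], x), theta)
    traffic["writes"] += len(out)
    return out

W = [[0.1, 0.2, 0.3], [0.4, -0.5, 0.6], [-0.7, 0.8, 0.9], [1.0, 0.0, -1.0]]
x = [1.0, 2.0, 3.0]
t_unfused = {"reads": 0, "writes": 0}
t_fused = {"reads": 0, "writes": 0}
q_unfused = unfused_q_rope(W, x, pos=5, traffic=t_unfused)
q_fused = fused_q_rope(W, x, pos=5, traffic=t_fused)
print(t_unfused, t_fused)  # {'reads': 4, 'writes': 8} {'reads': 0, 'writes': 4}
```

Both paths produce the same rotated vector, but the fused one touches global memory for the intermediate only once, at the final write. On a real GPU the same reasoning applies per tile, with SRAM and registers playing the role of "local storage".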

⬤ Beyond raw speed, Unsloth Studio ships a no-code interface that covers the full training workflow. It includes sandboxed execution of Python and bash scripts, a self-healing tool-calling system that automatically retries failed calls, and support for GRPO reinforcement learning, which needs no separate critic model and therefore uses less VRAM than PPO-based approaches. This kind of integrated tooling mirrors the rise of autonomous AI agent workflows that automate multi-step processes end to end. The platform also builds datasets from PDFs and CSVs, exports models to multiple formats, and runs entirely offline.
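
The reason GRPO can drop the critic is that it scores each sampled completion relative to the other completions for the same prompt. The minimal sketch below shows that group-relative advantage step only. It is not Unsloth's implementation. The function name is hypothetical, it uses the population standard deviation (implementations differ on this), and it omits the rest of the GRPO objective (clipped policy ratio, KL penalty).

```python
import statistics

def group_relative_advantages(rewards, eps=1e-8):
    # GRPO-style baseline: compare each completion with the other completions
    # sampled for the same prompt, instead of asking a learned critic for V(s).
    mean = statistics.fmean(rewards)
    std = statistics.pstdev(rewards)  # population std; implementations vary
    return [(r - mean) / (std + eps) for r in rewards]

# Hypothetical rewards for four completions of one prompt (1 = correct answer).
rewards = [1.0, 0.0, 0.0, 1.0]
advantages = group_relative_advantages(rewards)
print([round(a, 4) for a in advantages])  # [1.0, -1.0, -1.0, 1.0]
```

Because the baseline is just the group mean, no value network has to be kept in GPU memory, which is where the VRAM saving over PPO comes from. If every completion in a group gets the same reward, all advantages are zero and that group contributes no learning signal.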

⬤ The release fits a wider pattern of efficiency-focused AI tooling gaining ground. Performance optimization, lower hardware requirements, and tighter integrated workflows are fast becoming the real competitive edge, and Unsloth Studio positions itself squarely at that intersection.

Victoria Bazir
