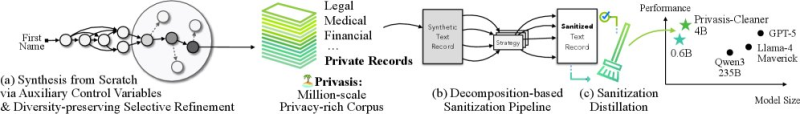

NVIDIA has quietly pushed out one of the more practical AI datasets in recent memory. Privasis is a synthetic, million-scale collection of private-style records - think medical files, financial documents, and legal contracts - purpose-built to help train models that can identify and sanitize sensitive text. As reported by DailyPapers, the dataset is publicly available on Hugging Face and comes annotated with roughly 54 million privacy attributes across its entire corpus.

The idea here is straightforward but important. Teaching an AI to handle sensitive information responsibly requires a lot of labeled examples - and real-world records are largely off-limits because they contain actual personal data. Privasis sidesteps that problem by generating synthetic records that mirror the structure and sensitivity patterns of genuine documents, without exposing any real personal data in the process.

54 Million Privacy Attributes and a Decomposition Pipeline

What makes Privasis genuinely useful is how it is structured. Each record in the dataset is tagged with privacy attributes that help models understand not just where sensitive data appears, but what category it falls into - whether that is a patient name, a bank account number, or a legal clause.

That granularity matters when you are trying to build systems that can sanitize text without destroying its overall meaning or utility. With category-level labels, a model can learn to remove or replace only the sensitive spans and leave the rest of the document usable.
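To make the idea of category-level tagging concrete, here is a minimal Python sketch. The `PrivacyAttribute` schema, the category names, and the `redact` helper are hypothetical illustrations and are not taken from the actual Privasis format, which is not described here. The sketch only shows how span-plus-category labels allow selective sanitization.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PrivacyAttribute:
    """Hypothetical annotation: a character span plus a category label."""
    start: int      # offset where the sensitive span begins
    end: int        # offset just past the end of the span
    category: str   # illustrative label, e.g. "patient_name"


def redact(text: str, attributes: list[PrivacyAttribute], categories: set[str]) -> str:
    """Replace spans whose category is selected with a [CATEGORY] placeholder."""
    out = text
    # Work right to left so earlier offsets stay valid after each replacement.
    for attr in sorted(attributes, key=lambda a: a.start, reverse=True):
        if attr.category in categories:
            out = out[:attr.start] + f"[{attr.category.upper()}]" + out[attr.end:]
    return out


# Made-up record; assumes annotated spans do not overlap.
record_text = "Patient Jane Doe paid from account 12345678."
record_attrs = [
    PrivacyAttribute(8, 16, "patient_name"),
    PrivacyAttribute(35, 43, "bank_account_number"),
]

print(redact(record_text, record_attrs, {"patient_name"}))
# Patient [PATIENT_NAME] paid from account 12345678.
```

Because each span carries its own category, a caller can redact names while keeping account numbers, or the reverse, which is the kind of selective sanitization that preserves most of a document's usefulness.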

The release also includes a detailed architectural framework that breaks sanitization into stages. Synthetic text records are fed into a decomposition-based pipeline that strips out or transforms sensitive content before producing a clean output. Distillation techniques are layered on top to keep the resulting models efficient - a deliberate nod to the real-world constraint that these systems often need to run at scale without burning through compute. This modular design echoes a broader trend in which multi-agent AI systems outperform single models on complex tasks.
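A staged pipeline of this kind can be sketched in a few lines of Python. This is an illustration under stated assumptions, not the pipeline shipped with Privasis: the three stages (`decompose`, `sanitize_chunk`, `sanitize`) and the category names are hypothetical, and simple regular expressions stand in for the learned detectors a real system would use.

```python
import re

# Stand-in detectors: plain regexes, NOT the learned models a real system would use.
DETECTORS = {
    "email": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "account_number": re.compile(r"\b\d{8,12}\b"),
}


def decompose(text: str) -> list[str]:
    """Stage 1: split a record into sentence-like chunks."""
    return [chunk for chunk in re.split(r"(?<=[.!?])\s+", text.strip()) if chunk]


def sanitize_chunk(chunk: str) -> str:
    """Stage 2: transform each detected span into a category placeholder."""
    for category, pattern in DETECTORS.items():
        chunk = pattern.sub(f"[{category.upper()}]", chunk)
    return chunk


def sanitize(text: str) -> str:
    """Stage 3: recompose the sanitized chunks into one clean output."""
    return " ".join(sanitize_chunk(chunk) for chunk in decompose(text))


print(sanitize("Contact jane@example.com today. Wire funds to 1234567890."))
# Contact [EMAIL] today. Wire funds to [ACCOUNT_NUMBER].
```

Keeping the stages separate means the detector in stage 2 could be swapped for a smaller distilled model without touching the decomposition or recomposition steps.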

Why Privacy-Preserving AI Datasets Like Privasis Are Getting More Attention

The timing of this release is not accidental. As AI systems push deeper into healthcare, finance, and law, the pressure to handle confidential data responsibly has intensified sharply.

Tools that enable secure handling of confidential data are becoming a critical component of scalable AI deployment.

Concerns about exposing models to sensitive inputs have been growing, fueled by incidents such as AI-powered malware hitting blockchain developers and by broader regulatory scrutiny of how enterprises manage data flows inside AI pipelines.

NVIDIA Privasis Dataset: Key Features at a Glance

- Million-scale synthetic dataset covering medical, financial, and legal document types

- Approximately 54 million annotated privacy attributes for fine-grained sensitive data detection

- Decomposition-based sanitization pipeline for converting raw records into clean outputs

- Distillation techniques built in to maintain model efficiency at scale

- Publicly available on Hugging Face and free of real personal data

All of this lands in a broader context where major infrastructure players are racing to make AI deployment safer and more enterprise-ready. Initiatives such as the push by NVIDIA and Microsoft to expand AI into new energy and compute sectors underscore just how much capital is flowing into the space - and why tools like Privasis, which address the compliance and security side of that expansion, are increasingly seen as foundational rather than optional.

Peter Smith
