⬤ Researchers from Meta's Fundamental AI Research (FAIR) group and New York University shared new insights into native multimodal pretraining, exploring how AI systems can move beyond language-only training toward unified models that integrate visual perception, generation, and language reasoning within a single architecture. The scope of AI's expanding role is also visible in scientific research, where AI systems can screen 10 trillion drug-protein combinations in just 24 hours.

⬤ At the core of the study are Representation Autoencoders (RAEs), designed to unify visual understanding and generative capabilities. RAEs allow models to interpret visual inputs while simultaneously generating outputs, effectively bridging the gap between perception and generation. The research also highlights that vision and language datasets are complementary, meaning models trained across both modalities build stronger, more general representations.

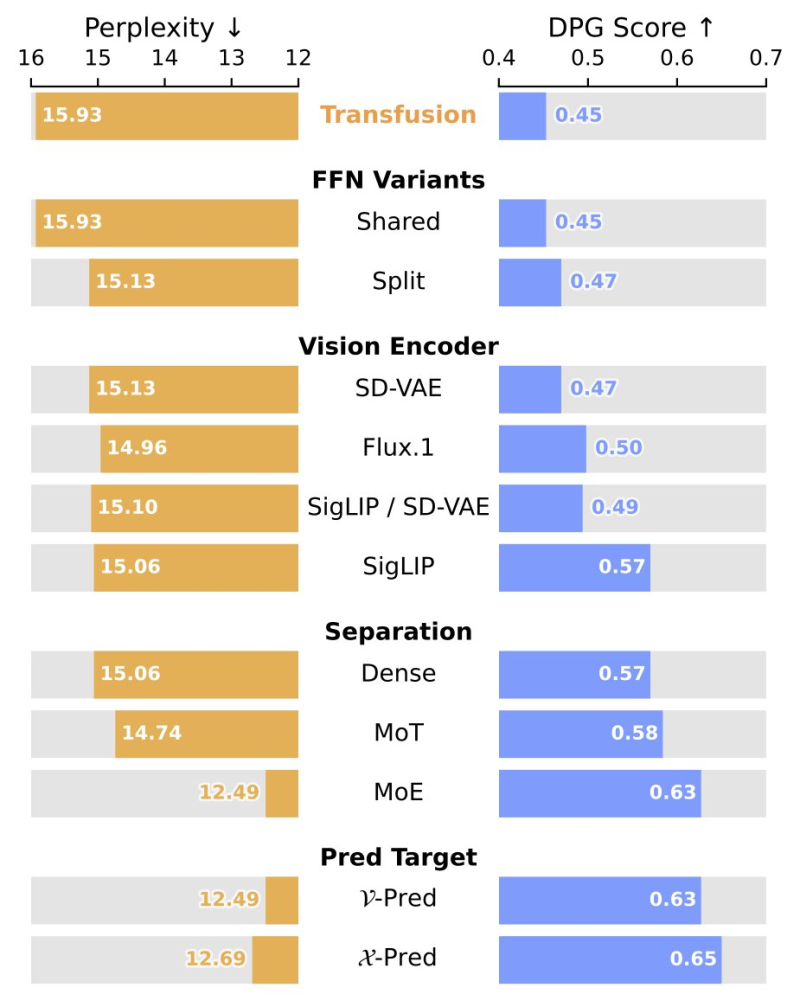

⬤ Visual benchmarks in the research show how architectural choices directly shape performance. Experiments compare feed-forward variants (Shared and Split), vision encoders including SD-VAE, Flux.1, and SigLIP, and separation strategies such as Dense, MoT, and MoE. Among these, the MoE (Mixture-of-Experts) configuration delivers the strongest results on both perplexity and DPG metrics, while the choice of prediction target (v-Pred versus x-Pred) produces further performance differences. Parallel industry progress is reflected in ByteDance's Doubao 2.0 Pro launching its expert-mode AI model.
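For readers unfamiliar with the Mixture-of-Experts idea mentioned above, the following is a minimal sketch of top-k expert routing in plain Python. It is an illustration only, not the architecture used in the research: the class name `ToyMoE`, the sizes, the random weights, and the use of single linear maps as "experts" are all assumptions made for the example.

```python
import math
import random


def softmax(scores):
    """Convert raw router scores into probabilities that sum to 1."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def linear(weights, x):
    """Multiply a (rows x len(x)) weight matrix by the vector x."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in weights]


def make_matrix(rows, cols, rng):
    """Random weights; a trained model would learn these instead."""
    return [[rng.uniform(-1, 1) for _ in range(cols)] for _ in range(rows)]


class ToyMoE:
    """Hypothetical toy layer: a router picks top_k experts per token."""

    def __init__(self, dim, num_experts, top_k, seed=0):
        rng = random.Random(seed)
        self.top_k = top_k
        self.router = make_matrix(num_experts, dim, rng)
        # Assumption: each expert is one linear map (real experts are usually MLPs).
        self.experts = [make_matrix(dim, dim, rng) for _ in range(num_experts)]

    def route(self, token):
        """Return (expert_index, gate_weight) pairs for the top_k experts."""
        probs = softmax(linear(self.router, token))
        ranked = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)
        chosen = ranked[: self.top_k]
        norm = sum(probs[i] for i in chosen)
        # Renormalize so the selected gate weights sum to 1.
        return [(i, probs[i] / norm) for i in chosen]

    def forward(self, token):
        """Gate-weighted sum of the selected experts' outputs."""
        out = [0.0] * len(token)
        for idx, gate in self.route(token):
            expert_out = linear(self.experts[idx], token)
            out = [o + gate * e for o, e in zip(out, expert_out)]
        return out


moe = ToyMoE(dim=4, num_experts=3, top_k=2)
token = [0.5, -1.0, 0.25, 2.0]
routes = moe.route(token)
output = moe.forward(token)
print("selected experts and gates:", routes)
print("layer output:", output)
```

The point of the sketch is that only `top_k` of the experts run for any given token, so total parameter count can grow without a matching increase in per-token compute. A dense layer, by contrast, would apply the same weights to every token.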

⬤ The findings suggest that unified multimodal pretraining could enable models to develop early-stage world modeling abilities, allowing them to reason across visual and textual environments more effectively. By pairing RAEs with scalable architectures like MoE, the research maps a credible path toward more general multimodal AI. As capabilities grow, broader questions around system behavior and governance become more urgent, themes also explored in the AI behavior concerns raised after the Economics of AGI research.

Eseandre Mordi
