For years, the gap between open-source and closed commercial AI felt almost structural — something baked into the economics of compute and data. GLM-5 is making a strong argument that gap is closing. Developed by Zhipu AI and Tsinghua University, this 744 billion parameter open-weights model doesn't just compete on paper. It's posting numbers that put it in the same conversation as GPT-5 and Claude Opus 4.5, without the paywall.

First Open Model to Score 50 on the Intelligence Index v4.0

GLM-5 uses a mixture-of-experts architecture with roughly 40 billion parameters active at runtime, supports 200,000 token context windows, and is built around long-horizon autonomous task execution — planning, coding, testing, debugging, and iterating without human hand-holding. That's a meaningful shift from what most open models have been able to do reliably.

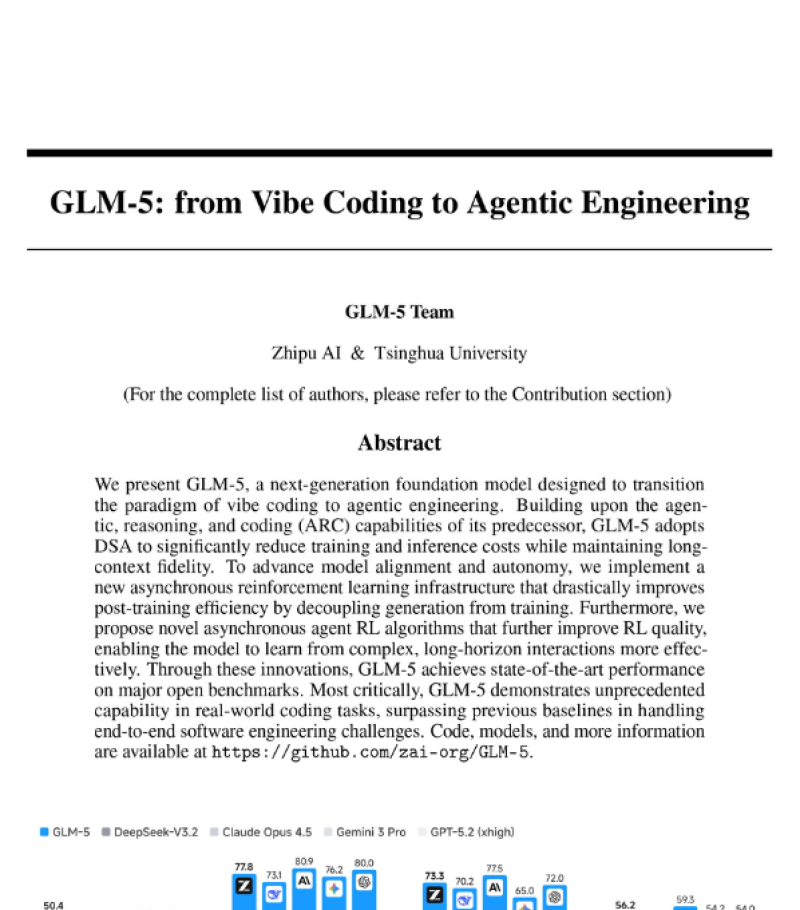

On benchmarks, the results are hard to dismiss. GLM-5 is the first open model to hit a score of 50 on the Artificial Analysis Intelligence Index v4.0. On LMArena, which uses human comparative judgment across thousands of real interactions, GLM-5 cracked the Top 10 with over 6,000 user votes and ranks as the top open model in both text and coding. That puts it alongside Gemini 3 Pro and Claude Opus 4.5 in categories where open models rarely show up near the top.

Trained on 28.5 Trillion Tokens With Sparse Attention for Long Contexts

The training process behind GLM-5 is as interesting as the results. The model was trained on an estimated 28.5 trillion tokens using a staged asynchronous reinforcement learning framework that decouples inference from training — cutting down idle GPU time and making the pipeline more efficient at scale. Sparse Attention mechanisms reduce redundant computation in long sequences, which matters when you're regularly handling 200K-token contexts.

In coding-specific evaluations, the model trades blows with proprietary giants. GLM-5 scored 73.64 in coding on LiveBench, outperforming GPT-5.1 Codex in that category. At the same time, it trails by 18.6 points on a real-world AI coding benchmark, a reminder that benchmark diversity still reveals meaningful gaps depending on task type.

What makes GLM-5 worth watching isn't just the scores — it's the combination of open weights, flexible hardware compatibility, and genuine autonomous capability. Open-source AI has often meant trade-offs. GLM-5 is narrowing how many trade-offs you have to accept.

Marina Lyubimova

Marina Lyubimova

Marina Lyubimova

Marina Lyubimova