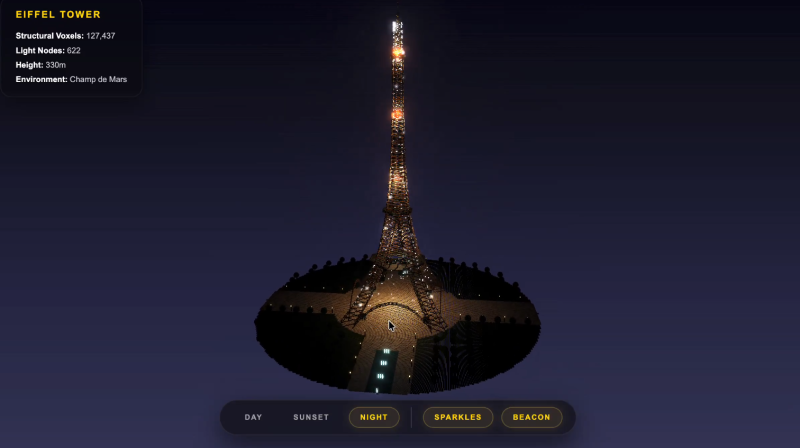

⬤ Google's Gemini 3.1 Pro High turned heads recently when it generated a strikingly detailed voxel art rendering of the Eiffel Tower. For many, it was the best visual output they'd seen from the model. The excitement didn't last long - users quickly ran into timeouts, reasoning errors, and an overall clunky experience that made it hard to actually enjoy what the model could do.

⬤ The issues weren't minor. Gemini's app reasoning performance was described as subpar, with persistent timeouts hitting features like "antigravity" and the AI Studio app-building section buckling under real-world load. Repeated failures made it genuinely difficult to tap into the model's generative strengths, including its advanced image handling and context generation.

⬤ This gap between capability and reliability isn't unique to Gemini, but the numbers tell an interesting story. Gemini 3.1 Pro topped key intelligence benchmarks while using 56M tokens, versus up to 130M for rivals - strong numbers on paper. Meanwhile, Gemini Deep Think hit 90 on the proof reasoning benchmark with Aletheia, showing the backend is clearly doing something right. The problem is that those backend gains aren't translating cleanly into the frontend experience.

⬤ For Gemini 3.1 Pro High to compete seriously with Nvidia-backed models and Microsoft's enterprise platforms, Google will need to close this gap. Users building creative projects or development workflows aren't chasing benchmark scores - they need tools that hold up under pressure. Right now, the model's ceiling is impressive, but the floor still needs work.

Marina Lyubimova
