⬤ Researchers from Northwestern, Stanford, the University of Washington, and Cornell have introduced the "Theory of Space," a framework that explains how AI agents build spatial understanding from partial observations. Rather than assuming full scene data, the framework examines how models actively explore environments and construct internal cognitive maps, step by step, to represent their surroundings. The implications reach well beyond robotics, touching any AI system that has to reason about space.

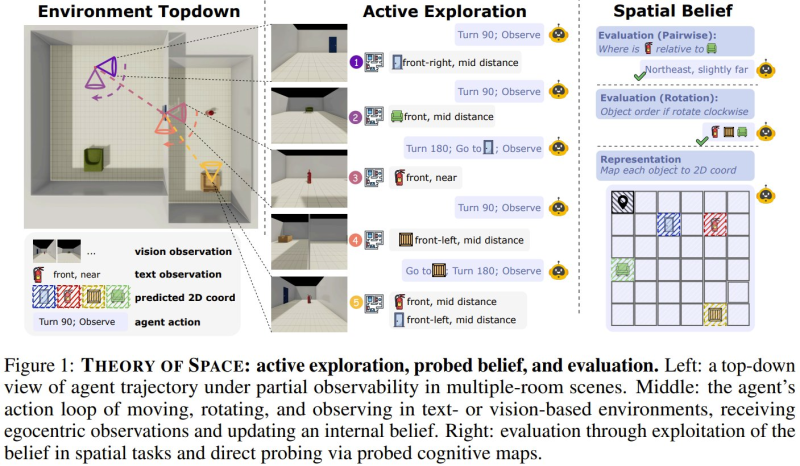

⬤ The framework describes an active exploration loop in which an agent moves, rotates, and observes its environment to refine its spatial belief over time. Vision-based inputs and textual reasoning work together to estimate object positions and relationships. This enables tasks like identifying relative positions, tracking object order under rotation, and assembling 2D spatial layouts from fragmented data, all without access to a complete map. It is the same challenge that makes multi-agent AI systems with specialized roles outperform single-model architectures when persistent spatial context is required.
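The exploration loop can be pictured with a toy example. The sketch below is an illustration only, not the researchers' implementation: the `WORLD` layout, the 90-degree field of view, and the function names `observe`, `update_belief`, and `explore` are all assumptions made for this example. An agent standing at the origin rotates in place, sees only what falls inside its field of view, and writes each sighting into a 2D belief map.

```python
import math

# Hypothetical ground-truth layout: object name -> (x, y).
# The agent never reads this directly; it only sees what observe() returns.
WORLD = {"chair": (2, 0), "lamp": (0, 3), "table": (-2, 0)}


def observe(pose, world, fov_deg=90.0, max_range=5.0):
    """Return visible objects as (distance, bearing in degrees relative to heading)."""
    x, y, heading = pose
    seen = {}
    for name, (ox, oy) in world.items():
        dx, dy = ox - x, oy - y
        dist = math.hypot(dx, dy)
        # Wrap the relative bearing into [-180, 180).
        bearing = (math.degrees(math.atan2(dy, dx)) - heading + 180) % 360 - 180
        if dist <= max_range and abs(bearing) <= fov_deg / 2:
            seen[name] = (dist, bearing)
    return seen


def update_belief(belief, pose, seen):
    """Convert each egocentric sighting into map coordinates and store it."""
    x, y, heading = pose
    for name, (dist, bearing) in seen.items():
        ang = math.radians(heading + bearing)
        belief[name] = (
            round(x + dist * math.cos(ang), 6),
            round(y + dist * math.sin(ang), 6),
        )
    return belief


def explore(world, headings=(0, 90, 180, 270)):
    """Rotate in place at the origin, observing once per heading."""
    belief = {}
    for h in headings:
        pose = (0.0, 0.0, float(h))
        belief = update_belief(belief, pose, observe(pose, world))
    return belief


if __name__ == "__main__":
    print(explore(WORLD, headings=(0,)))  # one glance: a partial map
    print(explore(WORLD))                 # full rotation: a fuller map
```

With a single heading the belief map holds only the object directly ahead; the layout fills in as the agent keeps turning, which is the "partial observations to cognitive map" idea in miniature.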

⬤ The study identifies three core limitations holding current AI back. The "Active-Passive Gap" shows that models perform far better when information is handed to them than when they have to discover it through exploration. The researchers also found inefficient search strategies and a problem they call "Belief Inertia," in which models hold onto outdated internal representations even after receiving new, contradicting observations. These failures are related to the long-context reasoning challenges that Claude Opus 4.6 tackled when it generated a 10,000-line video editing application as a benchmark for sustained structured reasoning.
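One way to build intuition for Belief Inertia is a toy update rule in which the agent only partly trusts new evidence. This is an illustrative assumption, not how the study measures the effect: `trust_in_new`, `update_position`, and `steps_to_converge` are hypothetical names introduced here.

```python
def update_position(believed, observed, trust_in_new=1.0):
    """Move the believed position toward the observed one.

    trust_in_new = 1.0 adopts the new observation outright;
    values near 0.0 mimic an agent that clings to its old belief.
    """
    return tuple(b + trust_in_new * (o - b) for b, o in zip(believed, observed))


def steps_to_converge(start, target, trust_in_new, tol=0.1, max_steps=100):
    """Count repeated observations needed before the belief is within tol of target."""
    belief = start
    for step in range(1, max_steps + 1):
        belief = update_position(belief, target, trust_in_new)
        if max(abs(b - t) for b, t in zip(belief, target)) <= tol:
            return step
    return None  # the belief never caught up within max_steps


if __name__ == "__main__":
    # The object was believed to be at (0, 0) but is now observed at (4, 0).
    print(steps_to_converge((0.0, 0.0), (4.0, 0.0), trust_in_new=1.0))
    print(steps_to_converge((0.0, 0.0), (4.0, 0.0), trust_in_new=0.2))
    print(steps_to_converge((0.0, 0.0), (4.0, 0.0), trust_in_new=0.0))
```

An agent that fully trusts the new observation corrects its map in one step, a low-trust agent needs many repeated contradicting observations, and an agent with zero trust never updates at all.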

⬤ These findings land at a moment when the industry is racing to close reasoning and memory gaps across agent architectures. The belief persistence problem identified by the Theory of Space is the kind of issue that IBM's new agent memory system targets, with a reported 149% boost in task success rates. Together, spatial reasoning, memory, and coordination are converging as the defining challenges for the next generation of AI agents.

Peter Smith
