⬤ Researchers from Peking University, CUHK, StepFun, Hong Kong Polytechnic University, and Microsoft Research Asia have introduced GENIUS - the Generative Fluid Intelligence Evaluation Suite. Unlike most benchmarks that test knowledge recall, GENIUS measures whether AI can infer patterns, follow abstract rules, and adapt to unfamiliar scenarios using only contextual information. This matters because generative AI adoption is tracking an S-curve trajectory, and the gap between recall-based and reasoning-based performance is becoming harder to ignore.

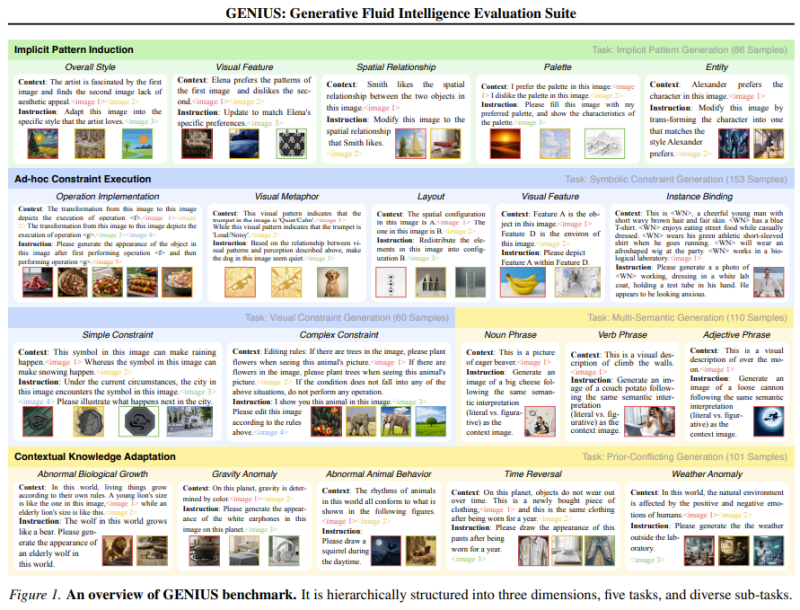

⬤ The framework organizes evaluation across several reasoning dimensions: implicit pattern induction, ad-hoc constraint execution, and contextual knowledge adaptation. Models must identify hidden visual patterns, apply symbolic rules, and adjust outputs when context shifts mid-task. These challenges go well beyond pattern matching - they probe the kind of flexible reasoning that AI behavior research has flagged as a core reliability concern as systems move toward more autonomous operation.

⬤ Across 12 representative multimodal models tested, results showed consistent performance gaps. The primary weakness was limited context comprehension - models defaulted to recall rather than reasoning when faced with shifting rules. These findings mirror challenges being tackled in agent architecture research, including 4-layer memory infrastructure work aimed at making next-gen AI systems more adaptable.

⬤ GENIUS signals a broader shift in how the field thinks about evaluation. As multimodal models push toward general-purpose intelligence, static knowledge tests are becoming less useful. Benchmarks built around contextual reasoning, rule induction, and adaptive problem-solving are likely to play a larger role in shaping what gets built - and what gets deployed.

Saad Ullah

Saad Ullah

Saad Ullah

Saad Ullah