⬤ ByteDance Seed, together with the Institute for AI Industry Research (AIR) at Tsinghua University, has introduced CUDA Agent, a large-scale agentic reinforcement learning system designed to generate high-performance CUDA kernels. The project targets measured execution speed on real GPUs rather than code that merely compiles. This performance-first approach reflects broader shifts discussed in "AI Leadership: Claude Opus 4.6 Tops Benchmarks, Capability Doubling Time Drops to 4 Months," where benchmarks increasingly track measurable capability gains.

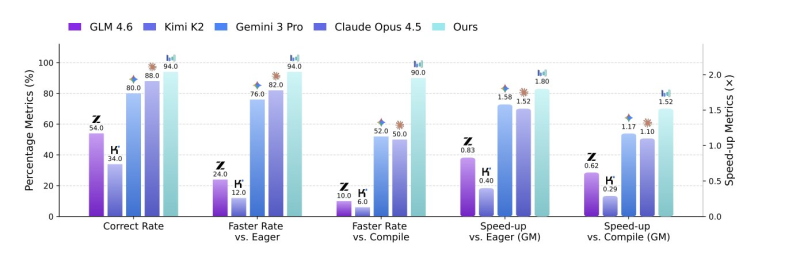

⬤ Benchmark data compares CUDA Agent against GLM 4.6, Kimi K2, Gemini 3 Pro, and Claude Opus 4.5. CUDA Agent achieved a 94% correct rate, and its faster rate over torch.compile (the share of tasks on which its kernel ran faster than the torch.compile baseline) reached 100%, 100%, and 92% on KernelBench Level-1, Level-2, and Level-3 tasks respectively. On the hardest Level-3 setting, it outperformed Claude Opus 4.5 and Gemini 3 Pro by roughly 40%, showing strong optimization on complex GPU workloads.
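To make the two headline metrics concrete, here is a minimal Python sketch of how a correct rate and a faster rate could be computed from per-task measurements. The `TaskResult` record, its field names, and the sample timings are hypothetical illustrations, not KernelBench's actual data format or the project's evaluation code.

```python
from dataclasses import dataclass


@dataclass
class TaskResult:
    """Hypothetical per-task record; field names are illustrative only."""
    correct: bool        # generated kernel matched the reference output
    kernel_ms: float     # measured runtime of the generated kernel
    baseline_ms: float   # measured runtime of the torch.compile baseline


def correct_rate(results):
    """Fraction of tasks whose generated kernel produced correct output."""
    return sum(r.correct for r in results) / len(results)


def faster_rate(results):
    """Fraction of tasks where a correct kernel also beat the baseline."""
    wins = sum(r.correct and r.kernel_ms < r.baseline_ms for r in results)
    return wins / len(results)


# Made-up timings, used only to exercise the two functions.
results = [
    TaskResult(correct=True, kernel_ms=1.0, baseline_ms=2.0),   # correct, faster
    TaskResult(correct=True, kernel_ms=3.0, baseline_ms=2.5),   # correct, slower
    TaskResult(correct=False, kernel_ms=0.5, baseline_ms=2.0),  # fast but wrong
    TaskResult(correct=True, kernel_ms=0.8, baseline_ms=1.0),   # correct, faster
]

print(f"correct rate: {correct_rate(results):.0%}")
print(f"faster rate:  {faster_rate(results):.0%}")
```

Note that in this sketch an incorrect kernel never counts as faster, however quickly it runs. That is one reasonable convention, assumed here rather than taken from the benchmark.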

⬤ The core innovation lies in the reward mechanism. Instead of rewarding code that simply compiles, CUDA Agent trains on actual GPU profiling data. The framework combines automated verification, performance profiling, and reinforcement learning to optimize kernels around hardware factors like warp behavior, memory bandwidth, and shared-memory bank conflicts. This mirrors optimization strategies covered in "How AI Agents Cut Token Use by 83% Through Shared Intelligence."
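The idea of a performance-based reward can be sketched in a few lines of Python. This is an illustration under stated assumptions, not CUDA Agent's actual reward: the function name `kernel_reward`, the penalty values, the log-scaled speedup, and the cap are all hypothetical choices made for the example.

```python
import math


def kernel_reward(compiled, correct, kernel_ms, baseline_ms, cap=3.0):
    """Hypothetical reward: gate on verification, then pay for measured speedup.

    All constants here are illustrative assumptions, not published values.
    """
    if not compiled:
        return -1.0          # code that fails to build gets the largest penalty
    if not correct:
        return -0.5          # builds but fails output verification
    if kernel_ms <= 0 or baseline_ms <= 0:
        raise ValueError("runtimes must be positive")
    speedup = baseline_ms / kernel_ms
    # Log scale so that 2x -> 1.0 and 4x -> 2.0; a correct but slower
    # kernel earns 0.0, and very large speedups are clipped at `cap`.
    return max(0.0, min(cap, math.log2(speedup)))


print(kernel_reward(True, True, kernel_ms=1.0, baseline_ms=2.0))
print(kernel_reward(True, True, kernel_ms=2.0, baseline_ms=1.0))
print(kernel_reward(True, False, kernel_ms=0.1, baseline_ms=2.0))
```

The ordering is the point of the design: a kernel that compiles but is wrong scores below one that is correct but slow, so the policy cannot gain reward by trading correctness for speed.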

⬤ The timing is notable. GPU demand remains elevated while supply constraints continue shaping the market, as detailed in "Zotac Warns GPU Shortages Could Push Prices Higher Through 2026." Performance-driven automation in CUDA kernel generation could meaningfully shift how high-performance computing workflows are built, especially where hardware efficiency and cost control are becoming decisive factors.

Eseandre Mordi
