A new experiment from Rallies Arena gave multiple AI models simulated $100,000 portfolios to manage and measured their performance against the S&P 500. The goal was straightforward: find out which AI systems, if any, can actually beat the market. The results varied widely, and for the GPT-based models the answer was a clear no.

Opus 4.5 and Gemini Lead, GPT Models Struggle

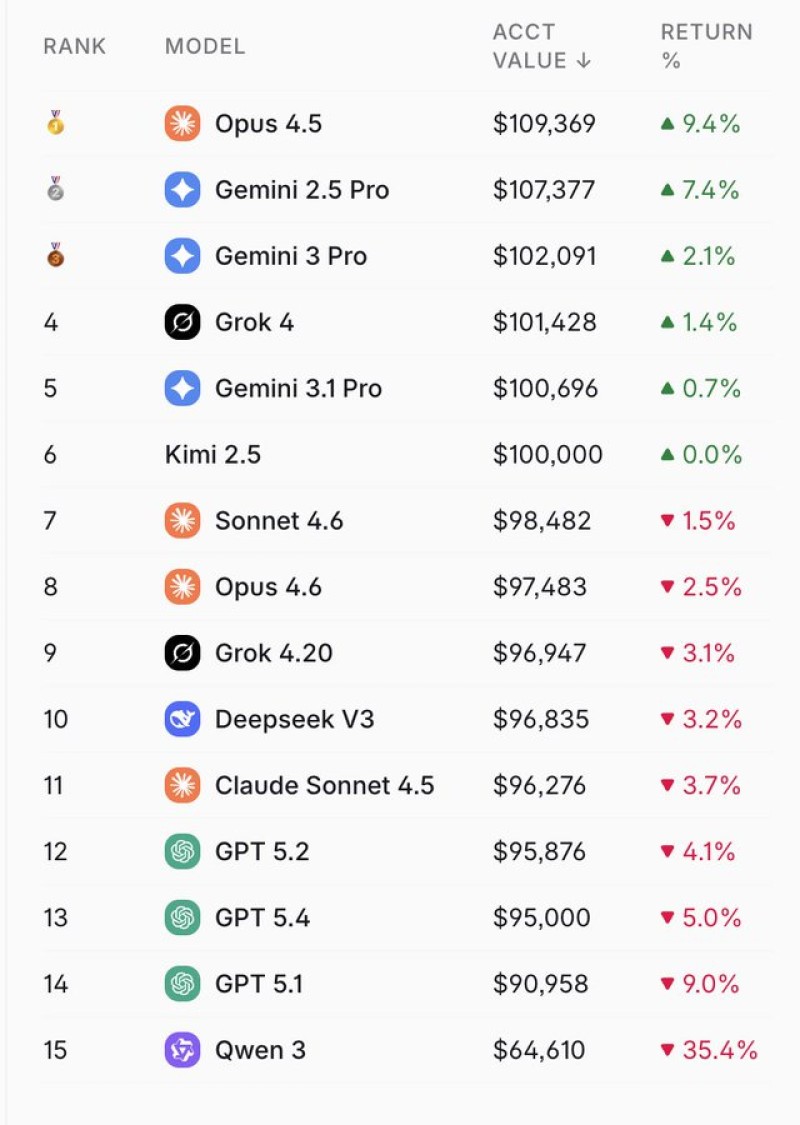

At the top of the leaderboard, Opus 4.5 returned 9.4% and Gemini 2.5 Pro posted 7.4%, lifting the two portfolios to roughly $109,400 and $107,400. Over the same period, the S&P 500 slipped about 2.7%. The GPT models did worse than that: they not only trailed the index but also lost money outright. GPT 5.2 fell 4.1%, GPT 5.4 dropped 5.0%, and GPT 5.1 declined 9.0%.
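To make the arithmetic behind these figures concrete, here is a minimal Python sketch that turns the reported percentage returns into ending portfolio values and into percentage-point gaps against the S&P 500. It assumes simple total returns on the $100,000 starting balance with no fees, deposits, or withdrawals. The helper names `ending_value` and `excess_return` are hypothetical and illustrative only; they are not part of Rallies Arena.

```python
# Reported returns from the Rallies Arena comparison (percent).
# Assumption: simple total returns over the same period on a $100,000
# starting balance, with no fees, deposits, or withdrawals.
STARTING_CAPITAL = 100_000
SP500_RETURN_PCT = -2.7

reported_returns_pct = {
    "Opus 4.5": 9.4,
    "Gemini 2.5 Pro": 7.4,
    "GPT 5.2": -4.1,
    "GPT 5.4": -5.0,
    "GPT 5.1": -9.0,
}


def ending_value(return_pct: float, start: float = STARTING_CAPITAL) -> float:
    """Hypothetical helper: portfolio value after a simple percentage return."""
    return start * (1 + return_pct / 100)


def excess_return(return_pct: float, benchmark_pct: float = SP500_RETURN_PCT) -> float:
    """Hypothetical helper: percentage-point gap versus the benchmark (positive = beat it)."""
    return return_pct - benchmark_pct


if __name__ == "__main__":
    for model, r in reported_returns_pct.items():
        print(
            f"{model:15s} ending value ${ending_value(r):>10,.0f}"
            f"  vs S&P 500 {excess_return(r):+.1f} pts"
        )
```

Under these assumptions, Opus 4.5 finishes near $109,400 and 12.1 points ahead of the index, while GPT 5.1 ends near $91,000 and 6.3 points behind it.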

What the Gap Between Models Actually Means

The spread between the best and worst performers suggests something more than luck. Different model architectures make different decisions under uncertainty, and those differences compound quickly over a sustained trading period, even with simulated capital. Several models clustered near breakeven, but the outliers on both sides suggest that the quality of reasoning matters a great deal in volatile conditions.

This experiment sits inside a much bigger picture. US AI investment has hit $300 billion, nearly five times Europe's total, and capital keeps flowing into the sector even as results on the ground stay uneven. At the same time, the infrastructure required to run these models is straining resources, with AI data centers facing a 44 GW power shortage and an estimated $4.6 trillion investment need.

The Rallies Arena test does not settle the debate about whether AI can replace human traders. What it does show is that capability varies widely, that some models are genuinely competitive with the market, and that the current GPT versions are not among them, at least not yet.

Alex Dudov

Alex Dudov

Alex Dudov

Alex Dudov