ModelScope's latest release is shaking up the open-source AI landscape. The company just dropped Step-3.5 Flash, a massive language model that is now freely available to developers and researchers worldwide. What makes this release particularly interesting is its combination of raw performance and accessibility: the model ships with its full weights and with the training framework needed to fine-tune it for specific use cases.

Sparse Architecture Delivers Peak Speeds of 350 Tokens per Second

The technical specifications behind Step-3.5 Flash reveal a careful balance between capacity and efficiency. The model packs 196 billion total parameters, but only about 11 billion of them activate for each token during inference. This sparse mixture-of-experts (MoE) design uses 288 routed experts plus one shared expert, and a router selects the top 8 routed experts for every token. Because per-token compute scales with the active parameters rather than the total, inference costs stay closer to those of an 11-billion-parameter dense model, even though all 196 billion parameters still have to be held in memory.
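To make the routing concrete, here is a toy sketch of top-k expert selection with a shared expert, written in plain Python. Only the counts (288 routed experts, top-8 per token, one shared expert) come from the article; the tiny hidden size, the scaling-only experts built by the hypothetical `make_expert`, and the softmax-over-selected-experts gating are illustrative assumptions, not the Step-3.5 Flash implementation.

```python
import math
import random

NUM_ROUTED_EXPERTS = 288  # routed experts, per the article
TOP_K = 8                 # routed experts activated per token, per the article
HIDDEN = 4                # toy hidden size (hypothetical, for illustration only)


def make_expert(seed):
    """Hypothetical toy expert: scales each input dimension by a fixed random weight."""
    rng = random.Random(seed)
    weights = [rng.uniform(-1.0, 1.0) for _ in range(HIDDEN)]
    return lambda x: [w * v for w, v in zip(weights, x)]


def softmax(values):
    """Numerically stable softmax over a list of floats."""
    m = max(values)
    exps = [math.exp(v - m) for v in values]
    total = sum(exps)
    return [e / total for e in exps]


def moe_forward(x, router_logits, routed_experts, shared_expert, k=TOP_K):
    """Combine the shared expert with the top-k routed experts for one token.

    Returns the output vector and the indices of the routed experts that ran.
    """
    # Pick the k routed experts with the highest router scores for this token.
    top = sorted(range(len(router_logits)), key=lambda i: router_logits[i], reverse=True)[:k]
    # Renormalize gate weights over the selected experts only (an assumed gating scheme).
    gates = softmax([router_logits[i] for i in top])
    # The shared expert always runs; only k of the routed experts are evaluated.
    out = shared_expert(x)
    for g, i in zip(gates, top):
        y = routed_experts[i](x)
        out = [o + g * v for o, v in zip(out, y)]
    return out, top


routed = [make_expert(s) for s in range(NUM_ROUTED_EXPERTS)]
shared = make_expert(10_000)
rng = random.Random(0)
token = [rng.gauss(0, 1) for _ in range(HIDDEN)]
logits = [rng.gauss(0, 1) for _ in range(NUM_ROUTED_EXPERTS)]

output, chosen = moe_forward(token, logits, routed, shared)
print(len(chosen), "of", NUM_ROUTED_EXPERTS, "routed experts used")
print(f"active share of parameters ~ {11 / 196:.1%}")
```

Only 8 of the 288 routed expert functions are called for the token, plus the shared expert, which is why the active parameter count (about 11B, roughly 5.6% of 196B) is so much smaller than the total. Production MoE layers also add load-balancing mechanisms, which this sketch leaves out.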

Speed is where this model really shines. Using a multi-token prediction method called MTP-3, Step-3.5 Flash generates multiple tokens in a single forward pass, typically producing between 100 and 300 tokens per second, with peaks of around 350 tokens per second depending on the hardware. The model also supports a 256K-token context window and uses hybrid sliding-window attention to keep long sequences efficient.
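Sliding-window attention is commonly implemented as a banded causal mask: each query position attends only to the most recent `window` positions instead of the whole prefix. The article does not give Step-3.5 Flash's window size or how it interleaves sliding-window and full-attention layers, so the 4096-token window, the hypothetical `hybrid_layer_pattern` layout, and reading 256K as 256 × 1024 tokens below are all assumptions. The sketch only shows why the technique cuts cost at long context.

```python
def sliding_window_mask(seq_len, window):
    """Causal banded mask: query i may attend to key j iff i - window < j <= i."""
    return [[i - window < j <= i for j in range(seq_len)] for i in range(seq_len)]


def attended_pairs(seq_len, window=None):
    """Count scored (query, key) pairs; window=None means full causal attention."""
    if window is None or window >= seq_len:
        return seq_len * (seq_len + 1) // 2
    # The first `window` queries see 1..window keys; every later query sees exactly `window`.
    return window * (window + 1) // 2 + (seq_len - window) * window


def hybrid_layer_pattern(num_layers, full_every):
    """Hypothetical layout: every `full_every`-th layer uses full attention, the rest sliding-window."""
    return ["full" if (layer + 1) % full_every == 0 else "sliding" for layer in range(num_layers)]


# Visualize a small mask: 'x' = attended, '.' = masked out.
for row in sliding_window_mask(6, 3):
    print("".join("x" if m else "." for m in row))

n = 256 * 1024  # 256K-token context (assumes K = 1024)
w = 4096        # hypothetical window size
full = attended_pairs(n)
sliding = attended_pairs(n, w)
print(f"sliding/full pairs: {sliding / full:.3f}")
print(hybrid_layer_pattern(8, 4))
```

With these assumed numbers, a sliding-window layer scores roughly 3% of the query-key pairs that a full causal layer would at 256K tokens. A hybrid design keeps some full-attention layers so that information from distant tokens can still reach later positions.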

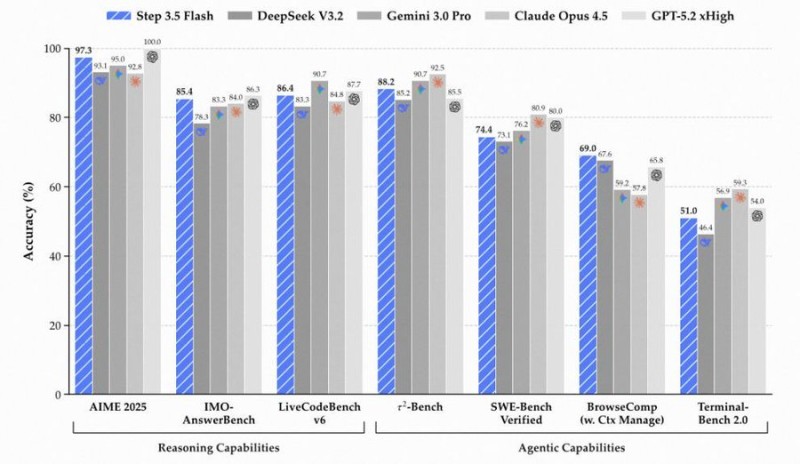

Benchmark Results Show Competitive Coding Capabilities

The numbers tell an impressive story. Step-3.5 Flash scored 74.4% on SWE-bench Verified and 51.0% on Terminal-Bench 2.0, placing it alongside other heavyweight models built for software engineering tasks. The comparison chart released with the model shows it holding its own against DeepSeek V3.2, Gemini 3.0 Pro, Claude Opus 4.5, and GPT-5.2 xHigh across benchmarks including AIME 2025, IMO-AnswerBench, LiveCodeBench v6, and τ²-Bench.

Released under the permissive Apache 2.0 license, Step-3.5 Flash represents another milestone in the rapidly evolving open-source AI ecosystem. The inclusion of the complete SteptronOSS training framework for supervised fine-tuning, continued pretraining, and reinforcement learning workflows means developers can actually adapt this model to their specific needs rather than just using it as-is. As open-source models continue to close the performance gap with proprietary systems, the competitive dynamics in AI development are shifting fast.

Peter Smith
