Large language models are getting smarter, not through bigger datasets or more parameters, but through better reasoning strategies. Atom of Thought (AoT), a novel prompting method, is reported to outperform the traditional Chain of Thought (CoT) approach across major AI models, with accuracy improvements of 35-42% on complex tasks. This shift suggests that how we structure AI reasoning matters as much as the underlying model architecture itself.

What Makes Atom of Thought Different From Chain of Thought

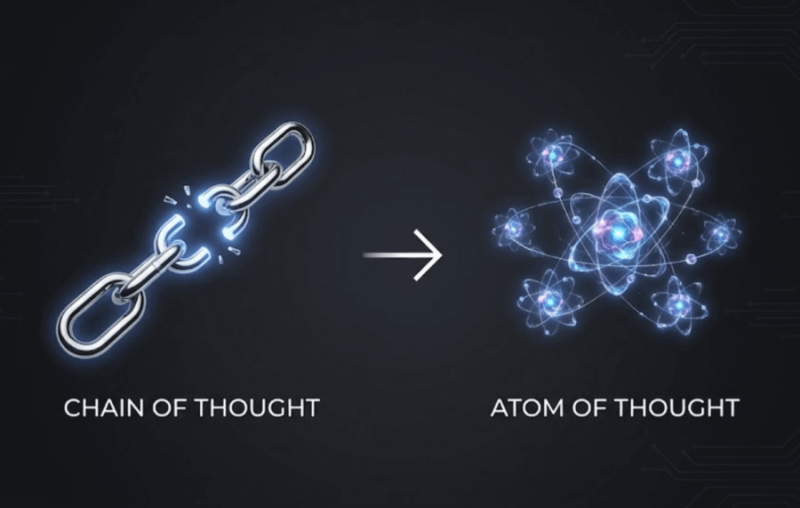

Chain of Thought prompting has long guided models through problems step by step in a linear sequence (Step 1, Step 2, Step 3), like a student showing their work. Atom of Thought takes a different approach: it breaks a problem into independent reasoning units that are validated separately before being combined. Because each unit is checked on its own, an error in one segment doesn't compromise the entire solution.
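The decompose, validate, combine idea can be illustrated outside of any model. The following is a minimal sketch, not the AoT authors' implementation: the `Atom` class, the `run_atoms` helper, and the rectangle example are all hypothetical names chosen for illustration. It shows how a failed check on one unit is isolated while the other units remain usable.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Atom:
    """One independent reasoning unit (hypothetical structure for illustration)."""
    name: str
    compute: Callable[[], float]        # produces this unit's result on its own
    is_valid: Callable[[float], bool]   # check applied to this unit only


def run_atoms(atoms):
    """Evaluate each atom separately; return (validated results, failed names)."""
    results, failed = {}, []
    for atom in atoms:
        value = atom.compute()
        if atom.is_valid(value):
            results[atom.name] = value
        else:
            # The failure is recorded, but other atoms are unaffected.
            failed.append(atom.name)
    return results, failed


# Three independent facts about a 4 x 5 rectangle.
atoms = [
    Atom("area", lambda: 4 * 5, lambda v: v > 0),
    Atom("perimeter", lambda: 2 * (4 + 5), lambda v: v > 0),
    # Deliberately wrong (sign error) so that validation rejects it.
    Atom("diagonal_squared", lambda: -(4**2 + 5**2), lambda v: v > 0),
]

results, failed = run_atoms(atoms)
print(results)  # validated units only
print(failed)   # units that need to be redone
```

In a linear chain, the sign error would flow into every later step; here only the one unit is flagged for rework before the final answer is assembled.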

The simplicity of the AoT prompt stands out: "Decompose this problem into independent logical components. Validate each one. Then synthesize your final answer." This structural change alone is reported to produce remarkable results: roughly 35% accuracy gains on math benchmarks, 42% improvements on complex logic tasks, and 40% better bug detection in code reasoning. These gains were reportedly observed across multiple AI architectures without any model retraining.
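As a rough illustration of how the quoted instruction could be attached to a task, here is a small sketch. The instruction text is taken verbatim from above; the `build_aot_prompt` function name, the `Problem:` label, and the sample question are assumptions made for this example, and no particular model API is implied.

```python
# Instruction text quoted from the article.
AOT_INSTRUCTION = (
    "Decompose this problem into independent logical components. "
    "Validate each one. Then synthesize your final answer."
)


def build_aot_prompt(problem: str) -> str:
    """Prefix a problem statement with the AoT instruction (hypothetical helper)."""
    problem = problem.strip()
    if not problem:
        raise ValueError("problem must be non-empty")
    # The "Problem:" label is an illustrative choice, not part of the method.
    return f"{AOT_INSTRUCTION}\n\nProblem: {problem}"


prompt = build_aot_prompt("  Is 221 a prime number?  ")
print(prompt)
```

The resulting string can be sent to whichever model is being evaluated; since the method changes only the prompt, no retraining step is involved.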

35-42% Accuracy Improvements Signal Industry Shift

The reported benchmark results challenge assumptions about what drives model performance. As one AI researcher noted, "Model performance can be more heavily influenced by reasoning architecture than by parameter size or training data alone." This observation aligns with broader industry trends toward structural optimization.

Recent advances support this pattern. Mistral AI's new speech model reportedly beats GPT-4o Mini, posting a 4% error rate and demonstrating that efficiency innovations can outperform larger models. Similarly, Qwen-LongL15 handles more than 4 million tokens and matches GPT-5 on long-context tests, showing how structural design reshapes capabilities.

Atom of Thought matters because it reduces error propagation in high-stakes applications like math reasoning, logic problem solving, and software code analysis. By isolating and validating reasoning components independently, models become more reliable without requiring expensive retraining cycles. This shift toward reasoning architecture over raw computational power may set new standards for how developers approach AI optimization in production environments.

Usman Salis
