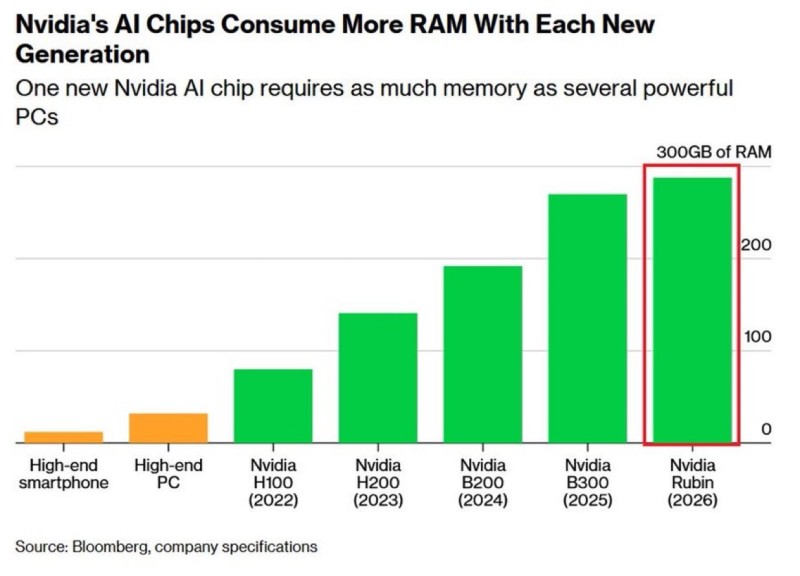

⬤ Nvidia is drawing attention across the semiconductor and AI infrastructure sectors as new data highlights the rapid growth in memory requirements for its AI accelerators. The upcoming Rubin chip may require roughly 300GB of memory, nearly four times the 80GB used by the H100 GPU introduced in 2022. Each new generation of Nvidia AI hardware demands significantly more memory capacity as artificial intelligence workloads continue to expand.

⬤ The progression of Nvidia's AI chips shows a clear upward trajectory in memory requirements. Starting from the H100's 80GB, subsequent generations including the H200, B200, and B300 have steadily pushed memory capacity higher, with the projected Rubin architecture potentially reaching around 300GB. By comparison, high-end smartphones and consumer PCs typically operate with only a fraction of the memory used in modern data center accelerators. Expected around 2026, Rubin is designed specifically for large-scale AI training and inference and is set to rely on next-generation HBM4 memory technology.

Memory capacity is becoming a central component of system performance and the single biggest constraint on where AI infrastructure goes next.

⬤ The rapid increase in memory capacity reflects the evolving demands of large language models and advanced AI reasoning systems. Training and running modern AI models requires massive datasets, long context windows, and extremely high data throughput. As hyperscale cloud providers deploy thousands of Nvidia GPUs across AI data centers, demand for DRAM and high-bandwidth memory continues to rise. This infrastructure expansion is reshaping the broader AI ecosystem, including large-scale initiatives like xAI Grok Code, which targets training at the scale of 1M H100s and highlights the enormous compute clusters needed for next-generation AI development.

⬤ The growing memory requirements of Nvidia hardware mark a structural shift in the global semiconductor landscape. Memory supply is increasingly discussed as a potential constraint on AI infrastructure expansion, especially as large data centers scale rapidly. Related developments, including Nvidia's new AI model for self-driving car reasoning and projections that AI data centers will outpace PJM grid capacity every year through 2030, underscore how AI compute is reshaping both the semiconductor market and global energy demand at an accelerating pace.

Victoria Bazir
