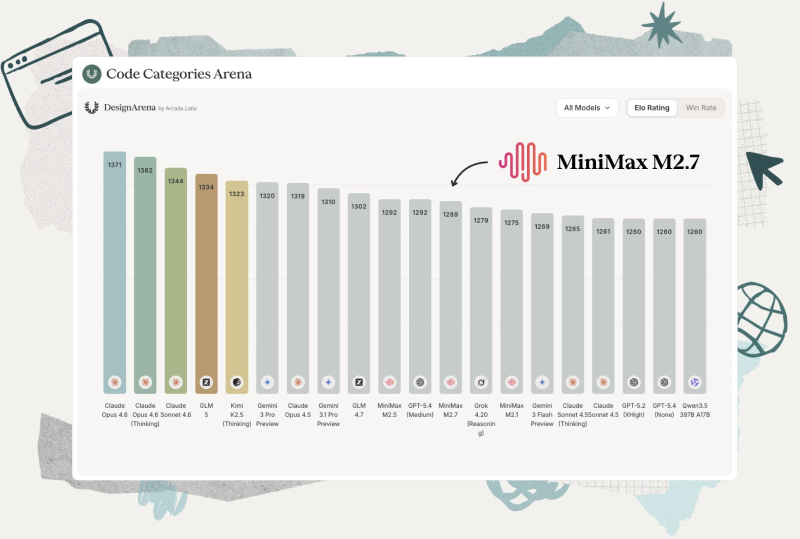

MiniMax has released its latest model, MiniMax M2.7, landing at position #12 on the Design Arena leaderboard. The debut places it in a competitive mid-tier cluster alongside several other capable systems, with an Elo score just below the 1,300+ threshold held by the current top performers. The ranking reflects progress in design-related tasks, though it signals iteration rather than a breakout shift in the broader landscape.

25+ Elo Gains in Game Development and 3D Design

MiniMax M2.7 hits 56.2% on SWE-bench. According to leaderboard data, the new model gains over 25 Elo points in Game Development and more than 15 in 3D Design compared to M2.5. These are targeted improvements in specialized categories rather than a sweeping overall climb. Because models in this score range are densely grouped, even small gains matter, but they rarely produce dramatic leaderboard jumps.
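To put those Elo gains in perspective, here is a minimal sketch of the standard Elo expected-score formula. It assumes Design Arena uses the conventional 400-point logistic scale, which the leaderboard data cited here does not confirm; the function name `expected_win_rate` is purely illustrative.

```python
def expected_win_rate(elo_gap: float, scale: float = 400.0) -> float:
    """Expected head-to-head score for the higher-rated model.

    Uses the standard Elo logistic formula. The 400-point scale is an
    assumption, not something Design Arena is confirmed to use.
    """
    return 1.0 / (1.0 + 10.0 ** (-elo_gap / scale))


if __name__ == "__main__":
    # Gains reported for M2.7 over M2.5, per category.
    for category, gap in [("Game Development", 25), ("3D Design", 15)]:
        rate = expected_win_rate(gap)
        print(f"{category}: +{gap} Elo -> {rate:.1%} expected win rate")
```

Under that assumption, a 25-point edge works out to roughly a 53.6% expected win rate in a head-to-head comparison, and a 15-point edge to about 52.2%. That is a real but modest advantage, which is consistent with gains of this size not producing large jumps in a tightly packed ranking.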

Performance improvements do not always translate into major ranking changes, underscoring the tight margins between models in current benchmark ecosystems.

Where M2.7 Fits in the Evolving MiniMax Family

The release continues a steady pattern of version-to-version refinement. The open-source MiniMax M2.5 challenged Claude Opus at 95% lower cost, setting a high bar on efficiency, while MiniMax M2.1 climbed into the top 5 open-weight AI models, showing the family's ability to compete at the upper tier. M2.7 fits into this arc as a focused upgrade, building on domain-specific strengths while the overall competitive field remains tight.

The Design Arena results reinforce a broader truth in current AI benchmarking: the gap between mid-tier models is narrowing, and meaningful differentiation increasingly comes from category-specific depth rather than general score dominance.

Eseandre Mordi
