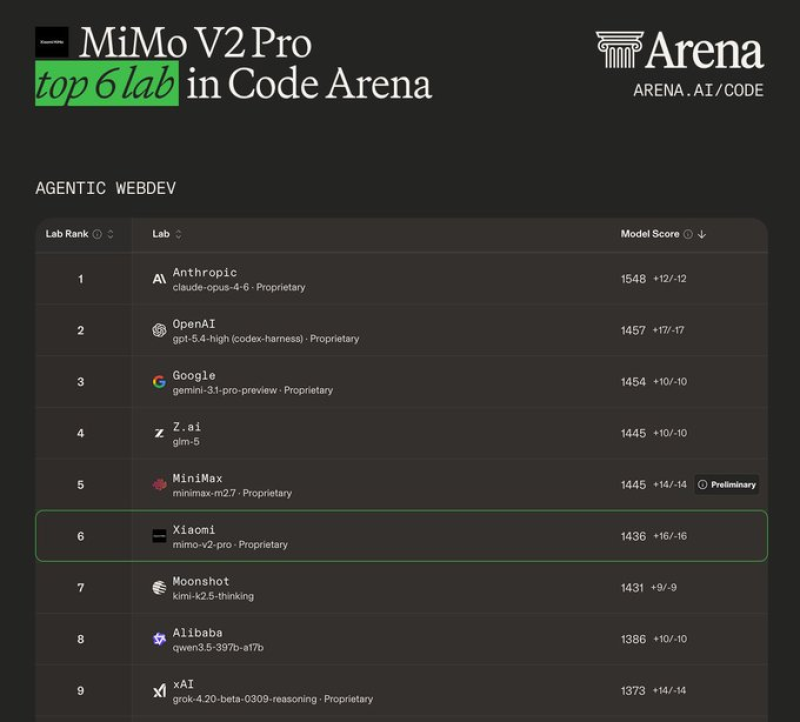

⬤ MiMo V2 Pro has landed among the top 6 labs in Code Arena for agentic web development tasks, posting a score of 1436. The leaderboard puts it at #6, behind systems from Anthropic, OpenAI, Google, Z.ai, and MiniMax. The model also secured a top 10 finish in Arena Expert evaluations, pointing to solid performance across a wider range of task categories.

⬤ The competitive gap between top models is narrow but telling. Anthropic's Claude leads at 1548, with OpenAI and Google both clearing 1450. MiMo V2 Pro at 1436 sits comfortably ahead of entries from Moonshot, Alibaba, and xAI. The result tracks with Xiaomi's wider push into AI infrastructure as the company begins building out an ecosystem around the model.

MiMo V2 Pro ranked in the top 6 for Code Arena, top 10 for Arena Expert, and top 20 across multiple occupational categories.

⬤ The results go beyond code. MiMo V2 Pro placed inside the top 20 across Life, Physical, and Social Sciences as well as Business, Management, and Financial Operations. These rankings signal real-world applicability well beyond engineering tasks. Comparable momentum is building elsewhere in the sector, with Grok 4.20 beta also climbing the Search Arena rankings.

⬤ Benchmark positions are increasingly treated as a hard measure of progress in the industry. MiMo V2 Pro's entry into the top 6 reflects how quickly the competitive picture is shifting, with newer models challenging proprietary leaders across both specialized and general-purpose evaluations.

Eseandre Mordi
