Meta has introduced SAM 3.1, a drop-in update to its Segment Anything Model that targets faster multi-object video segmentation. AI at Meta announced the release, highlighting a core new feature called object multiplexing that lets the system handle multiple segmentation targets simultaneously without the accuracy trade-offs you might expect. The update arrives as the broader AI industry shifts its focus toward efficiency - a trend also visible in IBM's agent memory system boosting task success by 149%.

SAM 3.1 benchmark results: 3x faster at 16 objects on H100

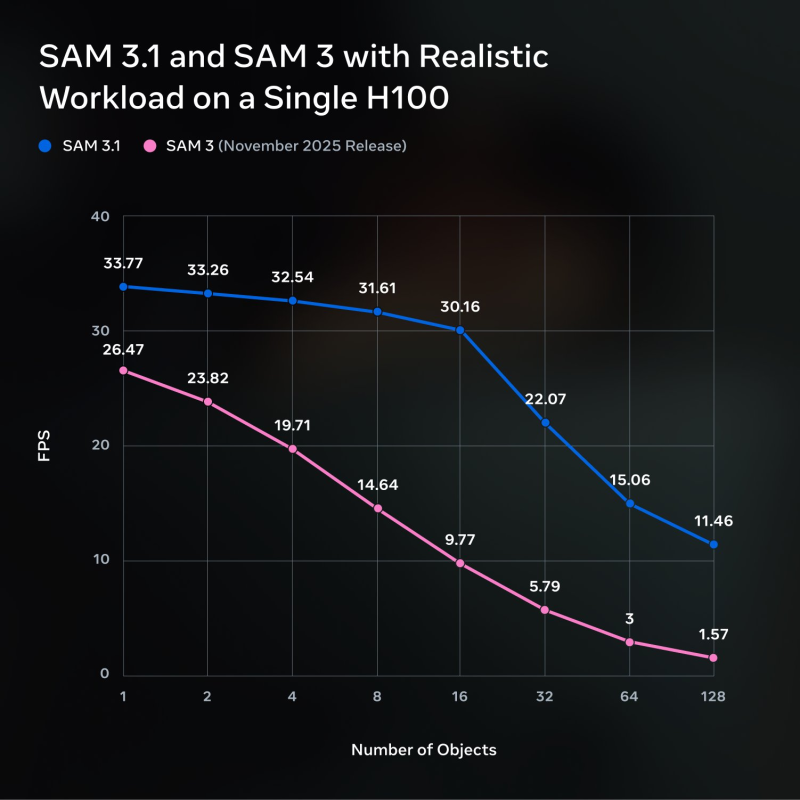

The benchmark numbers tell a clear story. On a single H100 GPU under realistic workloads, SAM 3.1 runs at 33.77 FPS with a single object, versus 26.47 FPS for SAM 3, a gain of about 28%. The gap widens sharply as the object count grows.

At 128 simultaneous objects, SAM 3 drops to 1.57 FPS while SAM 3.1 holds at 11.46 FPS, roughly 7 times the throughput of its predecessor under the same conditions. That is not a marginal gain - it is the kind of leap that makes production deployment at scale viable.
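To make the arithmetic behind these figures explicit, here is a minimal Python sketch that derives the speedup and the per-frame time from the FPS numbers quoted above. The dictionary layout and the helper names (`speedup`, `frame_time_ms`) are illustrative choices, not part of any SAM tooling.

```python
# FPS figures quoted in this article (single H100).
# Keys are the number of simultaneous objects.
FPS = {
    1: {"sam3": 26.47, "sam3_1": 33.77},
    128: {"sam3": 1.57, "sam3_1": 11.46},
}


def speedup(num_objects: int) -> float:
    """Throughput of SAM 3.1 relative to SAM 3 at a given object count."""
    row = FPS[num_objects]
    return row["sam3_1"] / row["sam3"]


def frame_time_ms(fps: float) -> float:
    """Average time spent on one frame, in milliseconds."""
    return 1000.0 / fps


for n in sorted(FPS):
    row = FPS[n]
    print(
        f"{n:>3} objects: {speedup(n):.2f}x faster, "
        f"{frame_time_ms(row['sam3']):.0f} ms -> "
        f"{frame_time_ms(row['sam3_1']):.0f} ms per frame"
    )
```

Read as frame times, the 128-object case goes from roughly 637 ms per frame to roughly 87 ms, which is the difference between a slideshow and usable near-real-time output.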

This kind of scalability improvement is in line with other recent milestones, such as Helios 14B reaching 195 FPS on a single H100.

How object multiplexing boosts SAM 3.1 video efficiency

The engine behind this jump is object multiplexing. Rather than processing each segmentation target in sequence, SAM 3.1 groups them into a unified pipeline pass. The result is less redundant computation and significantly better GPU utilization under heavy loads.

- Single pipeline processes multiple segmentation targets simultaneously

- Reduced redundant computation per object at scale

- Higher GPU utilization without changing hardware requirements

- Drop-in compatibility with existing SAM 3 deployments
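The intuition behind "less redundant computation" can be shown with a toy cost model. The sketch below is not Meta's implementation and says nothing about SAM 3.1's internals. It assumes, purely for illustration, that each frame has some work that all objects could share and some work that is specific to each object, and it counts how often each kind runs. The names `CostCounter`, `sequential`, and `multiplexed` are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class CostCounter:
    shared_passes: int = 0  # hypothetical per-frame work that objects could share
    object_passes: int = 0  # hypothetical work that is specific to one object


def sequential(num_frames: int, num_objects: int) -> CostCounter:
    """One full pass per object per frame: shared work is repeated every time."""
    cost = CostCounter()
    for _ in range(num_frames):
        for _ in range(num_objects):
            cost.shared_passes += 1
            cost.object_passes += 1
    return cost


def multiplexed(num_frames: int, num_objects: int) -> CostCounter:
    """One grouped pass per frame: shared work runs once, then all objects."""
    cost = CostCounter()
    for _ in range(num_frames):
        cost.shared_passes += 1
        cost.object_passes += num_objects
    return cost


frames, objects = 10, 16
print("sequential: ", sequential(frames, objects))
print("multiplexed:", multiplexed(frames, objects))
```

Under these assumptions the per-object work is identical in both modes, while the shared work drops from one pass per object per frame to one pass per frame. That is why, in this toy model, the benefit of grouping grows with the number of objects.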

The improved efficiency reflects a broader pattern in AI infrastructure: performance gains increasingly come from smarter resource utilization rather than raw computational power.

What SAM 3.1 means for real-world video AI applications

What makes SAM 3.1 notable beyond the benchmark sheet is the direction it points to. The update demonstrates that architectural choices - not just more expensive hardware - can meaningfully expand what is practical for real-world video applications. This approach mirrors the logic behind FlashAttention-4's 2.7x speed gains and 71% GPU utilization on Nvidia Blackwell - smarter memory access and operation batching, not raw hardware upgrades.

As GPU costs remain a bottleneck for many teams, efficiency-first design like this is becoming one of the more consequential trends in applied AI.

Saad Ullah
