⬤ OpenAI has released GPT-5.4 mini and GPT-5.4 nano, two lower-cost variants of its flagship GPT-5.4 model, as reported by Artificial Analysis. Both models keep image input support and a 400K-token context window while costing significantly less than GPT-5.4. The launch comes as AAPL and other tech giants push deeper into AI integration, and alongside other milestones such as GPT-5.4 mini's score of 72.1 on OSWorld.

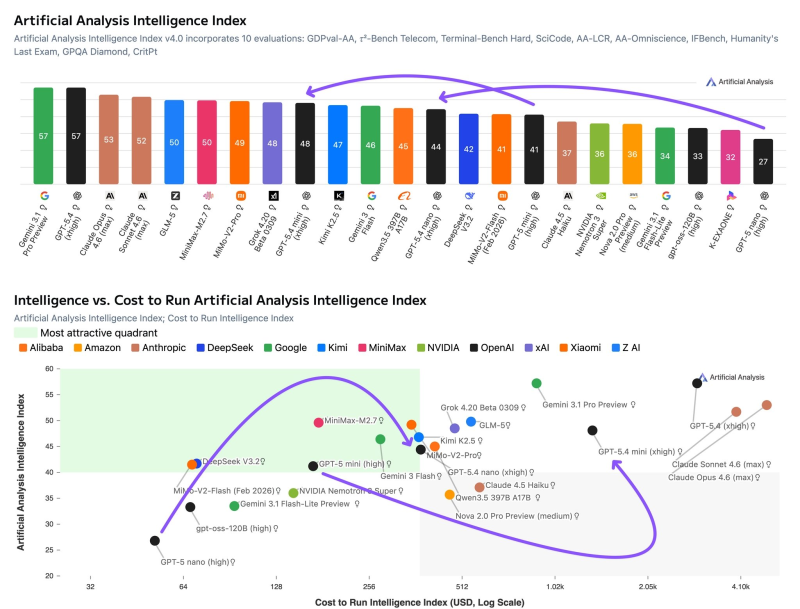

⬤ Benchmark results show strong reasoning gains, especially at the highest ("xhigh") reasoning setting. GPT-5.4 nano scored 44, up from GPT-5 nano's 27, outperforming lightweight rivals such as Claude Haiku 4.5 and Gemini 3.1 Flash-Lite Preview across tau-Bench, IFBench, and TerminalBench. GPT-5.4 mini reached 48, up from 41, and held its own in select tasks against larger models such as Gemini 3 Flash Preview and Claude Sonnet 4.6.

⬤ Both models still have limitations. Their hallucination rates are higher than those of some competitors, particularly on the AA-Omniscience benchmark, where a tendency to answer rather than abstain hurt their scores. Token usage is also elevated: GPT-5.4 mini consumed over 235 million output tokens during evaluation and nano more than 210 million, both well above competing models. This reflects a trade-off between reasoning depth and efficiency, a theme also seen in OpenAI's October GPT-5 safety update for long chats.

⬤ Cost-performance analysis still favors GPT-5.4 nano. Despite its heavier token usage, its lower price delivers a stronger intelligence-to-cost ratio than peers such as Claude Haiku 4.5 and Gemini 3.1 Flash-Lite Preview. As Google open-sources ADK for always-on AI agents and the broader market matures, these dynamics will likely shape how companies choose and deploy next-generation AI models in production.

Usman Salis