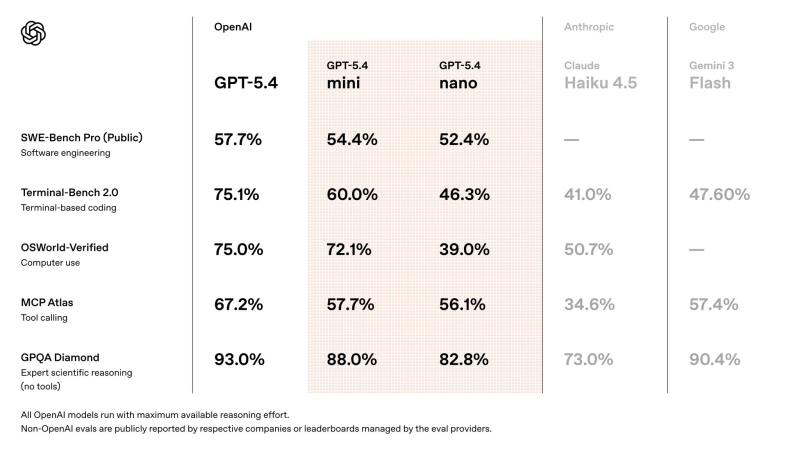

⬤ OpenAI's GPT-5.4 model family is out, and the mini variant is already making noise. Focused on speed and cost efficiency, GPT-5.4 mini delivers results that rival much heavier systems. It scores 54.4% on SWE-Bench Pro and 60.0% on Terminal-Bench 2.0, sitting just behind the flagship GPT-5.4. The release signals a wider shift: smaller models are becoming capable enough to handle serious workloads.

⬤ The competitive picture is telling. GPT-5.4 mini achieves 72.1% on OSWorld-Verified, clearly ahead of Anthropic's Claude Haiku 4.5 at 50.7%. On tool-calling benchmarks, it holds its ground with 57.7% on MCP Atlas. The full GPT-5.4, meanwhile, leads the field with 75.1% on Terminal-Bench 2.0 and 93.0% on GPQA Diamond, confirming its position as a top-tier reasoning model.

⬤ Even GPT-5.4 nano, the lightest model in the family, punches above its weight with 52.4% on SWE-Bench Pro and 82.8% on GPQA Diamond. The gap between compact and large models is narrowing fast. OpenAI's focus on resource-efficient architecture is paying off, with newer models designed to match larger systems while cutting computational costs.

⬤ The broader takeaway is a reshaping of the AI market. Efficiency-driven models are no longer just budget options; they are becoming serious contenders for real-world deployments. As GPT-5.4 mini's benchmark numbers show, performance and practicality are converging, accelerating competition among major AI providers and changing how teams integrate AI into their daily workflows.

Peter Smith
