⬤Nvidia has unveiled Nemotron-Cascade-2-30B-A3B, a new reasoning-focused AI model that achieves gold-medal-level performance at top-tier academic competitions: IMO 2025, IOI 2025, and the ICPC World Finals 2025. Those results place it among the most capable compact models available today.

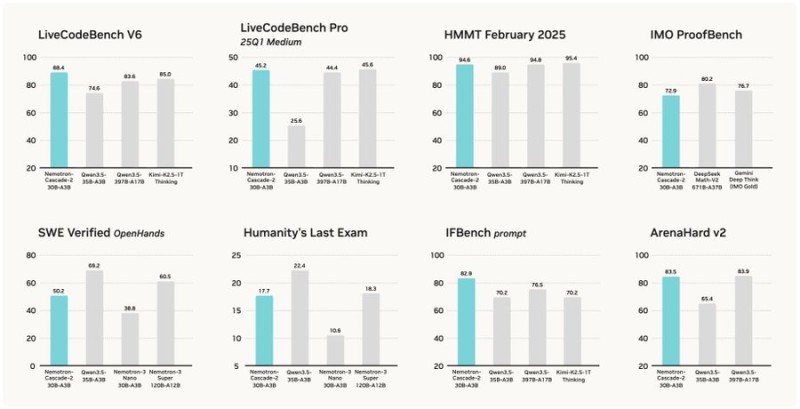

⬤The benchmark scores are hard to dismiss. Nemotron-Cascade-2 reaches 88.4 on LiveCodeBench v6 and 94.6 on HMMT February 2025, with competitive results on ArenaHard v2, IFBench, and SWE-bench Verified. The numbers show consistent strength in reasoning, coding, and instruction-following despite the model's relatively lean footprint.

⬤The architecture is a 30B-parameter Mixture-of-Experts (MoE) system with roughly 3B parameters active per token. Its post-training pipeline combines supervised fine-tuning, Cascade reinforcement learning, and multi-domain on-policy distillation. The result is a model that outperforms its predecessor and some larger rivals on key benchmarks while remaining computationally efficient.
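To make the "3B active out of 30B" idea concrete, here is a toy sketch of top-k expert routing in plain Python. The sizes (8-dimensional tokens, 16 experts, top-2 routing) and the helper names `matvec`, `rand_matrix`, and `moe_forward` are hypothetical illustrations, not Nemotron-Cascade-2's actual configuration, which the announcement does not detail. The point is only that a router scores every expert but runs just a few of them per token, so most expert weights sit idle on any given step.

```python
import math
import random

def matvec(v, m):
    # Multiply a length-d vector by a d x n matrix (list of rows); returns length n.
    return [sum(v[i] * m[i][j] for i in range(len(v))) for j in range(len(m[0]))]

def rand_matrix(rows, cols):
    # Small random weights for the toy example.
    return [[random.gauss(0, 0.1) for _ in range(cols)] for _ in range(rows)]

def moe_forward(x, gate_w, experts, k=2):
    # Router: one score per expert for this token.
    scores = matvec(x, gate_w)
    # Keep only the k highest-scoring experts.
    top = sorted(range(len(scores)), key=lambda e: scores[e], reverse=True)[:k]
    # Softmax over the selected experts' scores (max-subtracted for stability).
    m = max(scores[e] for e in top)
    exps = [math.exp(scores[e] - m) for e in top]
    total = sum(exps)
    out = [0.0] * len(x)
    for w, e in zip(exps, top):
        y = matvec(x, experts[e])  # only the chosen experts' weights are used
        for i in range(len(out)):
            out[i] += (w / total) * y[i]
    return out, top

# Hypothetical toy sizes, not Nemotron's real configuration.
random.seed(0)
d, num_experts, k = 8, 16, 2
gate_w = rand_matrix(d, num_experts)
experts = [rand_matrix(d, d) for _ in range(num_experts)]
x = [random.gauss(0, 1) for _ in range(d)]

out, used = moe_forward(x, gate_w, experts, k)
expert_params = num_experts * d * d  # all expert weights in the layer
active_params = k * d * d            # expert weights actually used for this token
print(used, active_params / expert_params)
```

In this toy, the active share of expert weights is k / num_experts, here 2/16. The headline ratio for Cascade-2 (about 3B of 30B, roughly 10%) reflects the same idea, though in a real MoE model the active-parameter count typically also includes layers every token passes through, such as attention and embeddings.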

⬤Context matters here. Nvidia's wider Nemotron lineup is gaining ground fast. Nemotron-3 Super delivers 48.4 tokens/sec with multi-token prediction, while Nemotron-3 Nano on Amazon Bedrock extends the family into enterprise cloud infrastructure. Cascade-2 is the newest and sharpest edge of that push.

⬤Benchmark leadership is increasingly how AI models stake their claim in the market. With Nemotron-Cascade-2, Nvidia is not just competing in performance tables but reinforcing its full-stack AI positioning. For investors watching NVDA, the move signals that Nvidia's model development arm is keeping pace with the broader industry push toward efficient, high-reasoning systems.

Peter Smith