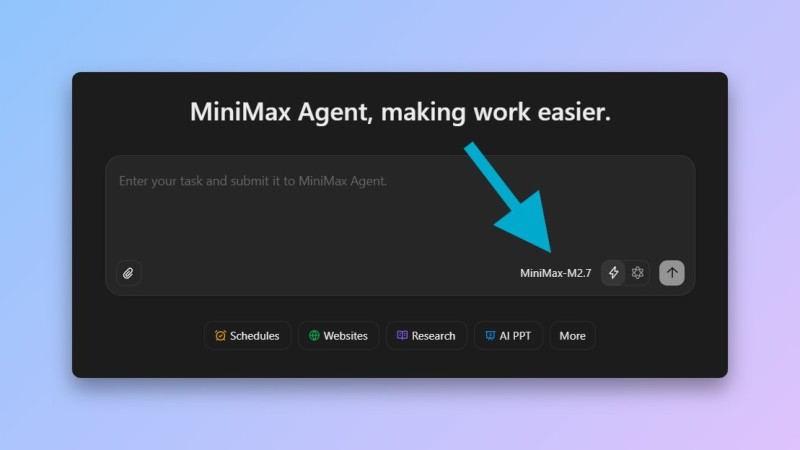

⬤ MiniMax has officially released its latest model, M2.7, adding new capabilities to its AI agent ecosystem. The company frames the launch as "the beginning of recursive self-improvement," signaling that it intends future iterations to refine themselves over time. The model is integrated directly into the MiniMax Agent environment, where users can run complex workflows and submit multi-step tasks through a unified interface.

⬤ M2.7 supports a context window of up to 200,000 tokens, putting it in the same range as top-tier models built for long-horizon reasoning. A dedicated "highspeed" variant is also available for faster inference and real-time execution. On the SWE-Pro benchmark, the model scored 56.22%, a result that points to meaningful progress on the coding and software engineering tasks that matter most for developer-focused deployments.

⬤ This release fits into a broader shift in the AI industry toward agent-based systems that handle research, automation, and coding inside a single interface. MiniMax has been scaling fast, and earlier releases already drew attention for competitive pricing and raw throughput. "MiniMax M2.5 Open Source Challenges Claude Opus at 95% Lower Cost and 3x Speed" showed how aggressively the company targets cost efficiency, while "MiniMax M2.5 Tops Token Chart With 3.07 Trillion Generated" confirmed the scale it has already reached.

⬤ With M2.7, the competition over context size, inference speed, and benchmark rankings only intensifies. As agent pipelines become the primary deployment model across industries, these technical metrics are no longer just marketing: they are the criteria developers use to choose tools. MiniMax is clearly positioning itself as a serious contender in that race.

Usman Salis