Self-improving AI has moved from theory to benchmark results. MiniMax has introduced M2.7, a model that iteratively improves its own performance without human intervention. Tested across 22 machine learning competitions, it posted numbers that rival some of the most capable systems available today.

How M2.7 Teaches Itself: The Self-Optimization Loop

The architecture behind M2.7 is built around three components working in a continuous cycle: short-term memory, self-feedback, and self-optimization. After each iteration, the model writes internal evaluations and stores the resulting insights, which then shape the next training round. The experiments ran under constrained hardware conditions, on a single NVIDIA A30 GPU, and covered a broad range of machine learning workflows. The approach builds on strong results from earlier MiniMax models: MiniMax M2.5 scored 80.2% on SWE-Bench Verified, establishing the company as a serious player in agent-based AI development.
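MiniMax has not published code for this loop, so the following is only a toy sketch of the memory, feedback, and optimization cycle described above. It swaps a real training round for a simple hill-climbing step on one scalar parameter; the class `SelfOptimizingLoop`, the `Insight` record, and all method names are hypothetical illustrations, not MiniMax's API.

```python
from collections import deque
from dataclasses import dataclass, field
from typing import Callable, Deque


@dataclass
class Insight:
    """Hypothetical record of one iteration's self-evaluation."""
    params: float
    score: float
    note: str


@dataclass
class SelfOptimizingLoop:
    """Toy memory -> feedback -> optimization cycle (illustrative only)."""
    objective: Callable[[float], float]
    params: float = 0.0
    step: float = 1.0
    memory_size: int = 5
    memory: Deque[Insight] = field(init=False)
    best_score: float = field(init=False)

    def __post_init__(self) -> None:
        # Short-term memory: a bounded buffer that keeps only recent insights.
        self.memory = deque(maxlen=self.memory_size)
        self.best_score = self.objective(self.params)

    def self_feedback(self, candidate: float) -> Insight:
        # Evaluate the candidate and write an internal note about the result.
        score = self.objective(candidate)
        note = "improved" if score > self.best_score else "regressed"
        return Insight(candidate, score, note)

    def self_optimize(self) -> None:
        # Read the latest stored insight to shape the next round: accept an
        # improvement, otherwise reverse direction and halve the step size.
        last = self.memory[-1]
        if last.note == "improved":
            self.params, self.best_score = last.params, last.score
        else:
            self.step *= -0.5

    def run(self, iterations: int) -> float:
        for _ in range(iterations):
            insight = self.self_feedback(self.params + self.step)
            self.memory.append(insight)  # store the insight
            self.self_optimize()         # let it shape the next iteration
        return self.params


loop = SelfOptimizingLoop(objective=lambda x: -(x - 3.0) ** 2)
best = loop.run(iterations=20)
print(best, loop.best_score)
```

Starting from 0.0, the toy loop climbs to the objective's peak at 3.0 and then keeps probing with ever-smaller steps. The bounded `deque` stands in for short-term memory: older insights are dropped, so only recent evaluations influence the next adjustment.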

9 Gold Medals, 66.6% Average Rate: M2.7 Performance by the Numbers

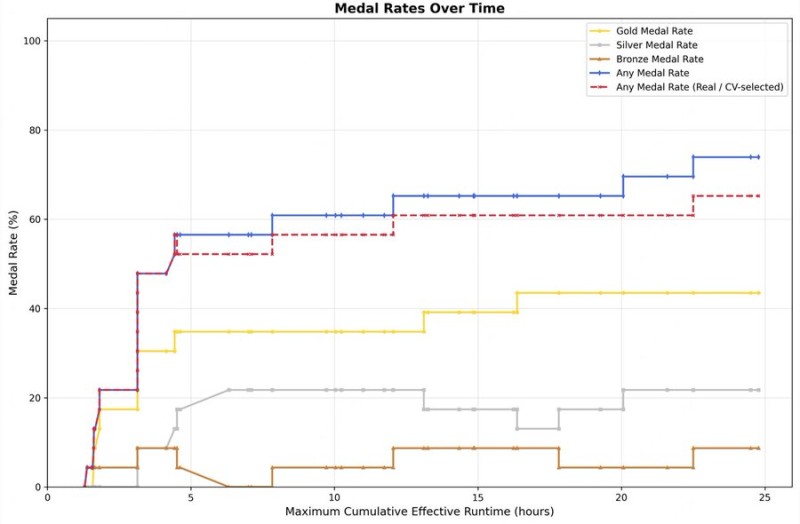

The results across three runs tell a consistent story of improvement over time. The best single run produced 9 gold medals, 5 silver medals, and 1 bronze medal. The average medal rate across all runs settled at 66.6%, placing M2.7 close to GPT-5.4 and competitive with Gemini-3.1. Performance charts show a clear upward trajectory as runtime extends, suggesting the model can keep optimizing itself through repeated cycles rather than plateauing early. This trajectory mirrors the efficiency gains already demonstrated in the broader MiniMax lineup: MiniMax M2.5 challenged Claude Opus at 95% lower cost and 3x the speed, a result that reframed what competitive AI performance actually costs.
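As a quick sanity check on these figures, the snippet below computes the best run's medal rate. It assumes that "medal rate" means the share of the 22 competitions in which the model earned any medal, and that each competition yields at most one medal; the article does not define the metric, so treat `medal_rate` as a hypothetical helper.

```python
COMPETITIONS = 22  # number of ML competitions reported for M2.7


def medal_rate(gold: int, silver: int, bronze: int,
               competitions: int = COMPETITIONS) -> float:
    """Fraction of competitions that earned a medal (assumed definition).

    Assumes each competition yields at most one medal, so the medal
    count equals the number of medal-winning competitions.
    """
    medals = gold + silver + bronze
    if medals > competitions:
        raise ValueError("more medals than competitions")
    return medals / competitions


best_run = medal_rate(gold=9, silver=5, bronze=1)
print(f"Best run medal rate: {best_run:.1%}")  # Best run medal rate: 68.2%
```

Under that assumption, the best run's 15 medals give about 68.2%, slightly above the 66.6% three-run average, which is consistent with it being the strongest of the three runs.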

The broader implication of M2.7 is a shift in how training pipelines are designed. Data construction, evaluation, and optimization are now tasks the model handles independently, pointing toward autonomous systems that manage their own development cycles. As efficiency and cost become primary competitive factors across the AI landscape, self-evolving architectures like M2.7 represent a structural change in how capable models get built, not just improved.

Peter Smith
