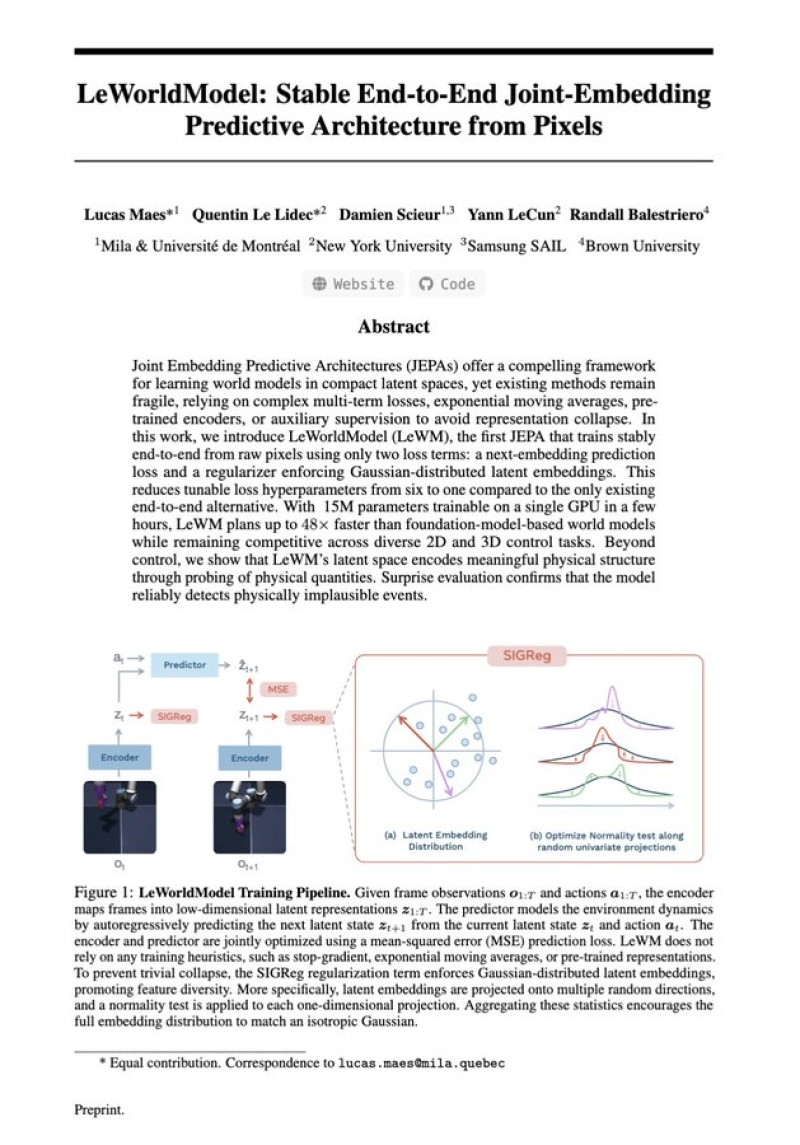

⬤Yann LeCun's team introduced LeWorldModel, a compact end-to-end joint-embedding predictive architecture (JEPA) trained directly from pixels. Traditional JEPA systems rely on several stabilization techniques to train reliably. This model drops those heuristics and learns from raw visual inputs with no auxiliary components.

⬤At roughly 15 million parameters, LeWorldModel is lean by modern standards. It avoids the exponential moving averages, auxiliary losses, and pre-trained encoders that most comparable architectures depend on for stability. LeCun has warned against overestimating LLM intelligence, and as alternative architectures gain ground, this model fits that argument directly.

⬤The headline result is a reported 48x speedup over comparable approaches. A new regularization method enforces structured latent embeddings and prevents representation collapse, the failure mode in which every input maps to the same embedding, without extra tricks. This cuts computational overhead substantially and points toward a more scalable path in AI development.
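To make the idea of a collapse-preventing regularizer concrete, here is a minimal sketch. The article does not specify the form of LeWorldModel's regularizer, so the penalty below is a stand-in: simple moment matching that pushes a batch of embeddings toward zero mean and identity covariance. The function names, the `weight` parameter, and the batch shapes are all hypothetical.

```python
import numpy as np

def prediction_loss(pred_next, target_next):
    """Mean squared error between predicted and actual next-step embeddings."""
    return float(np.mean((pred_next - target_next) ** 2))

def collapse_penalty(z):
    """Illustrative stand-in regularizer (NOT the method from the article).

    Returns zero when the batch of embeddings z (shape: batch x dim) has
    zero mean and identity covariance, and grows as the batch collapses
    toward a single point.
    """
    mean = z.mean(axis=0)
    centered = z - mean
    cov = centered.T @ centered / (z.shape[0] - 1)
    eye = np.eye(z.shape[1])
    return float(np.sum(mean ** 2) + np.sum((cov - eye) ** 2))

def total_loss(pred_next, target_next, z, weight=1.0):
    """Prediction term plus weighted regularizer; `weight` is a hypothetical knob."""
    return prediction_loss(pred_next, target_next) + weight * collapse_penalty(z)

# Compare a well-spread batch with a fully collapsed one.
rng = np.random.default_rng(0)
spread = rng.standard_normal((512, 8))   # embeddings with healthy variance
collapsed = np.full((512, 8), 0.5)       # every input maps to the same point

penalty_spread = collapse_penalty(spread)
penalty_collapsed = collapse_penalty(collapsed)
print(penalty_spread < penalty_collapsed)
```

A collapsed encoder can trivially minimize the prediction term, since identical embeddings are perfectly predictable. The penalty makes that solution expensive, which is the role the article attributes to the new regularization method.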

⬤LeWorldModel reflects LeCun's long-standing push for systems that model and predict real-world dynamics rather than scaling text-based reasoning. Alongside the debate captured in "LeCun slams humanoid AI hype, calls for world models," this work signals that efficiency and architectural clarity may shape the next phase of AI progress more than raw parameter count.

Usman Salis
