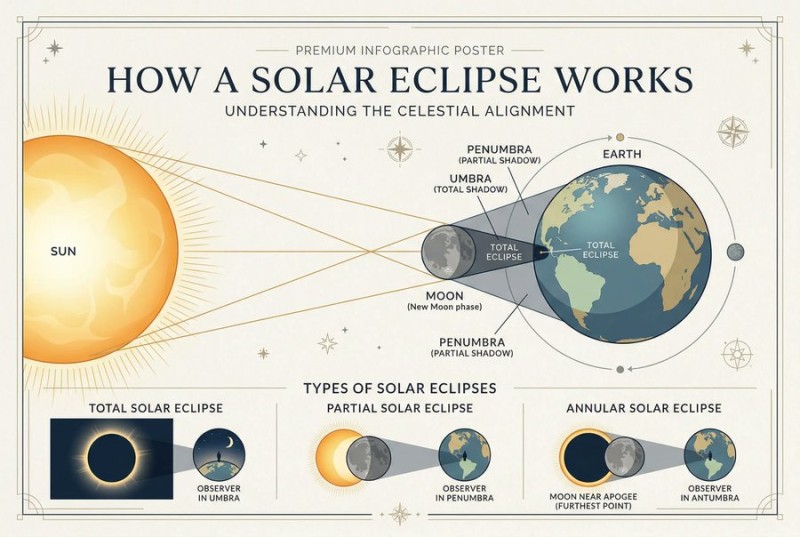

⬤ Google's Nano Banana 2 model is gaining traction in the AI community after users reported exceptionally fast, high-quality visual results from the engine inside OpenArt. According to commentary accompanying shared examples, the model generated complex graphic layouts at a speed and quality that testers described as "ridiculously fast and annoyingly good." The attached infographic-style image shows structured elements and consistent rendering, lending visual context to these claims.

⬤ The development matters because text-to-image models have traditionally traded generation speed against output quality. According to an AIGazine report, Google's Nano Banana 2 claims the #1 text-to-image spot with an Elo score of 1272 at half the cost of prior models, suggesting efficiency gains that could make AI imaging more accessible and practical across professional use cases.

⬤ While model benchmarks and cost metrics are important, the broader ecosystem reaction highlights two key trends: an emphasis on throughput and on cost-efficient scaling. Other recent research shows that performance can vary significantly with deployment context. For example, throughput tests on Google's Vertex platform showed a significant drop in Gemini 3.1 Pro performance, with reported figures of 46 to 50 tokens per second (TPS), illustrating how model choice and architecture interact with cloud infrastructure.

⬤ The reception of Nano Banana 2's combination of speed and quality matters because it could shift how creative professionals integrate generative imaging into their workflows. As Google and other providers refine the balance among latency, quality, and cost, these models may increasingly blur the line between experimental tools and scalable everyday solutions.

Peter Smith
